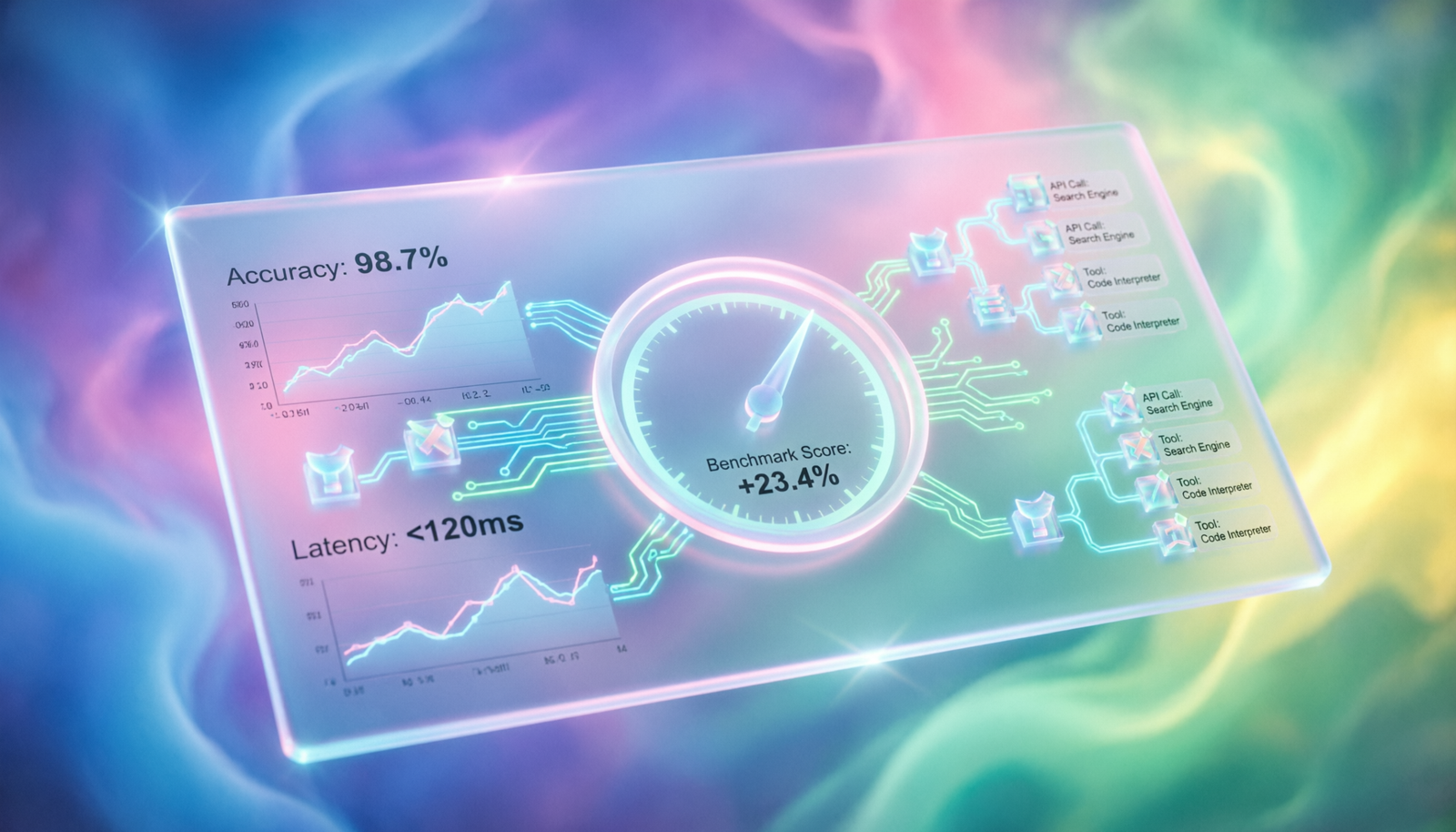

Benchmarking Agent Performance with LangSmith

Measure, trace, and iterate on your AI agents with precision — turning opaque LLM chains into quantifiable, reproducible experiments.

What is LangSmith?

LangSmith is an observability and evaluation platform built by LangChain to help engineers develop, debug, test, and monitor LLM-powered applications. At its core, it wraps your chains and agents with deep tracing, capturing every prompt, token, tool call, and latency number in a searchable, replayable log.

Unlike generic monitoring tools, LangSmith is purpose-built for the non-deterministic nature of language models: you can annotate runs, assemble evaluation datasets from real traffic, and run automated evaluators that score each trace on correctness, faithfulness, or any custom rubric you define.

Why Benchmark Agent Performance?

Agents are inherently stochastic — the same input can produce different tool-use paths, reasoning traces, and final answers. Without a rigorous evaluation loop, “it seemed to work in testing” becomes your only quality signal.

Systematic benchmarking lets you confidently answer questions such as: Did my prompt change improve accuracy? Does GPT-4o outperform Claude 3.5 Sonnet on my specific task? Did the latest LangChain update regress my retrieval agent? LangSmith lets you answer these with data, not intuition.

The Evaluation Loop

Instrument & Trace

Instrument your agent with the LangSmith SDK. Every run, including tool calls, sub-chains, and LLM calls, is captured as a nested trace tree via the @traceable decorator or LangChain callbacks.
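As a rough sketch of what this instrumentation can look like, the snippet below decorates two functions with `@traceable` so that the inner call shows up as a child run of the outer one. The function names and canned return values are hypothetical stand-ins for real tool and LLM calls, and the `ImportError` fallback exists only so the sketch runs where the `langsmith` package is not installed.

```python
try:
    from langsmith import traceable
except ImportError:  # fallback so the sketch runs without the SDK installed
    def traceable(*args, **kwargs):
        """No-op stand-in that mimics both @traceable and @traceable(...)."""
        if len(args) == 1 and callable(args[0]) and not kwargs:
            return args[0]
        return lambda fn: fn


@traceable(run_type="tool", name="lookup-docs")
def lookup_docs(query: str) -> list[str]:
    # Hypothetical tool: a real agent would query a search index here.
    return [f"doc about {query}"]


@traceable(run_type="chain", name="answer-question")
def answer_question(question: str) -> dict:
    # Calling one traced function from another yields a nested child run.
    docs = lookup_docs(question)
    return {"answer": f"Based on {len(docs)} document(s): {docs[0]}"}


result = answer_question("vector databases")
```

Traces are only sent when tracing is enabled and an API key is configured in the environment; otherwise the decorated functions simply run as normal.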

Build an Evaluation Dataset

Curate input–output pairs from production traffic, hand-written golden examples, or synthetic generation. Datasets are versioned so benchmarks remain reproducible over time.
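One way to curate such a dataset is sketched below. The log record fields (`question`, `answer`, `user_rating`) and the rating threshold are assumptions made for illustration, not a LangSmith schema. The `upload` helper relies on `Client.create_dataset` and `Client.create_examples`, needs an API key, and is deliberately not called here.

```python
def curate_examples(logged_runs: list[dict], min_rating: int = 4) -> list[dict]:
    """Keep well-rated, de-duplicated question/answer pairs as golden examples."""
    seen: set[str] = set()
    examples = []
    for run in logged_runs:
        key = run["question"].strip().lower()
        if run["user_rating"] < min_rating or key in seen:
            continue
        seen.add(key)
        examples.append(
            {"inputs": {"question": run["question"].strip()},
             "outputs": {"answer": run["answer"]}}
        )
    return examples


def upload(examples: list[dict], dataset_name: str) -> None:
    """Push curated examples to LangSmith (requires the SDK and an API key)."""
    from langsmith import Client  # imported lazily so curation works offline

    client = Client()
    dataset = client.create_dataset(dataset_name=dataset_name)
    client.create_examples(
        inputs=[e["inputs"] for e in examples],
        outputs=[e["outputs"] for e in examples],
        dataset_id=dataset.id,
    )


# Hypothetical production log records.
logged_runs = [
    {"question": "What is RAG?", "answer": "Retrieval-augmented generation.", "user_rating": 5},
    {"question": "what is rag? ", "answer": "Duplicate phrasing.", "user_rating": 5},
    {"question": "Define BLEU", "answer": "Unverified answer.", "user_rating": 2},
]
examples = curate_examples(logged_runs)
```

Keeping curation separate from upload means the filtering rules can be unit-tested without network access.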

Define Evaluators

Choose from built-in LangChain evaluators (exact match, embedding similarity, LLM-as-judge) or write custom Python functions that return a score and optional feedback string per run.
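A custom evaluator can be as small as the two functions sketched here. Each takes a run and a reference example and returns a dict with a `key`, a `score`, and optionally a `comment`. The `answer` field name and the `[n]` citation rule are assumptions for illustration, and `SimpleNamespace` objects stand in for the real run and example objects.

```python
import re
from types import SimpleNamespace


def exact_match(run, example) -> dict:
    """Score 1.0 when the answer matches the reference, ignoring case and whitespace."""
    predicted = run.outputs["answer"].strip().lower()
    expected = example.outputs["answer"].strip().lower()
    return {"key": "exact_match", "score": float(predicted == expected)}


def cites_source(run, example) -> dict:
    """Rule-based check: does the answer contain a bracketed citation such as [1]?"""
    found = re.search(r"\[\d+\]", run.outputs["answer"]) is not None
    comment = "citation found" if found else "no [n]-style citation in answer"
    return {"key": "cites_source", "score": float(found), "comment": comment}


# Stand-ins for the run and example objects passed to evaluators.
run = SimpleNamespace(outputs={"answer": " Paris [1] "})
example = SimpleNamespace(outputs={"answer": "paris [1]"})

scores = [exact_match(run, example), cites_source(run, example)]
```

Deterministic checks like these cost nothing to run, so they are a reasonable first layer before reaching for an LLM judge.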

Run & Compare Experiments

Execute evaluate() to batch-run your agent over the dataset. Results land in the LangSmith UI as an experiment — compare pass rates, latency, and cost across model variants side-by-side.
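The side-by-side comparison boils down to a few aggregates per experiment. The sketch below computes them from hand-written rows; the field names (`score`, `latency_s`, `cost_usd`), the pass threshold, and all numbers are hypothetical and do not reflect LangSmith's export format.

```python
from statistics import median


def summarize(rows: list[dict], pass_threshold: float = 0.5) -> dict:
    """Collapse per-example results into headline numbers for one experiment."""
    passed = sum(1 for r in rows if r["score"] >= pass_threshold)
    return {
        "pass_rate": passed / len(rows),
        "median_latency_s": round(median(r["latency_s"] for r in rows), 3),
        "total_cost_usd": round(sum(r["cost_usd"] for r in rows), 4),
    }


# Hypothetical per-example results for two model variants.
experiments = {
    "variant-a": [
        {"score": 1.0, "latency_s": 1.2, "cost_usd": 0.002},
        {"score": 0.0, "latency_s": 0.9, "cost_usd": 0.002},
        {"score": 1.0, "latency_s": 1.5, "cost_usd": 0.003},
        {"score": 0.0, "latency_s": 1.1, "cost_usd": 0.002},
    ],
    "variant-b": [
        {"score": 1.0, "latency_s": 2.0, "cost_usd": 0.006},
        {"score": 1.0, "latency_s": 2.4, "cost_usd": 0.007},
        {"score": 1.0, "latency_s": 1.8, "cost_usd": 0.006},
        {"score": 0.0, "latency_s": 2.2, "cost_usd": 0.008},
    ],
}

report = {name: summarize(rows) for name, rows in experiments.items()}
for name, stats in report.items():
    print(name, stats)
```

In this made-up data, the second variant passes more examples but is slower and three times as expensive, which is exactly the kind of trade-off a comparison view should surface.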

Running Your First Benchmark

Below is a minimal but complete example: tracing an agent, constructing a dataset, and running an LLM-as-judge evaluation in under 40 lines.

    # Assumes LANGSMITH_API_KEY and OPENAI_API_KEY are set in the environment.
    from langsmith import Client, traceable, evaluate
    from langchain_openai import ChatOpenAI
    from langchain.agents import AgentExecutor, create_openai_tools_agent

    # `executor` is assumed to be an AgentExecutor built elsewhere, e.g. from
    # create_openai_tools_agent(llm, tools, prompt); its construction is omitted.

    # ── 1. Instrument your agent ──────────────────────────────
    @traceable(run_type="chain", name="research-agent")
    def run_agent(inputs: dict) -> dict:
        # AgentExecutor.invoke takes a dict keyed by the prompt's input variable
        # (conventionally "input") and returns a dict containing "output".
        result = executor.invoke({"input": inputs["question"]})
        return {"answer": result["output"]}

    # ── 2. Define an LLM-as-judge evaluator ──────────────────
    def correctness_evaluator(run, example):
        judge = ChatOpenAI(model="gpt-4o-mini", temperature=0)
        prompt = f"""
        Rate the answer 0-1 for factual correctness.
        Question: {example.inputs['question']}
        Reference: {example.outputs['answer']}
        Agent: {run.outputs['answer']}
        Respond with ONLY a float between 0 and 1.
        """
        score = float(judge.invoke(prompt).content.strip())
        return {"key": "correctness", "score": score}

    # ── 3. Run the benchmark ──────────────────────────────────
    client = Client()
    results = evaluate(
        run_agent,
        data="my-agent-dataset-v3",
        evaluators=[correctness_evaluator],
        experiment_prefix="gpt4o-vs-claude35",
        max_concurrency=8,
        client=client,
    )
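The bare `float(...)` call in `correctness_evaluator` raises an error whenever the judge adds any text around the number. A more forgiving parser might look like the sketch below; `parse_score` is a hypothetical helper, not part of LangSmith or LangChain, and scoring an unparseable reply as `0.0` is one possible policy among several.

```python
import re


def parse_score(text: str, default: float = 0.0) -> float:
    """Extract the first number from a judge reply and clamp it to [0, 1]."""
    match = re.search(r"-?(?:\d+\.?\d*|\.\d+)", text)
    if match is None:
        return default
    return min(1.0, max(0.0, float(match.group())))


clean = parse_score("0.8")
wrapped = parse_score("Score: 0.75 (mostly correct)")
missing = parse_score("I cannot rate this answer.")
too_high = parse_score("1.5")
```

Inside the evaluator, this would replace the direct conversion with `parse_score(judge.invoke(prompt).content)`. If unparseable replies should be excluded rather than counted as failures, a distinct sentinel value is a better default than `0.0`.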

Evaluator Comparison

| Evaluator | Best For | Cost | Type |
|---|---|---|---|
| Exact Match | Closed-form Q&A, classification labels | Free | Deterministic |
| Embedding Similarity | Semantic answer equivalence | Low | Embedding |
| LLM-as-Judge | Open-ended answers, reasoning quality | Medium | LLM |
| Trajectory Eval | Multi-step agent tool-use paths | Medium | LLM |
| Custom Python | Domain-specific rules, regex, SQL checks | Free | Programmatic |
| Human Annotation | Ground truth labeling, golden datasets | High | Manual |

Tips for Reliable Benchmarks

Version your datasets. Never mutate a dataset mid-experiment. Create a new named version so historical experiment results remain reproducible six months later when you revisit them.

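Run at least 30 examples per experiment. LLM outputs are noisy, and small samples exaggerate that noise: with 30 examples, a single flipped answer moves accuracy by about 3.3 points, so a swing of a few points can be pure variance. Treat 30 as a floor, and check for statistical significance before shipping a prompt change.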
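To get a feel for how wide the uncertainty is at this sample size, the sketch below computes an approximate 95% confidence interval for a pass rate using the Wilson score method. The choice of method and the example counts (24 passes out of 30) are illustrative assumptions, not something LangSmith reports by default.

```python
import math


def wilson_interval(passes: int, n: int, z: float = 1.96) -> tuple[float, float]:
    """Approximate 95% confidence interval for a pass rate (Wilson score method)."""
    if n == 0:
        raise ValueError("need at least one example")
    p = passes / n
    denom = 1 + z**2 / n
    centre = (p + z**2 / (2 * n)) / denom
    half = z * math.sqrt(p * (1 - p) / n + z**2 / (4 * n**2)) / denom
    return centre - half, centre + half


low, high = wilson_interval(passes=24, n=30)
print(f"80% pass rate on 30 examples: plausible range {low:.2f} to {high:.2f}")
```

For 24 of 30, the interval runs from roughly 0.63 to 0.90, so two experiments that differ by a few points at this size are not distinguishable without more examples or repeated runs.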

Track cost alongside quality. LangSmith records token usage per run. A model that scores 5% higher but costs 3× more may not be the right production choice — surface both dimensions in your experiment views.

Use real production inputs. Synthetic examples often miss the long tail of weird user phrasing that breaks agents. Seed your dataset with logged production queries, then filter to interesting failure cases.