Deploying Scalable Agents

A production-grade guide to architecting, deploying, and scaling AI agents across distributed infrastructure — from single-node prototypes to enterprise-grade agent fleets.

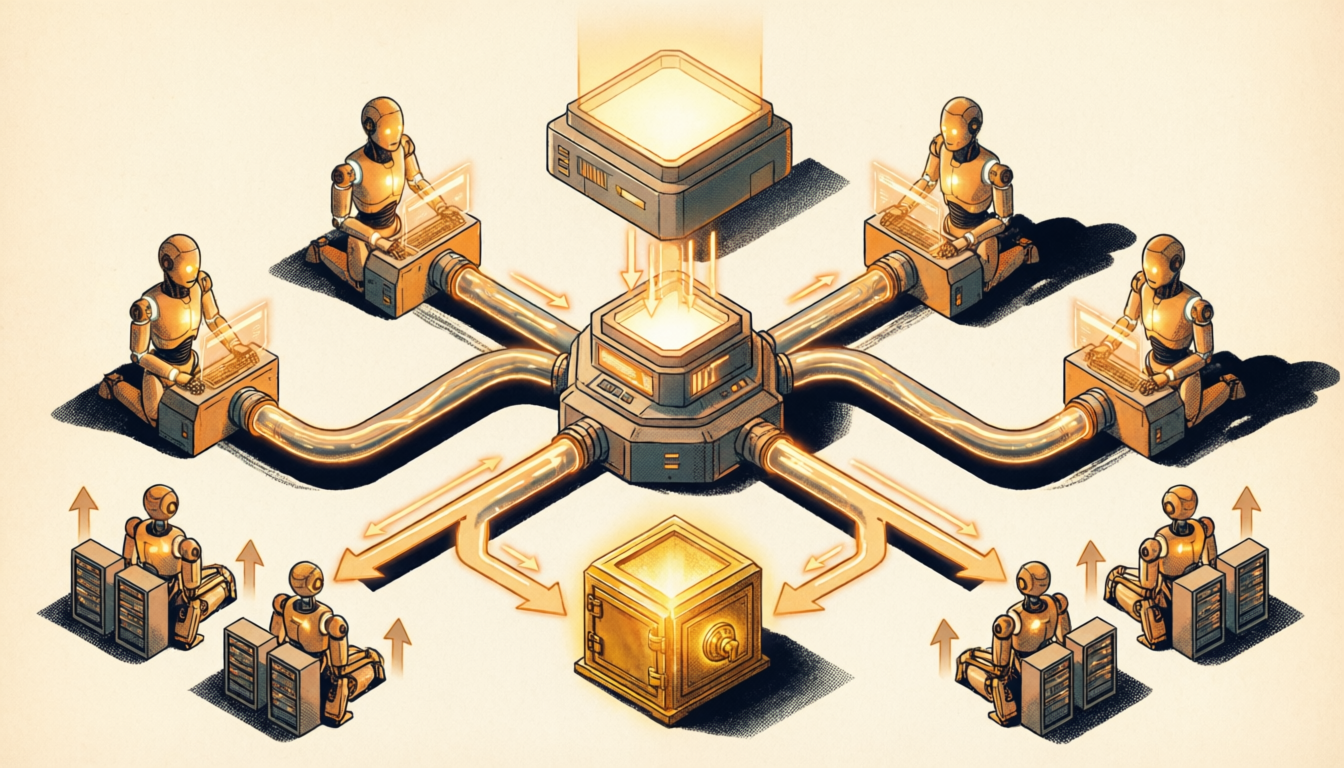

What Are Scalable Agents?

The core shift is from one agent doing everything sequentially to many specialized agents working in parallel, orchestrated by a central dispatcher. This unlocks enterprise-grade throughput, fault tolerance, and cost efficiency at scale.

Deployment Architecture Flow

Six Core Pillars

Orchestration Layer

The central coordinator. Accepts tasks, routes them to the right agent type, tracks in-flight jobs, handles retries, and aggregates results. Can be built with Temporal, Celery, or custom Kafka consumers.

Stateless Agent Workers

Each agent instance holds no persistent state between tasks. All context is passed in the task payload or fetched from the state store. Because any worker can then handle any task, the pool scales horizontally by adding more containers.

Message Queue

Decouples producers (clients) from consumers (agents). Acts as a buffer during traffic spikes. Enables durable delivery, priority routing, dead-letter queues, and at-least-once processing semantics.
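The redelivery and dead-letter behaviour described above can be illustrated with a toy in-memory queue. This is a minimal sketch, not a broker: `InMemoryQueue`, `Message`, and `flaky_handler` are hypothetical names, and a real deployment would rely on the broker's own acknowledgement and dead-letter features.

```python
from collections import deque
from dataclasses import dataclass, field


@dataclass
class Message:
    task_id: str
    body: dict
    attempts: int = 0


@dataclass
class InMemoryQueue:
    """Toy stand-in for a broker; shows redelivery and dead-lettering only."""
    max_attempts: int = 3
    pending: deque = field(default_factory=deque)
    dead_letter: list = field(default_factory=list)

    def publish(self, task_id: str, body: dict) -> None:
        self.pending.append(Message(task_id, body))

    def consume(self, handler) -> None:
        """Deliver each message until the handler succeeds or attempts run out."""
        while self.pending:
            msg = self.pending.popleft()
            msg.attempts += 1
            try:
                handler(msg)  # returning normally acts as the acknowledgement
            except Exception:
                if msg.attempts >= self.max_attempts:
                    self.dead_letter.append(msg)  # park for inspection
                else:
                    self.pending.append(msg)      # redeliver later


def flaky_handler(msg: Message) -> None:
    # Hypothetical handler: "bad" tasks always fail, "flaky" tasks fail once.
    if msg.body.get("kind") == "bad":
        raise ValueError("cannot process")
    if msg.body.get("kind") == "flaky" and msg.attempts < 2:
        raise TimeoutError("transient failure")


queue = InMemoryQueue(max_attempts=3)
queue.publish("t1", {"kind": "ok"})
queue.publish("t2", {"kind": "flaky"})
queue.publish("t3", {"kind": "bad"})
queue.consume(flaky_handler)
print([m.task_id for m in queue.dead_letter])
```

Because a failed message is delivered again, the handler may see the same task more than once, which is why at-least-once delivery is usually paired with idempotent handlers.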

Shared State Store

Centralized storage for agent memory, tool outputs, and task results. Agents read/write to Redis, DynamoDB, or Postgres — never to local memory — ensuring any worker can pick up a paused task.

Observability Stack

Full-stack visibility: distributed tracing (OpenTelemetry), metrics (Prometheus/Grafana), structured logs (Loki), and alerting. Every agent action, token count, latency, and error is captured and queryable.

Auto-Scaler

Dynamically adjusts the number of agent workers based on queue depth, task latency, and CPU/memory metrics. Kubernetes HPA, KEDA, or custom controllers handle provisioning and teardown.
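Queue-depth scaling usually reduces to a proportional rule: divide the backlog by the number of tasks one worker should own, then clamp to the pool limits. The sketch below assumes that rule; `desired_replicas` and its parameters are illustrative names, not the API of HPA or KEDA, and the 5 and 50 bounds are example values.

```python
import math


def desired_replicas(queue_depth: int, tasks_per_worker: int,
                     min_replicas: int = 5, max_replicas: int = 50) -> int:
    """Return a worker count proportional to backlog, clamped to pool limits."""
    if tasks_per_worker <= 0:
        raise ValueError("tasks_per_worker must be positive")
    needed = math.ceil(queue_depth / tasks_per_worker)
    return max(min_replicas, min(max_replicas, needed))


for depth in (0, 230, 5000):
    print(depth, "->", desired_replicas(depth, tasks_per_worker=10))
```

The lower bound keeps a warm pool running when the queue is empty; the upper bound caps spend during extreme bursts.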

Scaling Design Patterns

Real-World Examples

Enterprise Document Processing

1. 10,000 PDF contracts are uploaded to an S3 bucket, triggering SQS events
2. Orchestrator fans out one task per document to the worker pool
3. 50 parallel agents extract clauses, dates, and risk flags simultaneously
4. Results are written to a shared Postgres DB as each agent completes
5. Fan-in aggregator builds the final report and triggers a notification to the user
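The fan-out and fan-in steps above can be sketched locally with a thread pool standing in for the worker fleet. Everything here is an assumption for illustration: `extract_from_document` is a placeholder for an agent call, and the keyword check replaces real clause extraction.

```python
from concurrent.futures import ThreadPoolExecutor


def extract_from_document(doc: dict) -> dict:
    """Placeholder for one agent call; a real worker would invoke an LLM here."""
    text = doc["text"].lower()
    return {"doc_id": doc["id"], "risk_flag": "indemnify" in text}


def process_batch(docs: list[dict], max_workers: int = 50) -> dict:
    # Fan-out: one task per document, run concurrently.
    with ThreadPoolExecutor(max_workers=max_workers) as pool:
        results = list(pool.map(extract_from_document, docs))
    # Fan-in: aggregate per-document results into a single report.
    flagged = [r["doc_id"] for r in results if r["risk_flag"]]
    return {"documents": len(results), "flagged": flagged}


docs = [
    {"id": f"contract-{i}",
     "text": "Supplier shall indemnify Buyer" if i % 4 == 0 else "Standard terms"}
    for i in range(20)
]
report = process_batch(docs)
print(report["documents"], "documents,", len(report["flagged"]), "flagged")
```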

E-commerce Customer Support Fleet

1. Customer messages arrive via webhook into Redis queue
2. Pool of 20 support agents pull tasks as they become free
3. Each agent fetches customer order history from shared store
4. Resolves issue autonomously or escalates to human queue
5. KEDA auto-scales pool from 5→50 agents during peak hours

Research Pipeline Agent System

1. Nightly cron triggers research tasks for 500 competitor companies
2. Stage 1 agents: web search + scrape (search-specialist workers)
3. Stage 2 agents: summarize and extract insights (analysis workers)
4. Stage 3 agents: cross-reference and score competitive threats
5. Final agent compiles executive brief, emails stakeholders by 7am
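The staged hand-off above amounts to passing one task record through an ordered list of stage functions, each adding its output for the next stage. The sketch below assumes that shape; the stage bodies are placeholders, and `run_pipeline` is a hypothetical helper rather than part of any framework.

```python
from typing import Callable

Stage = Callable[[dict], dict]


def search(task: dict) -> dict:
    # Stage 1 placeholder: a real worker would search and scrape the web.
    return {**task, "pages": [f"raw page about {task['company']}"]}


def summarize(task: dict) -> dict:
    # Stage 2 placeholder: a real worker would call an LLM on the pages.
    return {**task, "summary": f"{len(task['pages'])} page(s) reviewed"}


def score(task: dict) -> dict:
    # Stage 3 placeholder: a real worker would cross-reference findings.
    return {**task, "threat_score": len(task["pages"])}


def run_pipeline(task: dict, stages: list[Stage]) -> dict:
    """Pass the task through each stage in order, returning the final record."""
    for stage in stages:
        task = stage(task)
    return task


result = run_pipeline({"company": "acme"}, [search, summarize, score])
print(result["summary"], "| score:", result["threat_score"])
```

In a distributed deployment each stage would typically read from its own queue, so the search and analysis pools can scale independently.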

CI/CD Code Review Agent Fleet

1. New PR opened on GitHub triggers webhook → orchestrator
2. Supervisor agent splits diff into file-level review tasks
3. Specialist sub-agents run in parallel: security, style, logic, tests
4. Each sub-agent posts inline comments via GitHub API
5. Supervisor collects all reviews, posts final summary + approve/request changes

Financial Data Monitoring Agents

1. Kafka streams market events (price changes, news, filings) continuously
2. Event router dispatches each signal type to specialized agent pool
3. Agents evaluate signals against portfolio rules in shared state
4. High-priority signals trigger immediate alerts with analysis
5. Hourly summary agents aggregate findings into digest reports
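The event-router step above can be sketched as a lookup from signal type to pool name, with a fallback for unknown types. The pool names, event fields, and `route_events` helper are assumptions made for this example.

```python
from collections import defaultdict

# Hypothetical mapping from signal type to the agent pool that handles it.
ROUTES = {
    "price_change": "pricing-agents",
    "news": "news-agents",
    "filing": "filings-agents",
}


def route_events(events, routes=ROUTES, fallback="triage-agents"):
    """Group events by destination pool; unknown types go to the fallback."""
    pools = defaultdict(list)
    for event in events:
        pools[routes.get(event["type"], fallback)].append(event)
    return dict(pools)


events = [
    {"type": "price_change", "symbol": "ABC", "delta_pct": -4.2},
    {"type": "news", "headline": "ABC announces recall"},
    {"type": "filing", "form": "8-K"},
    {"type": "rumor", "text": "unverified"},
]
batches = route_events(events)
for pool, items in batches.items():
    print(pool, len(items))
```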

Multi-Region Global Agent Deployment

1. API gateway geo-routes requests to nearest regional cluster
2. Each region runs an independent agent pool (US, EU, APAC)
3. Shared state synced via global Redis or CockroachDB cluster
4. Health checks detect region failure → traffic rerouted automatically
5. Global orchestrator ensures no duplicate task execution across regions

Worker Pool in Python

    # Scalable Agent Worker Pool: Celery + Redis + Claude
    import json

    import anthropic
    from celery import Celery
    from redis import Redis

    # ── Infrastructure Setup ──────────────────────────────────
    app = Celery("agent_pool",
                 broker="redis://localhost:6379/0",
                 backend="redis://localhost:6379/1")
    store = Redis(host="localhost", port=6379, db=2)  # shared state
    client = anthropic.Anthropic()

    # ── Agent Worker (runs on N containers) ───────────────────
    @app.task(bind=True, max_retries=3,
              autoretry_for=(Exception,), retry_backoff=True)
    def run_agent_task(self, task_id: str, task_payload: dict) -> dict:
        """Stateless agent worker: fetches context, runs LLM, stores result."""
        # 1. Load shared context from state store
        ctx_raw = store.get(f"ctx:{task_payload['session_id']}")
        context = json.loads(ctx_raw) if ctx_raw else []

        # 2. Build message history + new task
        messages = context + [{
            "role": "user",
            "content": task_payload["instruction"],
        }]

        # 3. Call the LLM (stateless: any worker can run any task)
        response = client.messages.create(
            model="claude-opus-4-6",
            max_tokens=1024,
            system=task_payload.get("system_prompt", "You are a helpful agent."),
            messages=messages,
        )
        result_text = response.content[0].text

        # 4. Persist result + updated context back to shared store
        updated_ctx = messages + [{"role": "assistant", "content": result_text}]
        store.setex(f"ctx:{task_payload['session_id']}", 3600,
                    json.dumps(updated_ctx))  # TTL 1hr

        result = {"task_id": task_id,
                  "output": result_text,
                  "tokens": response.usage.output_tokens,
                  "worker": self.request.hostname}
        store.setex(f"result:{task_id}", 7200, json.dumps(result))
        return result

    # ── Dispatcher (orchestrator side) ────────────────────────
    def dispatch_tasks(tasks: list[dict]) -> list:
        """Fan-out N tasks to the worker pool in parallel."""
        jobs = [
            run_agent_task.apply_async(
                args=[t["id"], t],
                queue=t.get("priority", "default"),  # route by priority
            )
            for t in tasks
        ]
        # Fan-in: wait for all results
        return [job.get(timeout=120) for job in jobs]

    # ── Example: dispatch 5 parallel tasks ───────────────────
    if __name__ == "__main__":
        tasks = [
            {"id": f"task-{i}",
             "session_id": "sess-001",
             "instruction": f"Analyze document {i} and extract key terms",
             "priority": "high" if i == 0 else "default"}
            for i in range(5)
        ]
        results = dispatch_tasks(tasks)
        for r in results:
            print(f"[{r['worker']}] Task {r['task_id']} → {r['tokens']} tokens")

    # Deploy N workers: celery -A agent_pool worker --concurrency=10 -Q high,default
    # Scale: docker-compose up --scale worker=50

Key Challenges

Multiple agents writing to shared state can cause race conditions. Use optimistic locking, atomic Redis operations, or event sourcing to ensure consistency without bottlenecks.
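Optimistic locking can be shown with a versioned compare-and-set: a writer reads a value with its version, computes the update, and commits only if the version is unchanged, retrying otherwise. The sketch below is an in-memory stand-in; `VersionedStore` and `append_with_retry` are hypothetical, and the internal lock only models the atomicity a real store would provide for a single operation.

```python
import threading


class VersionedStore:
    """In-memory stand-in for a store that supports compare-and-set."""

    def __init__(self):
        self._data = {}                 # key -> (version, value)
        self._lock = threading.Lock()   # models per-operation atomicity

    def get(self, key):
        with self._lock:
            return self._data.get(key, (0, None))

    def compare_and_set(self, key, expected_version, value) -> bool:
        with self._lock:
            current_version, _ = self._data.get(key, (0, None))
            if current_version != expected_version:
                return False            # someone else wrote first
            self._data[key] = (current_version + 1, value)
            return True


def append_with_retry(store, key, item, max_attempts=100):
    """Read, modify, and commit; retry if another writer got there first."""
    for _ in range(max_attempts):
        version, value = store.get(key)
        if store.compare_and_set(key, version, (value or []) + [item]):
            return
    raise RuntimeError("too much contention")


store = VersionedStore()
threads = [threading.Thread(target=append_with_retry, args=(store, "results", i))
           for i in range(8)]
for t in threads:
    t.start()
for t in threads:
    t.join()
version, value = store.get("results")
print(version, sorted(value))
```

No writer holds a lock while computing its update, so a slow agent cannot block the others; it only pays with a retry when it loses the race.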

100 parallel agents make 100× the LLM calls. Implement prompt caching, token budgets per task, model tiering (small models for simple tasks), and strict cost alerts per job type.
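A per-job token budget and a simple tiering rule might look like the sketch below. The tier names, the `complexity` field, and the `TokenBudget` class are assumptions for illustration; real budgets would be driven by the usage figures the LLM API returns.

```python
from dataclasses import dataclass


class BudgetExceeded(Exception):
    """Raised when a charge would push a job past its token limit."""


@dataclass
class TokenBudget:
    limit: int
    used: int = 0

    def charge(self, tokens: int) -> None:
        if self.used + tokens > self.limit:
            raise BudgetExceeded(f"{self.used + tokens} > {self.limit} tokens")
        self.used += tokens


def pick_model(task: dict) -> str:
    # Hypothetical tier names: simple tasks go to the cheaper model.
    return "small-model" if task.get("complexity") == "simple" else "large-model"


budget = TokenBudget(limit=2000)
budget.charge(800)
budget.charge(900)
try:
    budget.charge(500)      # would reach 2200, over the 2000 limit
    over_budget = False
except BudgetExceeded:
    over_budget = True
print(budget.used, over_budget, pick_model({"complexity": "simple"}))
```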

With retries and at-least-once delivery, agents may execute the same task twice. Design all agent actions to be idempotent using task IDs and deduplication keys in the state store.
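One way to make an action idempotent is to record its result under the task ID and return the stored result on any repeat delivery. The sketch below uses a plain dict as the state store; `run_once` and `send_summary` are hypothetical, and a shared store would need an atomic set-if-absent (for example Redis `SET` with the `NX` option) to close the gap between the check and the write.

```python
def run_once(results: dict, task_id: str, action):
    """Run action at most once per task_id; replay the stored result after that."""
    if task_id in results:
        return results[task_id]
    result = action()
    results[task_id] = result
    return result


emails_sent = []


def send_summary():
    # Side effect that must not happen twice for the same task.
    emails_sent.append("summary")
    return {"status": "sent"}


results = {}
first = run_once(results, "task-42", send_summary)
second = run_once(results, "task-42", send_summary)  # simulated redelivery
print(first == second, len(emails_sent))
```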

When a task fails across 30 workers, finding the root cause is hard. Correlate logs by task_id with distributed tracing (Jaeger/OTLP). Every LLM call must carry trace context headers.
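The log-correlation half of this can be sketched with the standard `logging` module: every record carries `task_id` and `worker` fields, so one filter reconstructs a task's path across workers. This covers structured logs only; trace context propagation through OpenTelemetry is not shown, and the field names and logger name are assumptions.

```python
import io
import json
import logging


class JsonFormatter(logging.Formatter):
    """Emit one JSON object per log record, including correlation fields."""

    def format(self, record: logging.LogRecord) -> str:
        return json.dumps({
            "level": record.levelname,
            "message": record.getMessage(),
            "task_id": getattr(record, "task_id", None),
            "worker": getattr(record, "worker", None),
        })


buffer = io.StringIO()                  # stands in for a log aggregator
handler = logging.StreamHandler(buffer)
handler.setFormatter(JsonFormatter())
logger = logging.getLogger("agent_pool_demo")
logger.setLevel(logging.INFO)
logger.propagate = False
logger.addHandler(handler)

logger.info("task started", extra={"task_id": "task-7", "worker": "w-03"})
logger.info("task started", extra={"task_id": "task-8", "worker": "w-11"})
logger.error("LLM call timed out", extra={"task_id": "task-7", "worker": "w-19"})

# Query: everything that happened to task-7, regardless of worker.
records = [json.loads(line) for line in buffer.getvalue().splitlines()]
task_7 = [r for r in records if r["task_id"] == "task-7"]
for r in task_7:
    print(r["worker"], r["level"], r["message"])
```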

Spinning up new containers adds latency during bursts. Maintain a minimum warm pool of agents always running, and use pre-warming strategies triggered by queue depth thresholds.

Agents operating with real tools at scale amplify blast radius. Run each agent in an isolated sandbox. Scope API keys per task type. Log all tool calls to an immutable audit trail.