🧠 Managing Agentic Memory

How AI agents store, retrieve, and manage information across tasks and time

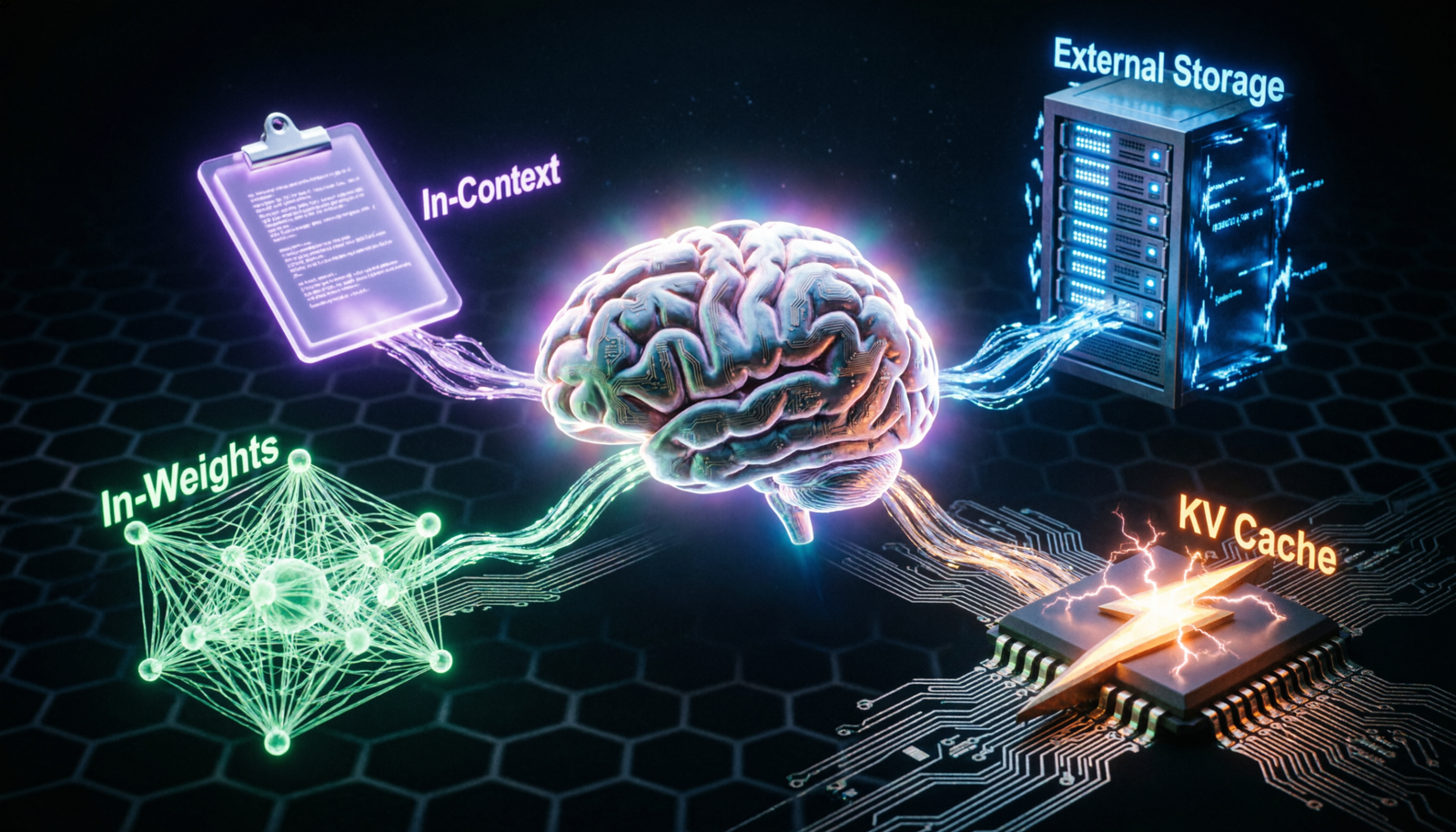

📦 Types of Agent Memory

In-Context Memory

Information held in the active prompt window — temporary and session-scoped.

Volatile

External Memory

Databases, vector stores, or files queried via tools at runtime.

Persistent

In-Weights Memory

Knowledge baked into model parameters during training or fine-tuning.

Static

KV Cache Memory

Attention key-value pairs cached to speed up token generation within an inference session.

Ephemeral

🔄 Agentic Memory Flow

🛠️ Memory Management Strategies

🗜️ Context Compression

Summarize old conversation turns to stay within token limits while preserving meaning.
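A minimal sketch of this idea, assuming a whitespace word count stands in for a real tokenizer and a placeholder function stands in for an LLM summarizer. The names `count_tokens`, `naive_summarize`, and `compress_history` are hypothetical and chosen only for illustration.

```python
from typing import Callable, List


def count_tokens(text: str) -> int:
    # Assumption: word count approximates token count.
    return len(text.split())


def naive_summarize(turns: List[str]) -> str:
    # Assumption: placeholder summarizer that keeps the first 8 words
    # of each turn. A real agent would likely call a model here.
    clipped = [" ".join(t.split()[:8]) for t in turns]
    return "Summary of earlier turns: " + " | ".join(clipped)


def compress_history(
    turns: List[str],
    budget: int,
    keep_recent: int = 2,
    summarize: Callable[[List[str]], str] = naive_summarize,
) -> List[str]:
    """Replace older turns with one summary when the history exceeds `budget`."""
    total = sum(count_tokens(t) for t in turns)
    if total <= budget or len(turns) <= keep_recent:
        return list(turns)
    cut = len(turns) - keep_recent
    old, recent = turns[:cut], turns[cut:]
    return [summarize(old)] + list(recent)
```

The most recent turns are kept verbatim because they usually carry the details the next step depends on. Only the older turns are collapsed.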

🔎 Retrieval-Augmented Generation

Use vector similarity search to pull relevant past facts into context on demand.
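The retrieval step can be sketched in plain Python, assuming embeddings are already available as lists of floats. The helpers `cosine`, `retrieve`, and `build_prompt` are hypothetical names, and a production system would more likely delegate the search to a vector store.

```python
import math
from typing import List, Sequence, Tuple


def cosine(a: Sequence[float], b: Sequence[float]) -> float:
    """Cosine similarity between two vectors; 0.0 if either is all zeros."""
    dot = sum(x * y for x, y in zip(a, b))
    norm_a = math.sqrt(sum(x * x for x in a))
    norm_b = math.sqrt(sum(y * y for y in b))
    return dot / (norm_a * norm_b) if norm_a and norm_b else 0.0


def retrieve(
    query_vec: Sequence[float],
    store: List[Tuple[str, Sequence[float]]],
    k: int = 2,
) -> List[str]:
    """Return the k stored texts most similar to the query vector."""
    ranked = sorted(store, key=lambda item: cosine(query_vec, item[1]), reverse=True)
    return [text for text, _ in ranked[:k]]


def build_prompt(question: str, facts: List[str]) -> str:
    """Place the retrieved facts ahead of the question in the prompt."""
    context = "\n".join(f"- {fact}" for fact in facts)
    return f"Relevant memories:\n{context}\n\nQuestion: {question}"
```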

🏷️ Episodic Memory Tagging

Tag memories with metadata (time, topic, importance) to enable filtered recall.
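One way to represent tagged memories is a small record type plus a filter function. This is only a sketch: the `Memory` fields, the 0.0 to 1.0 importance scale, and the `recall` helper are assumptions made for illustration.

```python
from dataclasses import dataclass
from datetime import datetime
from typing import List, Optional


@dataclass
class Memory:
    text: str
    topic: str
    importance: float  # assumed scale: 0.0 (trivial) to 1.0 (critical)
    created_at: datetime


def recall(
    memories: List[Memory],
    topic: Optional[str] = None,
    min_importance: float = 0.0,
    since: Optional[datetime] = None,
) -> List[Memory]:
    """Return memories matching every given filter, newest first."""
    hits = [
        m
        for m in memories
        if (topic is None or m.topic == topic)
        and m.importance >= min_importance
        and (since is None or m.created_at >= since)
    ]
    return sorted(hits, key=lambda m: m.created_at, reverse=True)
```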

🧹 Memory Pruning

Periodically evict stale, low-relevance, or contradicted memories to keep quality high.
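Eviction could be driven by a score that combines importance with age. The sketch below assumes an exponential decay with a 30-day half-life and a fixed score threshold. Both values, along with the `Entry` type and `prune` function, are illustrative choices rather than recommended settings.

```python
from dataclasses import dataclass
from typing import List


@dataclass
class Entry:
    text: str
    importance: float  # assumed scale: 0.0 to 1.0
    age_days: float
    contradicted: bool = False  # set when newer information disagrees


def retention_score(entry: Entry, half_life_days: float = 30.0) -> float:
    """Importance decayed by age; the score halves every `half_life_days`."""
    return entry.importance * 0.5 ** (entry.age_days / half_life_days)


def prune(
    entries: List[Entry],
    min_score: float = 0.2,
    half_life_days: float = 30.0,
) -> List[Entry]:
    """Drop contradicted entries and entries whose score fell below `min_score`."""
    return [
        e
        for e in entries
        if not e.contradicted and retention_score(e, half_life_days) >= min_score
    ]
```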

🔗 Working Memory Buffer

Maintain a short-term scratchpad for intermediate results during multi-step tasks.
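A scratchpad can be as simple as a bounded queue of step results that is rendered into the prompt on each iteration. The `Scratchpad` class below is a hypothetical sketch; the default capacity of five is an arbitrary assumption.

```python
from collections import deque
from typing import Deque, Tuple


class Scratchpad:
    """Short-term buffer that keeps only the most recent intermediate results."""

    def __init__(self, max_items: int = 5) -> None:
        # deque with maxlen silently drops the oldest item when full.
        self._items: Deque[Tuple[str, str]] = deque(maxlen=max_items)

    def note(self, step: str, result: str) -> None:
        self._items.append((step, result))

    def render(self) -> str:
        """Format the buffer as text that can be placed in the prompt."""
        return "\n".join(f"[{step}] {result}" for step, result in self._items)

    def clear(self) -> None:
        """Reset the buffer once the multi-step task is finished."""
        self._items.clear()
```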

📊 Memory Consolidation

Merge related episodic memories into generalized semantic knowledge over time.
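As a rough illustration, consolidation can be approximated by promoting an observation to a semantic fact once it has been seen often enough. The sketch assumes episodes are already normalized into `(subject, attribute, value)` triples; the `consolidate` function and its `min_support` threshold are hypothetical.

```python
from collections import Counter
from typing import Dict, List, Tuple

Episode = Tuple[str, str, str]  # (subject, attribute, value)


def consolidate(
    episodes: List[Episode],
    min_support: int = 2,
) -> Dict[Tuple[str, str], str]:
    """Promote repeated observations to one fact per (subject, attribute)."""
    counts = Counter(episodes)
    semantic: Dict[Tuple[str, str], str] = {}
    # most_common() yields the highest counts first, so the most frequent
    # value for each key is the one that gets recorded.
    for (subject, attribute, value), seen in counts.most_common():
        key = (subject, attribute)
        if seen >= min_support and key not in semantic:
            semantic[key] = value
    return semantic
```

Observations seen fewer than `min_support` times stay episodic and are not promoted.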

⚠️ Challenges & Solutions

Context Window Limits

LLMs have finite token windows — long histories simply won’t fit.

✅ Use compression, summarization, or sliding window approaches.
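The sliding window approach can be sketched as follows, assuming a word count approximates token usage. The `sliding_window` function is a hypothetical helper that walks backward from the newest turn and stops when the budget would be exceeded.

```python
from typing import Callable, List


def sliding_window(
    turns: List[str],
    budget: int,
    count_tokens: Callable[[str], int] = lambda t: len(t.split()),
) -> List[str]:
    """Keep the longest run of most recent turns that fits within `budget`."""
    kept: List[str] = []
    used = 0
    for turn in reversed(turns):
        cost = count_tokens(turn)
        if used + cost > budget:
            break  # everything older than this turn is dropped
        kept.append(turn)
        used += cost
    kept.reverse()  # restore chronological order
    return kept
```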

Memory Hallucination

The agent “remembers” facts that were never actually stored or learned.

✅ Ground retrieval in verifiable sources; log all memory writes.
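One possible shape for this is a store that records a source with every fact and appends each write to a log, so a recalled fact can be traced back to where it came from. The `AuditedMemory` class and its field names are assumptions for illustration only.

```python
import time
from typing import Dict, List, Optional, Tuple


class AuditedMemory:
    """Key-value memory that records provenance and logs every write."""

    def __init__(self) -> None:
        self._facts: Dict[str, Tuple[str, str]] = {}
        self.write_log: List[dict] = []

    def write(self, key: str, value: str, source: str) -> None:
        self._facts[key] = (value, source)
        self.write_log.append(
            {"key": key, "value": value, "source": source, "at": time.time()}
        )

    def recall(self, key: str) -> Optional[Tuple[str, str]]:
        """Return (value, source), or None when the key was never written."""
        return self._facts.get(key)
```

Returning `None` for unknown keys gives the calling code an explicit signal that nothing was stored, rather than leaving the model to fill the gap.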

Retrieval Latency

Querying external memory on every step slows the agent loop.

✅ Cache frequent queries; use approximate nearest-neighbor search (for example, the FAISS library or an HNSW index).
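Query caching can be sketched with the standard library's `functools.lru_cache`. Here `slow_lookup` is a hypothetical stand-in for a call to an external memory backend, and the counter exists only to show how often the backend is actually hit.

```python
from functools import lru_cache
from typing import Tuple

BACKEND_CALLS = {"count": 0}


def slow_lookup(query: str) -> Tuple[str, ...]:
    # Assumption: placeholder for an expensive vector-store or database query.
    BACKEND_CALLS["count"] += 1
    return (f"result for {query}",)


@lru_cache(maxsize=256)
def cached_lookup(query: str) -> Tuple[str, ...]:
    """Serve repeated queries from memory instead of the backend."""
    # Results are tuples so cached values cannot be mutated by callers.
    return slow_lookup(query)
```

A cache like this trades freshness for speed, so entries may need to be invalidated (for example with `cached_lookup.cache_clear()`) after the underlying memory is updated.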

Privacy & Security

Persistent memory can leak sensitive user data across sessions.

✅ Encrypt memory stores; scope memories to users with strict ACLs.
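User scoping can be illustrated with a store that partitions memories by owner and refuses cross-user reads. The `ScopedMemory` class is a hypothetical sketch of the access check only. Encryption at rest is out of scope here and would normally be handled by the storage layer or a vetted cryptography library.

```python
from typing import Dict, List


class ScopedMemory:
    """Memory store partitioned by user, with an owner-only read rule."""

    def __init__(self) -> None:
        self._by_user: Dict[str, List[str]] = {}

    def write(self, user_id: str, text: str) -> None:
        self._by_user.setdefault(user_id, []).append(text)

    def read(self, requester_id: str, owner_id: str) -> List[str]:
        # Assumption: the simplest possible ACL, where only the owner may read.
        if requester_id != owner_id:
            raise PermissionError(f"{requester_id} may not read {owner_id}'s memories")
        return list(self._by_user.get(owner_id, []))
```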

Memory Drift

Outdated beliefs persist and conflict with newer, accurate information.

✅ Add versioning and contradiction detection; prefer recent memories.
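A minimal sketch of versioning with contradiction detection, assuming a contradiction simply means a new value that differs from the latest stored value for the same key. The `VersionedMemory` class is hypothetical, and real contradiction detection would likely need semantic comparison rather than string equality.

```python
from dataclasses import dataclass
from typing import Dict, List, Optional


@dataclass
class Version:
    value: str
    number: int


class VersionedMemory:
    """Keeps every value written for a key and serves the most recent one."""

    def __init__(self) -> None:
        self._history: Dict[str, List[Version]] = {}

    def write(self, key: str, value: str) -> bool:
        """Store `value`; return True if it contradicts the previous value."""
        versions = self._history.setdefault(key, [])
        contradicted = bool(versions) and versions[-1].value != value
        if not versions or contradicted:
            versions.append(Version(value, len(versions) + 1))
        return contradicted

    def current(self, key: str) -> Optional[str]:
        versions = self._history.get(key)
        return versions[-1].value if versions else None

    def history(self, key: str) -> List[str]:
        return [v.value for v in self._history.get(key, [])]
```

Older versions are retained rather than overwritten, so a flagged contradiction can be reviewed or rolled back later.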