Prompt Optimization & Iterative Refinement

The craft of shaping language into precise instructions: turning vague requests into prompts that produce reliable, on-target outputs through deliberate, structured iteration.

Role & Context Priming

Assign the model an expert persona before stating the task. An opener such as “You are a senior UX researcher…” often shifts vocabulary, depth, and framing toward the domain, because the model conditions its output on the role it has been given.

Foundational

Few-Shot Exemplars

Provide 2–5 worked examples in the prompt body. The model infers format, tone, and reasoning patterns from demonstrations far more reliably than explicit description alone.
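A minimal sketch of how worked examples might be assembled into a prompt body. The `Input:` / `Output:` labels, the function name `build_few_shot_prompt`, and the sentiment task are illustrative assumptions, not a required format.

```python
from typing import Sequence, Tuple


def build_few_shot_prompt(
    instruction: str,
    examples: Sequence[Tuple[str, str]],
    query: str,
) -> str:
    """Join an instruction, 2-5 worked examples, and the new input into one prompt."""
    if not 2 <= len(examples) <= 5:
        raise ValueError("expected between 2 and 5 examples")
    parts = [instruction.strip(), ""]
    for source, target in examples:
        parts += [f"Input: {source}", f"Output: {target}", ""]
    # Leave the final Output slot empty so the model completes it.
    parts += [f"Input: {query}", "Output:"]
    return "\n".join(parts)


prompt = build_few_shot_prompt(
    "Classify the sentiment of each review as positive or negative.",
    [
        ("Loved it, would buy again.", "positive"),
        ("Broke after two days.", "negative"),
    ],
    "Arrived late but works well.",
)
print(prompt)
```

Keeping every example in the same layout as the final, unanswered slot is the point: the model sees the pattern it is expected to continue.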

High Impact

Chain-of-Thought

Append “Think step by step” or embed a reasoning scaffold. Asking for intermediate reasoning often improves accuracy on multi-step logic, math, and causal inference tasks.

Reasoning

Constraint Layering

Define what to do and what to avoid. Negative constraints (“Do not use bullet points”, “Avoid passive voice”) trim the output distribution as powerfully as affirmative direction.

Control

Output Format Anchoring

Specify structure explicitly: a JSON schema, Markdown headers, or numbered lists. Showing a template skeleton the model must fill removes most ambiguity about the desired structure.
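One way to anchor a format is to embed a skeleton in the prompt and then check the reply against the same skeleton. The sketch below assumes a hypothetical three-key schema (`title`, `summary`, `tags`); the key names and the wording of the instruction are placeholders.

```python
import json

# Hypothetical skeleton: the keys and their value types are assumptions.
TEMPLATE = {"title": "", "summary": "", "tags": []}


def anchored_prompt(task: str) -> str:
    """Append the JSON skeleton the reply is expected to fill in."""
    skeleton = json.dumps(TEMPLATE, indent=2)
    return (
        f"{task}\n\n"
        "Return only JSON matching this skeleton, with every key filled in:\n"
        f"{skeleton}"
    )


def matches_template(raw: str) -> bool:
    """Return True if raw parses as JSON with exactly the template's keys and types."""
    try:
        data = json.loads(raw)
    except json.JSONDecodeError:
        return False
    if not isinstance(data, dict) or set(data) != set(TEMPLATE):
        return False
    return all(isinstance(data[key], type(value)) for key, value in TEMPLATE.items())


print(anchored_prompt("Describe the attached article."))
```

Checking replies with `matches_template` turns format drift into something that can be counted rather than eyeballed.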

Structure

Temperature & Sampling

Tune temperature for creativity vs. determinism. Low values (0.1–0.3) for factual tasks; higher (0.7–1.0) for ideation. Combine with top-p for fine-grained diversity control.
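To see why low temperature pushes toward determinism, it helps to look at the standard formulation in which logits are divided by the temperature before the softmax. The sketch below uses made-up logits for three candidate tokens; real models and APIs may apply further sampling steps on top of this.

```python
import math
from typing import List


def softmax_with_temperature(logits: List[float], temperature: float) -> List[float]:
    """Convert logits to probabilities after scaling them by 1/temperature."""
    if temperature <= 0:
        raise ValueError("temperature must be positive")
    scaled = [value / temperature for value in logits]
    peak = max(scaled)  # subtract the max for numerical stability
    exps = [math.exp(value - peak) for value in scaled]
    total = sum(exps)
    return [value / total for value in exps]


example_logits = [2.0, 1.0, 0.5]  # illustrative values, not from a real model
for temperature in (0.2, 1.0):
    probs = softmax_with_temperature(example_logits, temperature)
    print(temperature, [round(p, 3) for p in probs])
```

At the lower setting nearly all probability mass sits on the top token, while at 1.0 the alternatives keep a meaningful share, which is where the extra variety comes from.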

Parameter

Decomposition Prompting

Break complex tasks into sequential sub-prompts. Each intermediate output feeds the next, distributing cognitive load across a chain rather than demanding everything in one shot.
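A sketch of such a chain, assuming the model is wrapped as a plain function from prompt text to reply text. The `Model` alias, the `{input}` placeholder, and `fake_model` are stand-ins for whatever client is actually in use.

```python
from typing import Callable, List

Model = Callable[[str], str]  # assumed interface: prompt text in, reply text out


def run_chain(model: Model, steps: List[str], initial_input: str) -> List[str]:
    """Run each step template in order, feeding every output into the next step."""
    outputs: List[str] = []
    current = initial_input
    for template in steps:
        current = model(template.format(input=current))
        outputs.append(current)
    return outputs


def fake_model(prompt: str) -> str:
    """Stand-in for a real model call: reports which instruction it received."""
    return f"<reply to: {prompt.splitlines()[0]}>"


steps = [
    "Extract the key claims from this text:\n{input}",
    "Rank these claims by business impact:\n{input}",
    "Write a one-paragraph brief from this ranking:\n{input}",
]
for output in run_chain(fake_model, steps, "Quarterly report text goes here."):
    print(output)
```

Returning every intermediate output, not just the last, makes it possible to see which step in the chain went wrong.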

Architecture

Self-Critique Loops

Ask the model to evaluate its own output against a rubric, then revise. A two-pass approach — generate, then critique-and-improve — consistently yields more polished results.
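The two-pass approach can be sketched as three calls: draft, critique against the rubric, then revise. The `Model` alias and the prompt wording below are assumptions for illustration; any callable that maps a prompt to a reply would fit.

```python
from typing import Callable

Model = Callable[[str], str]  # assumed interface: prompt text in, reply text out


def generate_then_revise(model: Model, task: str, rubric: str) -> str:
    """Generate a draft, critique it against the rubric, then return a revision."""
    draft = model(task)
    critique = model(
        "Evaluate the draft against the rubric and list concrete problems.\n\n"
        f"Rubric:\n{rubric}\n\nDraft:\n{draft}"
    )
    revised = model(
        "Revise the draft to fix the problems listed.\n\n"
        f"Task:\n{task}\n\nDraft:\n{draft}\n\nProblems:\n{critique}"
    )
    return revised
```

Passing the original task into the revision call keeps the second pass from fixing the critique's points while drifting away from what was asked.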

Refinement

Audience Targeting

Specify the reader’s expertise level and purpose. “Explain to a non-technical CFO” produces fundamentally different output than “Explain to a senior ML engineer” for the same topic.

Calibration

“A prompt is not a command; it is a contract. The more precisely you define its terms, the more faithfully the model fulfils its obligations.”

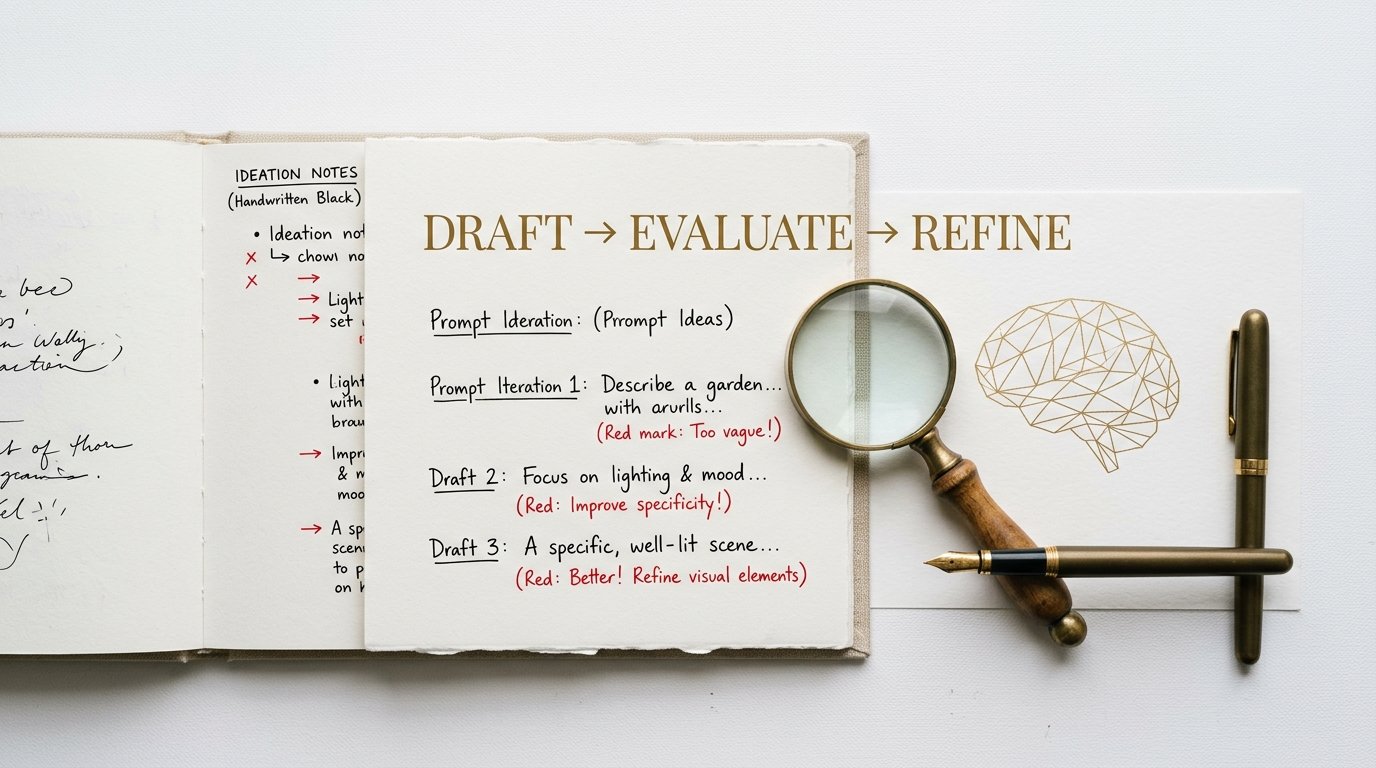

Prompt Engineering Principle

Draft

Write an initial prompt. Prioritise clarity of intent over completeness. Ship an imperfect draft early.

Evaluate

Run multiple samples. Identify failure modes: hallucinations, format drift, tone mismatch.

Hypothesize

Form a theory about why failures occur. Change only one variable per iteration.

Modify

Apply a targeted edit — add an example, tighten a constraint, reorder sections.

Compare

A/B test the old and new prompt across identical inputs. Measure the delta objectively.
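A paired comparison might look like the sketch below: both prompts run over the same inputs and a scoring function turns each output into a number. The `Model` and `Scorer` interfaces, the `{input}` placeholder, and the stub model are assumptions; in practice the scorer would encode whatever "better" means for the task.

```python
from statistics import mean
from typing import Callable, Dict, List

Model = Callable[[str], str]          # assumed: prompt text in, reply text out
Scorer = Callable[[str, str], float]  # assumed: (input, output) -> score


def ab_compare(
    model: Model,
    prompt_a: str,
    prompt_b: str,
    inputs: List[str],
    score: Scorer,
) -> Dict[str, float]:
    """Score both prompt templates on identical inputs and report the difference."""
    scores_a = [score(x, model(prompt_a.format(input=x))) for x in inputs]
    scores_b = [score(x, model(prompt_b.format(input=x))) for x in inputs]
    mean_a, mean_b = mean(scores_a), mean(scores_b)
    return {"mean_a": mean_a, "mean_b": mean_b, "delta": mean_b - mean_a}


def stub_model(prompt: str) -> str:
    """Stand-in model: answers with a bullet only when asked for bullets."""
    return "- key point" if "bullet" in prompt else "A long paragraph."


def starts_with_bullet(_input: str, output: str) -> float:
    return 1.0 if output.startswith("- ") else 0.0


report = ab_compare(
    stub_model,
    "Summarise: {input}",
    "Summarise as bullet points: {input}",
    ["doc one", "doc two"],
    starts_with_bullet,
)
print(report)
```

With a real model the outputs vary between runs, so each input would normally be sampled several times before trusting the delta.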

Commit

Version and document the winning prompt. Note what changed and why it helped.
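Versioning can be as light as an append-only list that stores each prompt with a note on what changed. The class and field names below are hypothetical; a Git repository or a prompt-management tool would serve the same purpose.

```python
from dataclasses import dataclass, field
from typing import List


@dataclass(frozen=True)
class PromptVersion:
    number: int
    text: str
    change_note: str  # what changed and why it helped


@dataclass
class PromptHistory:
    versions: List[PromptVersion] = field(default_factory=list)

    def commit(self, text: str, change_note: str) -> PromptVersion:
        """Append a new version; earlier versions are never modified."""
        version = PromptVersion(len(self.versions) + 1, text, change_note)
        self.versions.append(version)
        return version

    def latest(self) -> PromptVersion:
        return self.versions[-1]


history = PromptHistory()
history.commit("Summarise the article below.", "Initial draft.")
history.commit(
    "Summarise the article below in exactly 3 bullet points.",
    "Added a bullet count to stop format drift.",
)
print(history.latest().number, history.latest().change_note)
```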

Summarise the article below in exactly 3 bullet points, each under 20 words.

Lead each bullet with the business implication, not the finding.

Do not use jargon or passive voice.
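Constraints this concrete can be checked mechanically. The sketch below tests only the countable parts of the prompt above (exactly 3 bullets, each under 20 words); the bullet-marker pattern and the function name are assumptions, and jargon or passive voice would still need a human or model reviewer.

```python
import re
from typing import List

# Assumed bullet markers: "-", "*", "•", or a number followed by "." or ")".
BULLET = re.compile(r"^\s*(?:[-*•]|\d+[.)])\s+(.*\S)\s*$")


def check_summary(output: str, expected_bullets: int = 3, word_limit: int = 20) -> List[str]:
    """Return a list of format problems; an empty list means the output passes."""
    problems: List[str] = []
    bullets: List[str] = []
    for line in output.splitlines():
        if not line.strip():
            continue
        match = BULLET.match(line)
        if match:
            bullets.append(match.group(1))
        else:
            problems.append(f"non-bullet line: {line.strip()!r}")
    if len(bullets) != expected_bullets:
        problems.append(f"expected {expected_bullets} bullets, found {len(bullets)}")
    for index, text in enumerate(bullets, start=1):
        count = len(text.split())
        if count >= word_limit:
            problems.append(f"bullet {index} has {count} words (limit is under {word_limit})")
    return problems


sample = (
    "- Margins improve as supplier costs fall.\n"
    "- Hiring can slow without risking delivery.\n"
    "- Churn threatens next quarter's revenue."
)
print(check_summary(sample))
```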

— Accepts a file path or file-like object

— Returns a list of dicts with snake_case keys

— Raises descriptive ValueError on malformed rows

— Includes type hints and a docstring with an example

Do not use pandas. Use only stdlib modules.

One Variable at a Time

Change a single element per iteration or you cannot attribute improvement to any cause.

Be Positively Specific

Describe what you want, not just what you don’t. Vague constraints create vague outputs.

Version Everything

Treat prompts like code. Maintain a changelog and never overwrite a working version.

Test at Scale

One good output is an anecdote. Run 10–50 samples to confirm a prompt reliably performs.
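A pass rate over repeated samples makes reliability measurable. In this sketch `sample` stands for one call to the model with the prompt under test and `passes` for whatever check defines success; both are assumed interfaces, and the canned outputs stand in for real model replies.

```python
import itertools
from typing import Callable


def pass_rate(sample: Callable[[], str], passes: Callable[[str], bool], n: int = 20) -> float:
    """Draw n outputs and return the fraction that satisfy the check."""
    if n <= 0:
        raise ValueError("n must be positive")
    return sum(1 for _ in range(n) if passes(sample())) / n


# Canned outputs standing in for a model that drifts from the format 1 time in 4.
canned = itertools.cycle(["- fine", "- fine", "- fine", "drifted"])
rate = pass_rate(lambda: next(canned), lambda out: out.startswith("- "), n=20)
print(f"pass rate: {rate:.0%}")
```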

Decompose Complexity

A single 2,000-token mega-prompt rarely beats a disciplined chain of focused sub-prompts.

Show, Don’t Just Tell

An example is worth a paragraph of description. Include at least one worked demonstration.