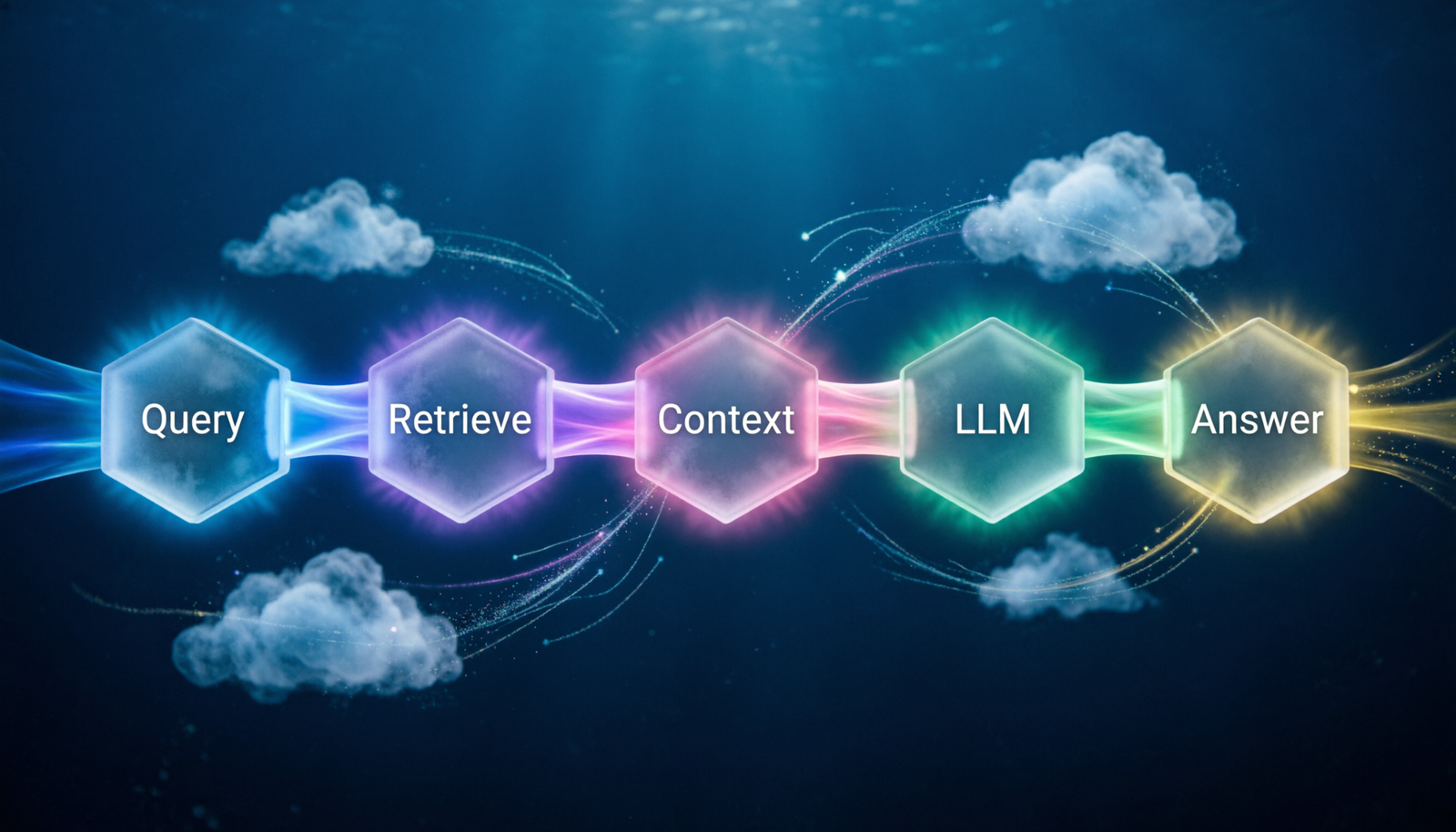

Retrieval-Augmented

Generation

A technique that grounds large language models in real, up-to-date knowledge — by fetching relevant documents before generating a response.

Vector Store

Documents are chunked and embedded into high-dimensional vectors. A similarity search finds the chunks most semantically relevant to the user’s query.

Embedding Model

Converts text into dense numerical representations. Similar meanings cluster nearby in vector space — enabling fuzzy, semantic matching beyond keywords.

Augmented Prompt

Retrieved chunks are injected into the prompt as grounding context. The LLM synthesizes this external knowledge with its parametric training.

Chunking Strategy

How you split documents matters enormously. Fixed-size, sentence-aware, and recursive character splitting each suit different document types.

Top-K Retrieval

Only the K most relevant chunks (typically 3–10) are passed to the model, balancing context richness against the LLM’s context window size.

Hallucination Guard

Because the model must stay grounded in retrieved text, RAG dramatically reduces confident but fabricated answers — a key reliability benefit.

RAG vs Fine-Tuning vs Prompting

| Dimension | Prompting Only | Fine-Tuning | RAG |

|---|---|---|---|

| Knowledge freshness | Static | Re-train needed | Live / updatable |

| Setup cost | Near zero | High (GPU time) | Moderate |

| Factual grounding | Low | Medium | High |

| Citable sources | No | No | Yes |

| Custom style/tone | Partial | Strong | Partial |

| Scales to large corpus | No | Tricky | Yes |

Minimal Python Example

# Minimal RAG pipeline with LangChain + FAISS

from langchain.embeddings import OpenAIEmbeddings

from langchain.vectorstores import FAISS

from langchain.chat_models import ChatOpenAI

from langchain.chains import RetrievalQA

# 1. Embed & index your documents

embeddings = OpenAIEmbeddings()

vectorstore = FAISS.from_documents(docs, embeddings)

# 2. Build retriever (top-4 chunks)

retriever = vectorstore.as_retriever(

search_kwargs={"k": 4}

)

# 3. Attach LLM and run

qa_chain = RetrievalQA.from_chain_type(

llm=ChatOpenAI(model="gpt-4o"),

retriever=retriever,

return_source_documents=True,

)

result = qa_chain.invoke({"query": "What is our refund policy?"})

print(result["result"])

# → Grounded answer with source citations ✨Common Use Cases

Enterprise Q&AAsk questions over internal wikis, policies, or Confluence pages.

Legal ResearchSurface relevant case law and statute excerpts instantly.

Customer SupportGround chatbots in live product docs and FAQs.

Medical LiteratureQuery PubMed abstracts with source citations.

Code SearchRetrieve relevant functions before generating code.

News SummarizationCondense recent articles beyond the model’s cutoff.