Advanced Prompt Engineering for Agentic Reasoning

Master the architecture of thought: design prompts that don’t just instruct but orchestrate multi-step reasoning, tool use, and autonomous decision-making.

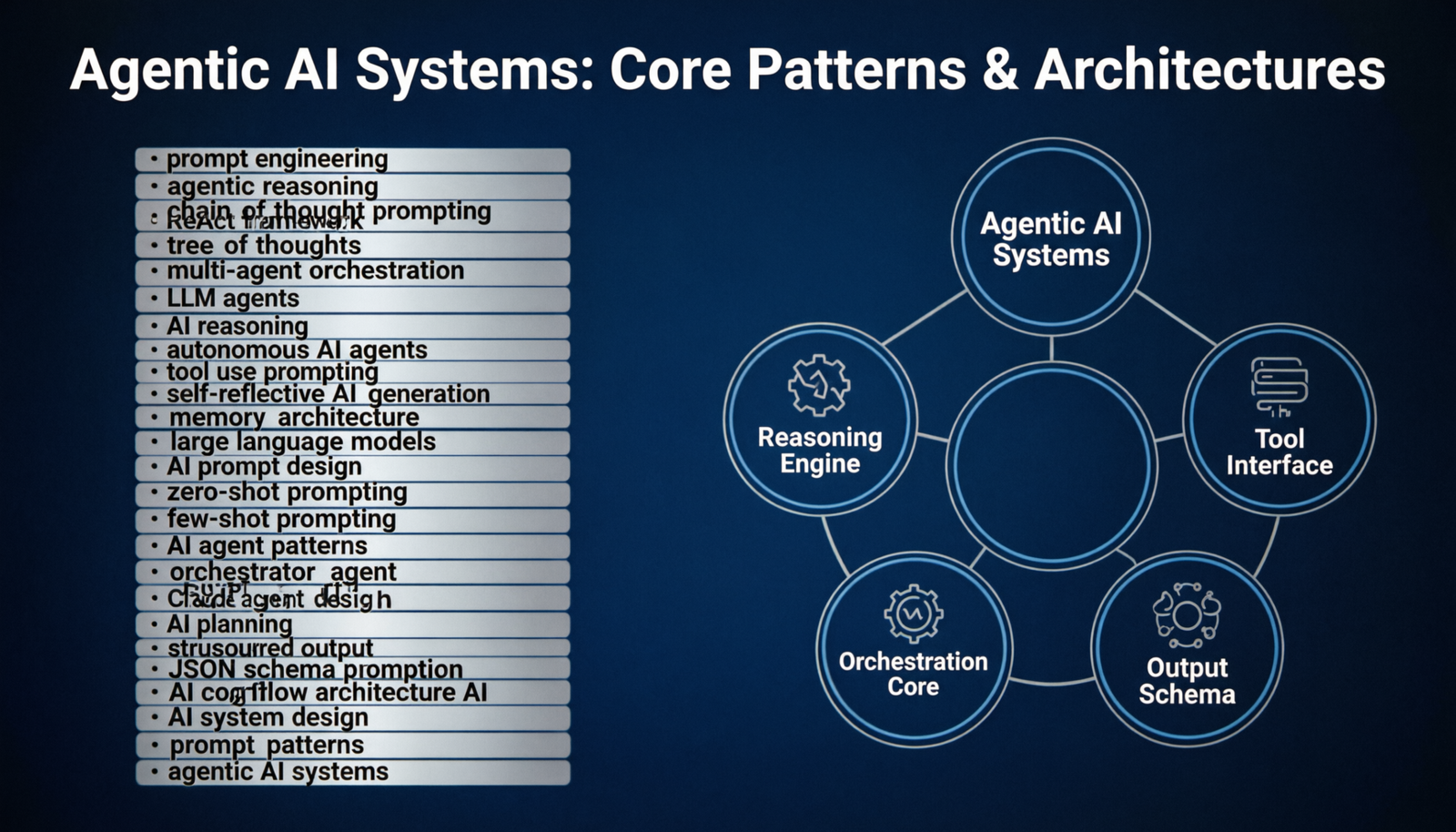

What is Agentic Reasoning?

Agentic reasoning is the capacity of a language model to decompose ambiguous goals into executable sub-tasks, maintain working memory across iterations, invoke external tools, evaluate intermediate results, and self-correct — all within a structured prompt architecture.
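The capacities in this definition can be made concrete as a small control loop. What follows is a minimal sketch under stated assumptions: `WorkingMemory` and `run_agent` are hypothetical names, the decomposition is a hard-coded `subtasks` list rather than model output, `flaky_tool` is a made-up tool that fails once on purpose, and `looks_valid` is a trivial check standing in for a model- or rule-based evaluator.

```python
from dataclasses import dataclass, field
from typing import Callable


@dataclass
class WorkingMemory:
    """Hypothetical agent state that persists across iterations."""
    goal: str
    subtasks: list[str]                                    # the decomposed plan
    results: dict[str, str] = field(default_factory=dict)  # accepted intermediate results
    log: list[str] = field(default_factory=list)           # every attempt, kept for later reasoning


def run_agent(memory: WorkingMemory,
              run_tool: Callable[[str, int], str],
              evaluate: Callable[[str], bool],
              max_retries: int = 2) -> WorkingMemory:
    for task in memory.subtasks:
        for attempt in range(max_retries + 1):
            result = run_tool(task, attempt)               # invoke an external tool
            memory.log.append(f"{task} [attempt {attempt}]: {result}")
            if evaluate(result):                           # evaluate the intermediate result
                memory.results[task] = result
                break
            # Self-correction here is only a retry; a real agent would feed the
            # failure recorded in memory.log back into its next prompt.
        else:
            memory.results[task] = "UNRESOLVED"            # retries exhausted
    return memory


def flaky_tool(task: str, attempt: int) -> str:
    # Hypothetical tool: times out on the first attempt at "fetch data".
    if task == "fetch data" and attempt == 0:
        return "ERROR: timeout"
    return f"ok: {task}"


def looks_valid(result: str) -> bool:
    return result.startswith("ok")


mem = WorkingMemory(goal="summarize sales",
                    subtasks=["fetch data", "compute totals", "write summary"])
run_agent(mem, flaky_tool, looks_valid)
print(len(mem.log))  # 4: one failed and one successful attempt, then two first-try successes
```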

Six Pillars of Agentic Prompting

The ReAct Prompt Pattern

A canonical ReAct system prompt structures the model’s cognitive loop as an explicit, parseable trace — enabling reliable tool dispatch and graceful error recovery.

```text
# ── Agent Identity ──────────────────────────────────
You are a reasoning agent with access to external tools.
Solve the user's task using the following loop:

THOUGHT:  // Articulate your current understanding
          // and what sub-task to tackle next.
ACTION:   // Invoke exactly one tool per step.
          tool_name(param_a="value", param_b=42)
OBSERVE:  // The tool result is injected here automatically.

# Repeat THOUGHT → ACTION → OBSERVE until resolved.
# When confident, emit:
ANSWER:   // Your final, grounded response to the user.

# ── Constraints ─────────────────────────────────────
- Never fabricate tool results.
- Always cite the OBSERVE block that supports your ANSWER.
- Maximum 12 reasoning steps before requesting clarification.
```
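A trace in this format is easy to parse, which is what makes tool dispatch reliable. The sketch below shows one possible harness, not a reference implementation. It assumes the model writes each tool call right after `ACTION:`, uses a stub `scripted_model` that replays canned replies, and registers a hypothetical `add` tool. The call is parsed with Python's `ast` module so arguments are read as literals and never executed; the harness (not the model) appends the `OBSERVE:` line; and the loop stops at `ANSWER:` or after 12 steps, matching the prompt's constraint.

```python
import ast
import re
from typing import Callable

MAX_STEPS = 12  # mirrors "Maximum 12 reasoning steps" in the system prompt
ACTION_RE = re.compile(r"^ACTION:\s*(.+)$", re.MULTILINE)
ANSWER_RE = re.compile(r"^ANSWER:\s*(.+)", re.MULTILINE | re.DOTALL)


def parse_action(text: str) -> tuple[str, dict]:
    """Parse `tool_name(a="x", b=42)` into a tool name and literal keyword args."""
    call = ast.parse(text.strip(), mode="eval").body
    if not isinstance(call, ast.Call) or not isinstance(call.func, ast.Name):
        raise ValueError(f"not a tool call: {text!r}")
    if call.args:
        raise ValueError("use keyword arguments only")
    return call.func.id, {kw.arg: ast.literal_eval(kw.value) for kw in call.keywords}


def react_loop(model: Callable[[str], str],
               tools: dict[str, Callable[..., str]],
               task: str) -> str:
    transcript = f"TASK: {task}\n"
    for _ in range(MAX_STEPS):
        reply = model(transcript)
        transcript += reply + "\n"
        if (m := ANSWER_RE.search(reply)):
            return m.group(1).strip()
        if (m := ACTION_RE.search(reply)) is None:
            transcript += "OBSERVE: ERROR: no ACTION found; emit exactly one ACTION.\n"
            continue
        try:
            name, kwargs = parse_action(m.group(1))
            result = tools[name](**kwargs)
        except Exception as exc:  # unknown tool, bad syntax, or tool failure
            result = f"ERROR: {exc}"
        transcript += f"OBSERVE: {result}\n"  # written by the harness, never the model
    return "Step limit reached; requesting clarification."


def scripted_model(replies: list[str]) -> Callable[[str], str]:
    """Stub model that ignores the transcript and replays canned replies."""
    it = iter(replies)
    return lambda transcript: next(it)


tools = {"add": lambda a, b: str(a + b)}  # hypothetical toy tool
model = scripted_model([
    'THOUGHT: I should add the two numbers.\nACTION: add(a=2, b=40)',
    "THOUGHT: OBSERVE returned 42.\nANSWER: 42",
])
print(react_loop(model, tools, "What is 2 + 40?"))  # 42
```

Tool errors and unparseable actions are fed back as `OBSERVE: ERROR: ...` lines rather than raised, which gives the model a chance to recover on its next step.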

Choosing the Right Technique

Not every problem demands the same cognitive architecture. Use this reference to match task properties to optimal prompting strategies.

| Task Type | Complexity | Recommended Technique | Key Prompt Element |
|---|---|---|---|
| Math / Logic | Medium | Zero-shot CoT | “Think step by step” |
| Research + Synthesis | High | ReAct + Tool Use | THOUGHT / ACTION / OBSERVE |
| Creative Writing | Low–Med | Few-shot Exemplars | 3–5 style demonstrations |
| Code Generation | High | CoT + Self-Critique | Inline test assertions |
| Long-Horizon Planning | Very High | Tree of Thoughts | Branch scoring + pruning |
| Structured Extraction | Low | Constrained Generation | JSON schema in system prompt |
| Multi-Agent Delegation | Very High | Orchestrator + Subagents | Role + capability manifests |
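The "branch scoring + pruning" entry for Tree of Thoughts can be read as a beam search over partial solutions. The toy below is illustrative only: a "thought" is a sequence of arithmetic moves toward a target number, and `expand` and `score` are deterministic stand-ins for what would normally be a model proposing and rating thoughts. `tree_of_thoughts`, `beam_width`, and the 3-to-24 puzzle are assumptions made for the example.

```python
from typing import Callable, Optional

State = tuple[int, tuple[str, ...]]  # (current value, moves taken so far)


def tree_of_thoughts(root: State,
                     expand: Callable[[State], list[State]],
                     score: Callable[[State], float],
                     is_goal: Callable[[State], bool],
                     beam_width: int = 3,
                     max_depth: int = 6) -> Optional[State]:
    """Level-by-level search that keeps only the top `beam_width` thoughts per level."""
    frontier = [root]
    for _ in range(max_depth):
        # Branch: every surviving thought proposes its successors.
        candidates = [child for state in frontier for child in expand(state)]
        for candidate in candidates:
            if is_goal(candidate):
                return candidate
        # Score and prune: keep only the most promising branches.
        candidates.sort(key=score, reverse=True)
        frontier = candidates[:beam_width]
        if not frontier:
            break
    return None


# Toy problem (assumption): reach TARGET from 3 using the moves +1, *2, *3.
TARGET = 24


def expand(state: State) -> list[State]:
    value, moves = state
    return [(value + 1, moves + ("+1",)),
            (value * 2, moves + ("*2",)),
            (value * 3, moves + ("*3",))]


def score(state: State) -> float:
    value, _ = state
    if value > TARGET:
        return float("-inf")  # every move increases the value, so overshooting is a dead end
    return -(TARGET - value)  # closer to the target scores higher


result = tree_of_thoughts((3, ()), expand, score, lambda s: s[0] == TARGET)
print(result)  # (24, ('*2', '*2', '*2'))
```

Setting `beam_width=1` turns this into a purely greedy search, which fails on the toy within the default six levels: it commits to the locally best moves (3 → 9 → 18) and can then only creep upward by +1.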

Multi-Agent Orchestration

At the frontier of agentic prompting, specialized sub-agents are composed under a central orchestrator. Each sub-agent receives a narrow, well-defined system prompt, which keeps it focused on a single role and keeps other agents' instructions and intermediate outputs out of its context.

```python
# Orchestrator dispatches to specialist agents
ORCHESTRATOR_PROMPT = """
You coordinate a team of specialist agents.
Break the task into sub-problems and delegate:

  researcher → web search, fact retrieval
  analyst    → data interpretation, reasoning
  writer     → synthesis, structured output
  critic     → quality review, error detection

Emit delegation commands as JSON:
{
  "delegate_to": "researcher",
  "task": "Find Q3 2024 revenue figures for NVDA",
  "output_format": "{ value: number, source: string }"
}
"""

# Each specialist agent is a separate API call
# with its own focused system prompt + task payload
```
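The closing comment leaves the dispatch step implicit. One way to implement it is sketched below, under stated assumptions: `call_model(system_prompt, user_message)` is a hypothetical stand-in for whatever LLM client is in use, the four specialist prompts are invented placeholders, and `fake_call_model` simply echoes its inputs so the example runs offline. The orchestrator's JSON is parsed with `json.loads`, validated against the required keys and known roles, and sent to the chosen specialist as its own call.

```python
import json
from typing import Callable

# Hypothetical one-line system prompts; real ones would be longer and more specific.
SPECIALIST_PROMPTS = {
    "researcher": "You are a researcher. Use web search and fact retrieval; cite sources.",
    "analyst": "You are an analyst. Interpret data and show your reasoning.",
    "writer": "You are a writer. Synthesize inputs into the requested structured output.",
    "critic": "You are a critic. Review the draft and list concrete errors.",
}
REQUIRED_KEYS = {"delegate_to", "task", "output_format"}


def dispatch(command_json: str, call_model: Callable[[str, str], str]) -> str:
    """Validate one orchestrator delegation command and run the matching specialist."""
    command = json.loads(command_json)  # raises json.JSONDecodeError on malformed JSON
    if not isinstance(command, dict):
        raise ValueError("delegation command must be a JSON object")
    missing = REQUIRED_KEYS - set(command)
    if missing:
        raise ValueError(f"delegation command missing keys: {sorted(missing)}")
    role = command["delegate_to"]
    if role not in SPECIALIST_PROMPTS:
        raise ValueError(f"unknown specialist: {role!r}")
    # Separate call: the specialist sees only its own system prompt and this payload.
    user_message = f"Task: {command['task']}\nReturn output as: {command['output_format']}"
    return call_model(SPECIALIST_PROMPTS[role], user_message)


def fake_call_model(system_prompt: str, user_message: str) -> str:
    """Stand-in for a real LLM API call; reports which role received which task."""
    return f"[{system_prompt.split('.')[0]}] {user_message.splitlines()[0]}"


cmd = json.dumps({
    "delegate_to": "researcher",
    "task": "Find Q3 2024 revenue figures for NVDA",
    "output_format": "{ value: number, source: string }",
})
print(dispatch(cmd, fake_call_model))
# [You are a researcher] Task: Find Q3 2024 revenue figures for NVDA
```

Rejecting unknown roles and missing keys before any API call means a malformed delegation fails loudly at the orchestrator instead of producing a confused specialist response.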