Evaluating Model

Performance & Benchmarks

Benchmark evaluation is no longer a peripheral concern — it is the principal lens through which the research community interrogates model capability, reliability, and alignment. As frontier models grow more capable, the limitations of static leaderboards become more acute.

This digest surveys the essential metrics, their mathematical underpinnings, and the evolving landscape of multi-dimensional evaluation suites used to characterise language model behaviour.

“A benchmark not designed to fail is not a benchmark — it is flattery.”

What We Measure & Why It Matters

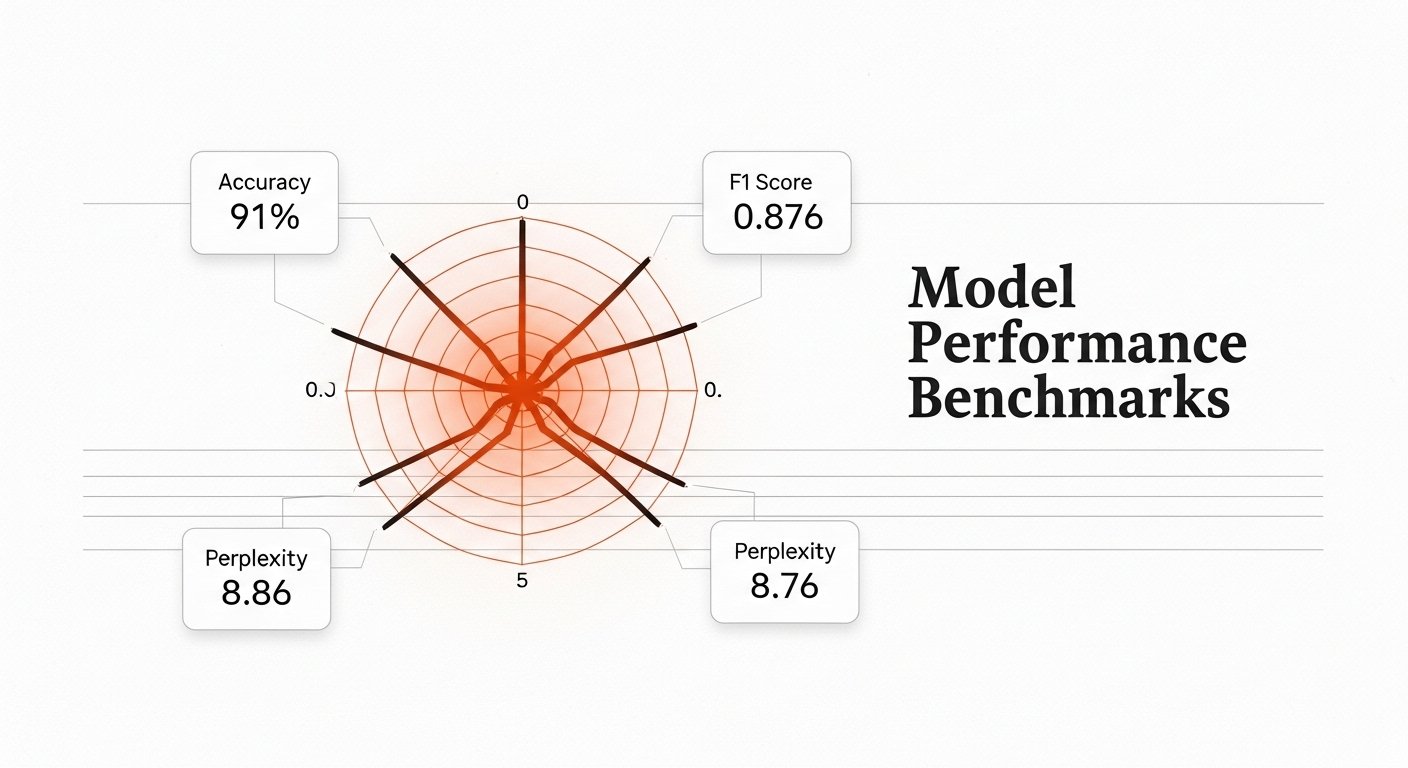

Each metric probes a distinct facet of model quality. Taken together, they paint a multidimensional portrait of a system’s strengths and failure modes.

Standard Evaluation Suites

| Benchmark | Domain | Metric | Baseline | SOTA | Human | Status |

|---|---|---|---|---|---|---|

| MMLU | Knowledge | Accuracy | 56.3% | 91.7% | 89.8% | Saturated |

| HumanEval | Coding | Pass@1 | 28.8% | 90.2% | 94.0% | Near parity |

| GSM8K | Math Reasoning | Accuracy | 35.1% | 97.0% | 98.3% | Saturated |

| MATH | Adv. Mathematics | Accuracy | 6.9% | 84.3% | 90.0% | Active |

| BIG-Bench Hard | Reasoning | Accuracy | 17.3% | 83.1% | ~85% | Active |

| TruthfulQA | Factuality | MC Accuracy | 58.1% | 71.4% | 94.0% | Open |

| HellaSwag | Common Sense | Accuracy | 70.6% | 95.3% | 95.6% | Saturated |

| GPQA Diamond | Expert Science | Accuracy | 30.0% | 74.4% | 69.7% | Open |

| SWE-bench | Software Eng. | Resolve % | 1.9% | 54.6% | — | Open |

| MT-Bench | Instruction | Score /10 | 6.0 | 9.2 | — | Active |

Multi-dimensional Capability Radar

The Saturation Problem

When a model reaches human-level performance on a benchmark, the benchmark ceases to discriminate. The field responds with ever-harder evaluations — GPQA Diamond, FrontierMath, SWE-bench Verified — while also shifting toward human-preference evaluations (Chatbot Arena, LMSYS) that resist straightforward gaming.