Hybrid Search Strategies for

RAG-Augmented Agents

Beyond simple vector similarity — combining dense embeddings, sparse BM25 retrieval, and learned reranking to build agents that find what they actually need.

Why Pure Vector Search Falls Short

Dense retrieval encodes semantics beautifully but struggles with rare tokens, exact identifiers, and domain jargon. A user querying CVE-2024-3094 or a product SKU doesn’t want the “semantically nearest” document — they want an exact match. Conversely, BM25 misses paraphrase and conceptual synonymy entirely.

Real-world RAG agents must serve both modes simultaneously. Hybrid search is the architectural answer.

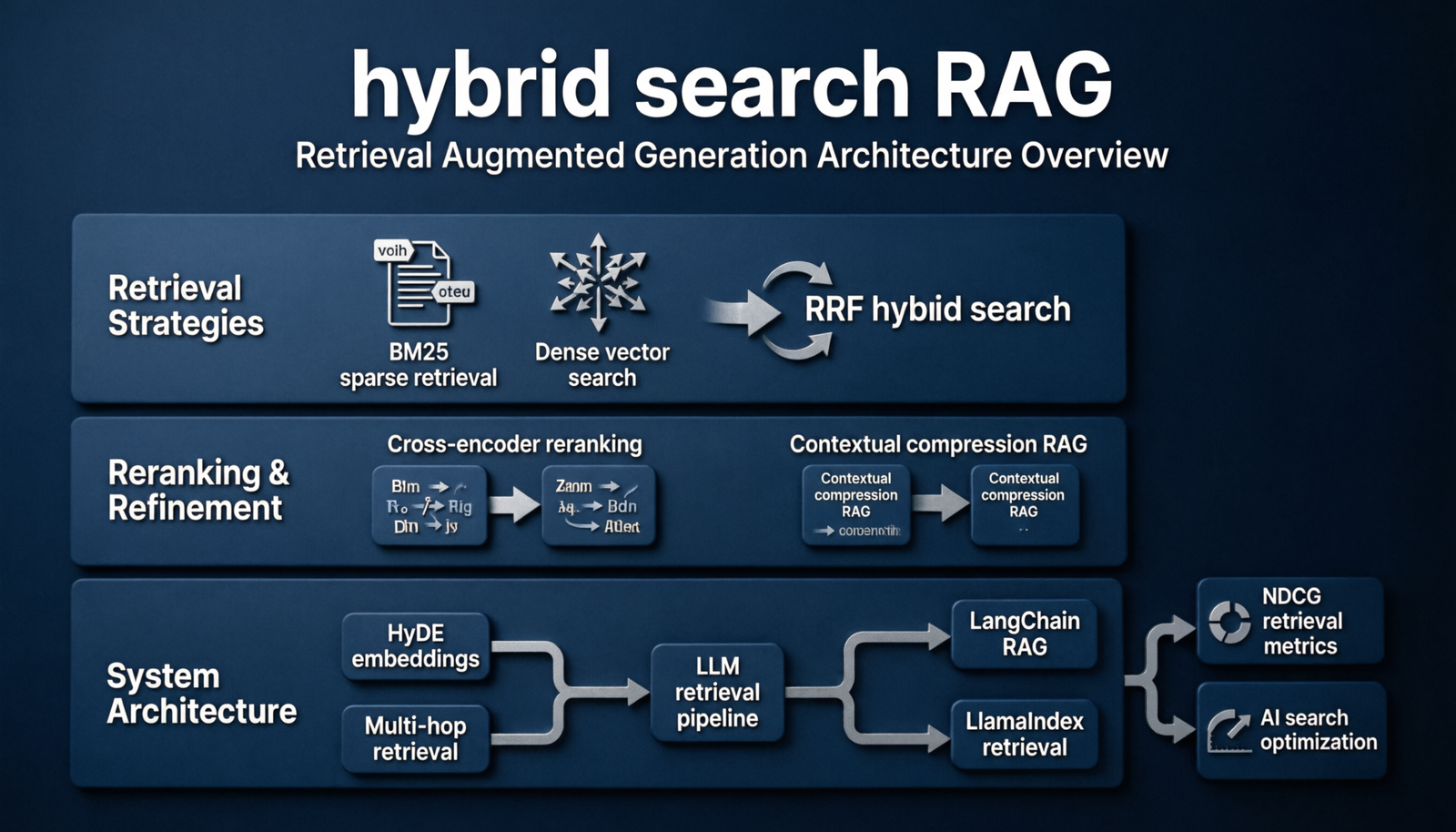

The Hybrid Retrieval Pipeline

At query time, two independent retrievers run in parallel. Their ranked lists are then fused before passing context to the generator.

The BM25 index and ANN index run concurrently and independently — never sequentially. Parallel fan-out keeps p99 latency below either retriever’s SLA.

Core Hybrid Search Strategies

Each strategy trades off precision, recall, latency, and implementation complexity differently. Choose by query distribution, not intuition.

Combines ranked lists without requiring score normalization. Score = Σ 1/(k + rank_i). Robust, parameter-light, and surprisingly hard to beat.

Low complexityS = α·S_dense + (1−α)·S_sparse after L2 or min-max normalization. α tunable per domain; α≈0.7 favors semantics.

TunableTrain a ranking model on retrieval features including scores, token overlap, and query type. Best NDCG but requires labeled data.

High performanceClassify queries as keyword-heavy vs. semantic, then dispatch to the appropriate retriever or blend ratio. Zero fusion latency for clear-cut queries.

EfficientAgent retrieves → LLM drafts sub-questions → re-retrieves until coverage threshold met. High recall; suited for multi-hop reasoning tasks.

Multi-hopApply structured pre-filters (date, source, entity) before hybrid retrieval to reduce candidate set and improve precision in large corpora.

ScalableScore Fusion in Practice

RRF is the workhorse default — zero score calibration required, naturally handles different retriever cardinalities, and degrades gracefully when one arm misses.

def rrf_fuse(dense_hits, sparse_hits, k=60):

scores = {}

# Process each retriever’s ranked list

for rank, doc in enumerate(dense_hits, 1):

scores[doc.id] = scores.get(doc.id, 0) + 1 / (k + rank)

for rank, doc in enumerate(sparse_hits, 1):

scores[doc.id] = scores.get(doc.id, 0) + 1 / (k + rank)

# Return merged list sorted by fused score ↓

return sorted(scores.items(), key=lambda x: x[1], reverse=True)

For production, pre-normalize BM25 scores with a sigmoid or quantile transform before weighted fusion to prevent BM25’s unbounded range from dominating.

Strategy Comparison

Cross-Encoder Reranking

Hybrid retrieval produces a candidate pool (typically top-50 to top-200). A cross-encoder reranker then scores each (query, document) pair jointly — capturing deep query-document interaction that bi-encoders miss.

Reranking top-50 with ms-marco-MiniLM-L6 adds ~40–80ms on modern GPU. For latency-critical paths, rerank top-20 only and accept a small precision trade-off.

For agentic RAG, consider adaptive reranking: run the LLM on top-3 retrieved docs; if confidence is low, trigger reranker on the full top-50. This keeps median latency near retrieval-only while maximising quality at the tail.

Advanced Agent-Specific Patterns

Generate a hypothetical answer to the query, embed it, and use that vector for dense retrieval. Dramatically improves cold-query recall — especially for knowledge-intensive reasoning tasks.

Ask the agent to reformulate a specific question into a broader “step-back” question, retrieve for both, then merge. Captures background knowledge the original query would miss.

After retrieval, pass each chunk through a compression LLM to extract only the sentences relevant to the query. Reduces context window usage by 40–60% without recall loss.

Index fine-grained child chunks for retrieval precision, but return the parent chunk for context richness. Avoids the precision-context trade-off in fixed-size chunking.

Building the Right Retrieval Stack

Start with RRF hybrid search as your baseline — it’s robust, requires no score normalization, and routinely outperforms either retriever alone by double-digit NDCG points. Add a lightweight cross-encoder reranker (top-20 or top-50) before passing context to the LLM.

Invest in query analysis early: categorising queries by type (entity lookup, semantic, multi-hop, temporal) lets you dynamically tune your retrieval blend and avoid the one-size-fits-all trap.

Finally, measure what matters for your agent: faithfulness and groundedness, not just retrieval recall. The best retrieval stack is the one that makes your generator produce fewer hallucinations on your task distribution.

If you can only do one thing: add BM25 to your vector store. That single change will improve RAG quality more reliably than any prompt engineering trick.