The Art of Inference

& Integration

A practitioner’s compendium on running AI models in production — from token generation mechanics to multi-service orchestration workflows.

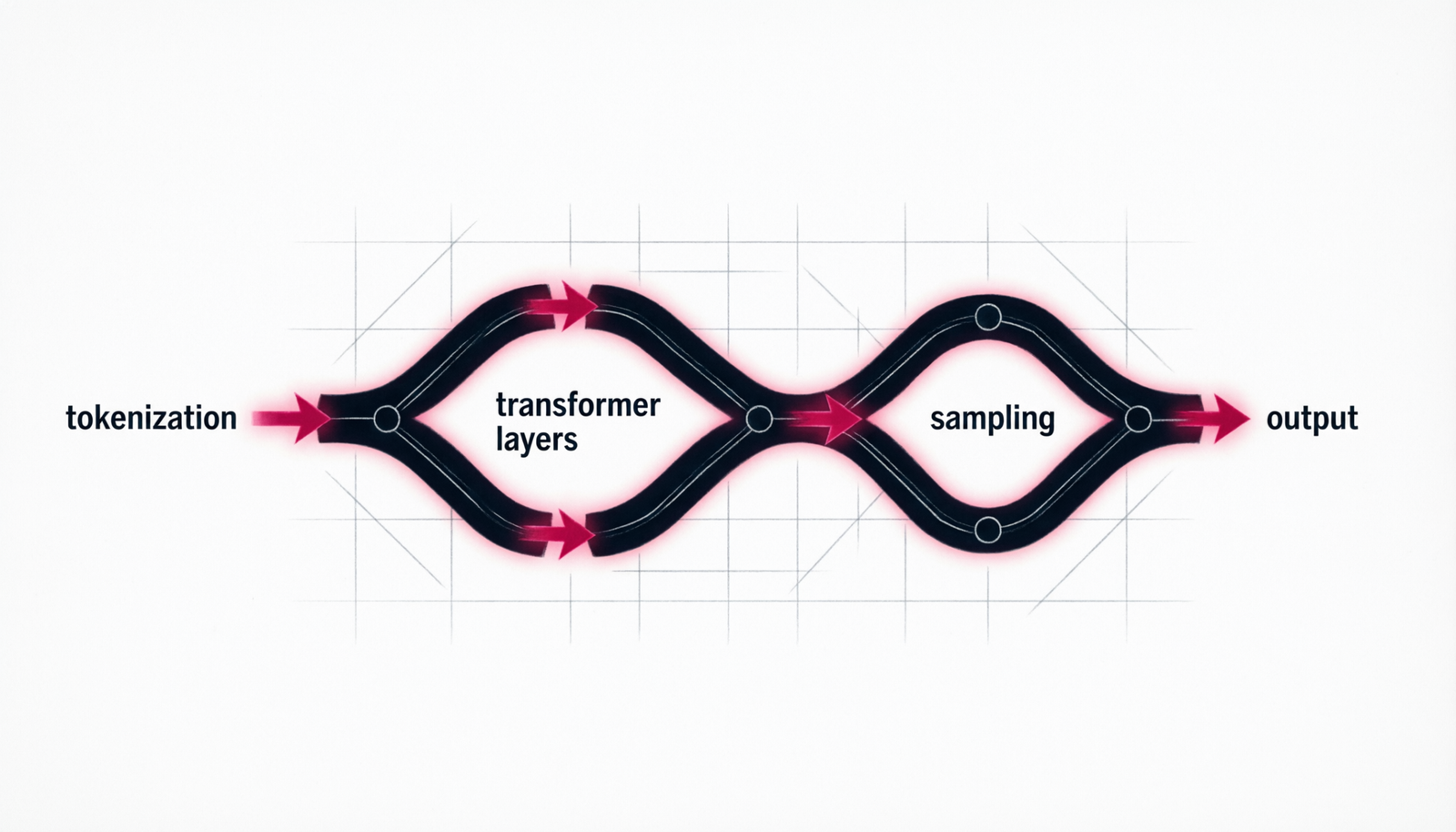

From Weights to Words — The Inference Pipeline

Inference is the act of running a trained neural network forward to produce outputs — predictions, text, images, embeddings. Unlike training (which updates billions of parameters), inference is read-only: the model weights are frozen and you’re simply computing a forward pass.

For large language models, inference means autoregressive token generation: the model predicts the next token, appends it to the context, and repeats until it hits a stop sequence or the maximum token limit.

“Inference is the moment where capability meets reality — where a trained model’s statistical knowledge becomes a concrete, useful output.”Core ML Systems Principle

Every response you receive from an LLM API is the result of hundreds to thousands of individual forward passes through transformer layers — each one attending over the entire context window.

Tuning the Knobs That Shape Output

Every inference call accepts parameters that steer sampling behaviour. Mastering these is the difference between a model that rambles and one that hits the mark.

| Parameter | Range | Effect |

|---|---|---|

| temperature | 0.0 – 2.0 | Randomness of sampling. 0 = deterministic greedy. 1 = model default. >1 = creative chaos. |

| top_p | 0.0 – 1.0 | Nucleus sampling. Only sample from the top-p probability mass. Tightens vocabulary without losing diversity. |

| max_tokens | 1 – context | Hard upper limit on output tokens. Controls cost and latency. |

| stop_sequences | string[] | Generation halts when any of these strings appear. Useful for structured output. |

| stream | bool | If true, returns tokens progressively as SSE events rather than waiting for full completion. |

| system | string | Sets persistent persona, task framing, and constraints for the entire conversation. |

// Minimal Anthropic API call const response = await fetch( "https://api.anthropic.com/v1/messages", { method: "POST", headers: { "x-api-key": process.env.ANTHROPIC_API_KEY, "anthropic-version": "2023-06-01", "content-type": "application/json", }, body: JSON.stringify({ model: "claude-sonnet-4-20250514", max_tokens: 1024, temperature: 0.7, system: "You are a senior engineer.", messages: [ { role: "user", content: "Explain KV caching." } ], }), } ); const data = await response.json(); console.log(data.content[0].text);

↑ Every call is stateless. The full conversation history must be sent with each request.

Six Patterns Every Builder Must Know

Integrating LLM inference into real products requires well-established architectural patterns. Each solves a distinct class of problem.

Simple Completion

Single-turn prompt → response. Stateless. Best for classification, extraction, summarisation, and one-shot transformations. Zero state management overhead.

Conversational Loop

Maintain a messages array and append each turn. The API has no memory — you own the history. Use sliding-window truncation at context limits.

RAG Pipeline

Retrieval-Augmented Generation: embed the query, fetch semantically similar chunks from a vector store, then inject them as context before calling the model.

Tool Use / Function Calling

Define tools as JSON schemas. The model decides when to call them; you execute them and return results. Enables grounding in live data and real-world actions.

Agentic Loop

Model reasons, selects a tool, receives the result, reasons again — repeatedly. The loop continues until the model emits a final answer. Power with caution.

Batch / Async Processing

Queue inference tasks and process them offline. Anthropic’s Batch API offers up to 50% cost reduction for workloads that tolerate 24-hour turnaround.

Make It Feel Instant

Streaming via Server-Sent Events (SSE) delivers tokens to the client as they are generated, dramatically reducing perceived latency. Time-to-first-token (TTFT) matters far more than total generation time for user experience.

- Set

stream: trueon your API request - Read chunks as

content_block_deltaevents - Accumulate

delta.textinto your display buffer - Listen for

message_stopto finalise - Propagate streaming to your frontend via WebSocket or SSE relay

- Show a blinking cursor to signal active generation

// Streaming with the Anthropic SDK import Anthropic from "@anthropic-ai/sdk"; const client = new Anthropic(); const stream = client.messages.stream({ model: "claude-sonnet-4-20250514", max_tokens: 1024, messages: [ { role: "user", content: "Write a haiku about latency." } ], }); for await (const chunk of stream) { if (chunk.type === "content_block_delta") { process.stdout.write(chunk.delta.text); } } const final = await stream.finalMessage(); // final.usage → { input_tokens, output_tokens }

Choosing the Right Architecture

Break complex tasks into sequential LLM calls, passing outputs as inputs. Each step is focused and verifiable. Ideal for document analysis → extraction → formatting pipelines.

Fire multiple independent inference calls concurrently with Promise.all(). Dramatically reduces wall-clock time for multi-faceted analysis. Aggregate with a final synthesis call.

A lightweight classifier (or small LLM) routes incoming requests to specialised models or prompts. Reduces cost by avoiding over-provisioned model usage for simple tasks.

Wrap inference between an input safety check and an output validation call. The inner model generates; the outer calls verify adherence to constraints, tone, or schema.

Embed incoming prompts and compare cosine similarity against a cache of past (prompt, response) pairs. Serve cache hits instantly — especially valuable for FAQ-style queries.

Model Context Protocol lets the model invoke external services (calendars, databases, APIs) through a standardised tool interface. Build agentic applications with real-world reach.

Before You Ship

Moving from prototype to production requires discipline across reliability, cost, observability, and safety. Here is the non-negotiable checklist.

- Exponential back-off + jitter on rate-limit errors (429)

- Token budget enforcement before each call

- Prompt version control (treat prompts as code)

- Input/output logging with PII redaction

- Latency and cost dashboards (TTFT, total tokens, USD/request)

- Automated evals on a golden dataset after every prompt change

- Fallback to a smaller model on timeout

- Output schema validation (JSON mode or regex guard)

- Context window headroom monitoring

- User-facing error messages that never leak prompt content

Latency Budget Breakdown

| Stage | Typical Cost | Optimise? |

|---|---|---|

| Network RTT | 20 – 80 ms | Edge deployment |

| Tokenisation | < 5 ms | Negligible |

| Prompt processing | 100 – 500 ms | Reduce input tokens |

| KV Cache hit | Saves 60–80% | Prefix-reuse |

| Token generation | 10–30 ms/tok | Limit max_tokens |

| Streaming delivery | Progressive | Always stream |

Inference is the product.

Integration is the craft.

Understanding the mechanics of token generation, streaming, and architectural patterns separates engineers who use AI from those who build with it.