Infrastructure Brief · GenAI 2025

Building Cost-Efficient Scaling Strategies for GenAI

GenAI infrastructure costs can spiral 10× faster than traditional ML workloads. This guide distills the engineering and financial strategies that keep frontier AI systems performant, scalable, and economically sustainable at any scale.

70%

Avg. VRAM Savings via Quantisation

4×

Throughput Gain with Batching

$0.5M

Avg. Monthly Spend at 1B Tokens/day
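One quick sanity check on the spend figure is to convert it into an implied blended price per million tokens. The sketch below assumes a 30-day month; the function name is illustrative only.

```python
def implied_price_per_million(monthly_spend_usd: float,
                              tokens_per_day: float,
                              days_per_month: int = 30) -> float:
    """Blended USD per million tokens implied by a monthly bill and a daily token volume."""
    monthly_tokens = tokens_per_day * days_per_month
    return monthly_spend_usd / (monthly_tokens / 1_000_000)


price = implied_price_per_million(500_000, 1_000_000_000)
print(f"${price:.2f} per million tokens")  # → $16.67 per million tokens
```

Comparing this number with the per-token price of each model tier shows roughly how much headroom cheaper tiers and caching could recover.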

Core Strategies

Scaling Without Burning Budget

Each strategy tier targets a different layer of the infrastructure stack. Apply them in sequence, starting with the highest-leverage, lowest-effort interventions.

Request Batching & Caching

Aggregate concurrent inference calls and serve identical or semantically similar requests from a shared cache layer. Semantic caching alone can eliminate 30–60% of redundant compute in prompt-heavy workloads.

60%

Cost Reduction
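Here is a minimal sketch of the semantic-cache idea, assuming prompts are compared by cosine similarity of their embeddings. `toy_embed` is a hypothetical bag-of-words stand-in for a real embedding model, and the 0.75 threshold is arbitrary; both would need tuning against real traffic.

```python
import math
import re
from collections import Counter
from typing import Callable, Dict, List, Optional, Tuple

Vector = Dict[str, float]


def toy_embed(text: str) -> Vector:
    """Stand-in for a real embedding model: bag-of-words counts over lowercase word tokens."""
    return {word: float(n) for word, n in Counter(re.findall(r"\w+", text.lower())).items()}


def cosine(a: Vector, b: Vector) -> float:
    dot = sum(v * b.get(k, 0.0) for k, v in a.items())
    norm_a = math.sqrt(sum(v * v for v in a.values()))
    norm_b = math.sqrt(sum(v * v for v in b.values()))
    if norm_a == 0.0 or norm_b == 0.0:
        return 0.0
    return dot / (norm_a * norm_b)


class SemanticCache:
    """Returns a cached response when a new prompt is close enough to a stored one."""

    def __init__(self, embed: Callable[[str], Vector], threshold: float = 0.9) -> None:
        self.embed = embed
        self.threshold = threshold
        self.entries: List[Tuple[Vector, str]] = []

    def lookup(self, prompt: str) -> Optional[str]:
        query = self.embed(prompt)
        best_score, best_response = 0.0, None
        # Linear scan for clarity; a production cache would use an ANN vector index.
        for vector, response in self.entries:
            score = cosine(query, vector)
            if score > best_score:
                best_score, best_response = score, response
        return best_response if best_score >= self.threshold else None

    def store(self, prompt: str, response: str) -> None:
        self.entries.append((self.embed(prompt), response))


cache = SemanticCache(toy_embed, threshold=0.75)
cache.store("What is the refund policy?", "Refunds are accepted within 30 days.")
print(cache.lookup("What is your refund policy?"))  # similar wording: cache hit
print(cache.lookup("How do I reset my password?"))  # unrelated: None, so call the model
```

The threshold is the main design lever. If it is too low, users get answers to questions they did not ask. If it is too high, the hit rate collapses toward exact-match caching.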

Model Quantisation & Distillation

Compress large models to INT4/INT8 precision or distil capabilities into smaller task-specific models. QLoRA fine-tuning enables deploying sub-7B models with near-frontier accuracy for narrow use cases.

70%

VRAM Savings
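Much of the VRAM saving comes straight from weight-storage arithmetic. The sketch below estimates weight memory per precision, assuming weight-only quantisation and a hypothetical 7B-parameter model.

```python
# Bytes needed to store one weight at each precision.
BYTES_PER_PARAM = {"fp16": 2.0, "int8": 1.0, "int4": 0.5}


def weight_memory_gb(num_params: float, precision: str) -> float:
    """Approximate weight memory in GB (1e9 bytes).

    Ignores quantisation metadata (scales, zero points), the KV cache and activations,
    so real VRAM use is higher than this estimate.
    """
    return num_params * BYTES_PER_PARAM[precision] / 1e9


def savings_vs(baseline: str, target: str, num_params: float) -> float:
    """Fractional weight-memory saving when moving from baseline to target precision."""
    return 1.0 - weight_memory_gb(num_params, target) / weight_memory_gb(num_params, baseline)


for precision in ("fp16", "int8", "int4"):
    print(precision, weight_memory_gb(7e9, precision), "GB")
print("int4 vs fp16 saving:", savings_vs("fp16", "int4", 7e9))
```

For 7B parameters this gives 14 GB at FP16 and 3.5 GB at INT4, a 75% cut in weight memory. Overall VRAM savings typically land lower, because weight-only quantisation does not shrink the KV cache or activations.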

Autoscaling & Spot Instance Arbitrage

Deploy on heterogeneous compute clusters combining on-demand and spot/preemptible GPUs with intelligent workload routing. Stateless inference workloads tolerate interruptions well, so spot capacity can safely make up 60–80% of the fleet.

65%

Compute Cost Savings
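The saving from a spot-heavy fleet depends on three things: the spot discount, the spot share, and the work lost to preemptions. Below is a minimal blended-cost sketch. The prices are hypothetical and the interruption overhead is an assumed parameter.

```python
def blended_hourly_cost(on_demand: float,
                        spot: float,
                        spot_fraction: float,
                        interruption_overhead: float = 0.0) -> float:
    """Average cost per useful GPU-hour for a fleet mixing on-demand and spot capacity.

    interruption_overhead is the assumed fraction of extra spot hours spent redoing
    work lost to preemptions (e.g. 0.05 means 5% more spot hours are needed).
    """
    if not 0.0 <= spot_fraction <= 1.0:
        raise ValueError("spot_fraction must be between 0 and 1")
    effective_spot = spot * (1.0 + interruption_overhead)
    return (1.0 - spot_fraction) * on_demand + spot_fraction * effective_spot


# Hypothetical prices: $4.00/h on-demand, $1.20/h spot (a 70% discount).
cost = blended_hourly_cost(4.00, 1.20, spot_fraction=0.8, interruption_overhead=0.05)
print(f"blended ${cost:.3f}/h, saving {1 - cost / 4.00:.0%} vs all on-demand")
```

With these assumed numbers the saving is roughly 55%. That is below the raw spot discount, because the on-demand share and the re-run overhead dilute it.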

Multi-Model Routing & Cascade Inference

Route queries by complexity using a lightweight classifier. Simple requests hit a small, cheap model; complex queries escalate to frontier models. Cascade architectures cut frontier model invocations by up to 80% in mixed workloads.

80%

Fewer Frontier Calls
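Here is a minimal cascade sketch under stated assumptions. `complexity_score` is a hypothetical keyword-and-length heuristic standing in for the lightweight classifier. The model tiers are fake handlers that return an answer plus a self-reported confidence, and both thresholds are arbitrary.

```python
from dataclasses import dataclass
from typing import Callable, Tuple

Handler = Callable[[str], Tuple[str, float]]  # returns (answer, confidence in [0, 1])


@dataclass
class Tier:
    name: str
    handler: Handler


HARD_MARKERS = ("prove", "analyse", "analyze", "step by step", "refactor")


def complexity_score(prompt: str) -> float:
    """Hypothetical heuristic standing in for a trained lightweight classifier."""
    score = min(len(prompt.split()) / 200, 1.0)  # longer prompts score higher
    if any(marker in prompt.lower() for marker in HARD_MARKERS):
        score += 0.5
    return min(score, 1.0)


def cascade(prompt: str, small: Tier, large: Tier,
            route_threshold: float = 0.5, confidence_floor: float = 0.7) -> Tuple[str, str]:
    """Route by complexity, then escalate to the large tier if the small tier is unsure."""
    if complexity_score(prompt) >= route_threshold:
        return large.name, large.handler(prompt)[0]
    answer, confidence = small.handler(prompt)
    if confidence < confidence_floor:
        return large.name, large.handler(prompt)[0]  # escalation path
    return small.name, answer


# Fake handlers for illustration: the small model is only confident on short prompts.
small = Tier("small", lambda p: ("small answer", 0.9 if len(p) < 40 else 0.4))
large = Tier("large", lambda p: ("large answer", 0.99))

print(cascade("What is 2+2?", small, large))
print(cascade("Prove that the scheduler terminates", small, large))
print(cascade("Summarise this customer email about a late delivery", small, large))
```

The escalation path pays for both tiers on the same request. The cascade only saves money when most traffic is resolved by the small tier on the first attempt.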

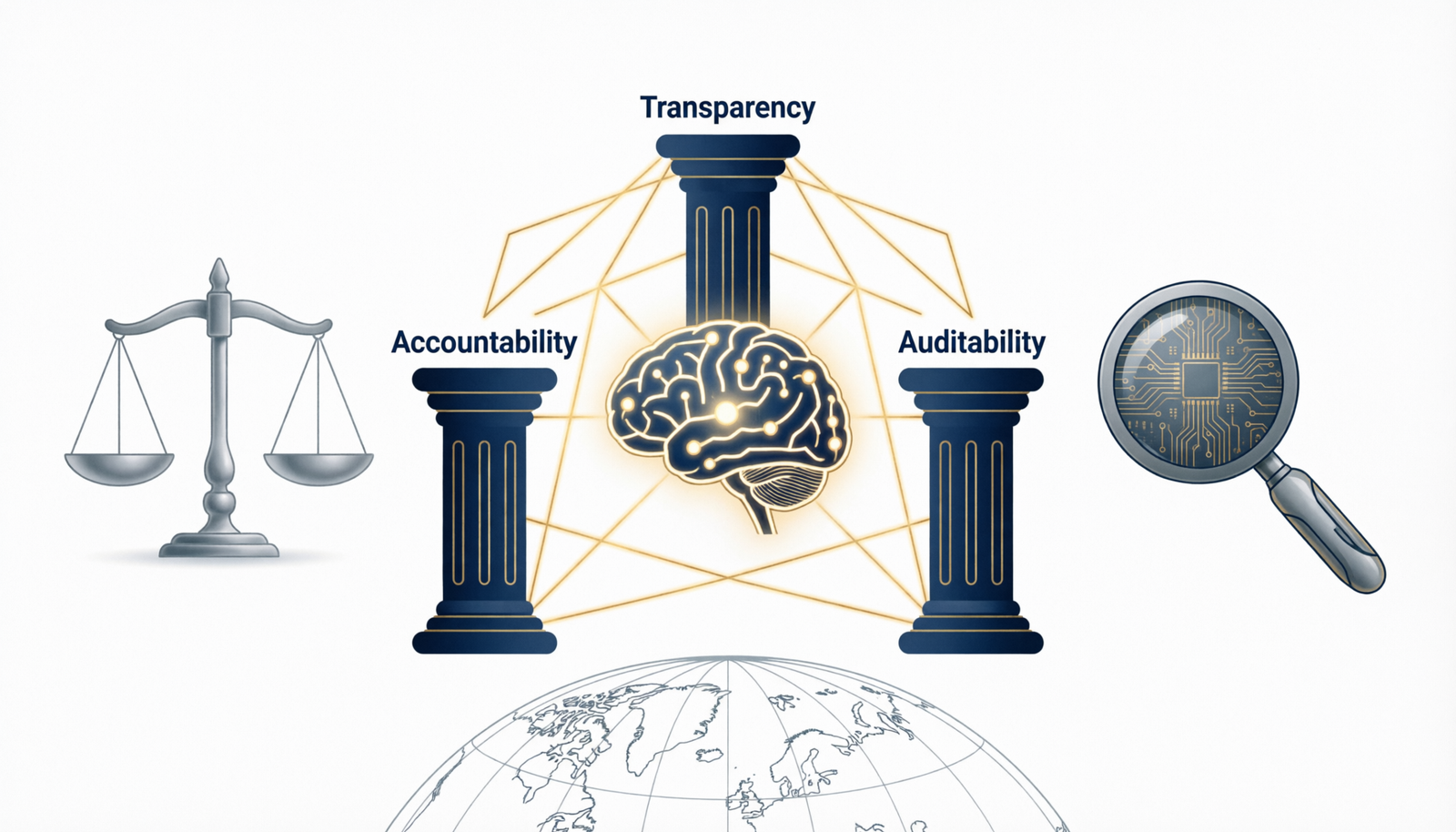

Infrastructure Pillars

Four Engineering Pillars

Sustainable GenAI scaling rests on four interconnected engineering disciplines. Weakness in any one pillar becomes the bottleneck that limits the others.

⚡

Compute Optimisation

Maximise GPU utilisation through tensor parallelism, pipeline parallelism, and continuous batching. Idle GPU cycles are the single largest source of wasted GenAI spend.

- FlashAttention-2 for memory efficiency

- PagedAttention for KV cache management

- Continuous batching via vLLM / TGI

- Tensor & pipeline parallelism tuning
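A toy simulation makes the continuous-batching argument concrete. It counts decode iterations only, assumes every iteration costs the same regardless of batch occupancy, and ignores prefill. It illustrates the scheduling idea, not how vLLM or TGI are implemented internally.

```python
from collections import deque
from typing import List


def static_batching_steps(output_lengths: List[int], batch_size: int) -> int:
    """Decode iterations when each batch runs until its longest sequence finishes."""
    steps = 0
    for start in range(0, len(output_lengths), batch_size):
        steps += max(output_lengths[start:start + batch_size])
    return steps


def continuous_batching_steps(output_lengths: List[int], batch_size: int) -> int:
    """Decode iterations when finished sequences free their slot at every iteration."""
    waiting = deque(output_lengths)
    active: List[int] = []  # remaining tokens per running sequence
    steps = 0
    while waiting or active:
        # Iteration-level scheduling: top up free slots before each decode step.
        while waiting and len(active) < batch_size:
            active.append(waiting.popleft())
        # One decode step emits one token per active sequence; those at 1 finish now.
        active = [remaining - 1 for remaining in active if remaining > 1]
        steps += 1
    return steps


lengths = [100, 10, 10, 10, 100, 10, 10, 10]  # tokens to generate per request
print(static_batching_steps(lengths, batch_size=4))      # → 200
print(continuous_batching_steps(lengths, batch_size=4))  # → 110
```

Under static batching, the short requests leave their slots idle while each 100-token sequence finishes. Continuous batching refills those slots immediately, which is where the idle GPU cycles are recovered.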

🗄️

Storage & Memory Architecture

Architect KV cache, model weight loading, and embedding storage for throughput. Memory bandwidth is the silent bottleneck in long-context workloads.

- Disaggregated KV cache with Mooncake

- HBM vs. GDDR tiering decisions

- Vector DB sharding for RAG pipelines

- Speculative decoding to cut latency
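The standard KV-cache size formula shows why memory dominates long-context serving. The sketch below assumes an illustrative 7B-class configuration (roughly the shape of Llama-2-7B) stored in FP16. The result scales linearly with context length and batch size.

```python
def kv_cache_bytes(num_layers: int, num_kv_heads: int, head_dim: int,
                   seq_len: int, batch_size: int, bytes_per_elem: int = 2) -> int:
    """KV cache size: one key and one value vector per layer, KV head and token."""
    return 2 * num_layers * num_kv_heads * head_dim * seq_len * batch_size * bytes_per_elem


GIB = 2 ** 30

# Illustrative 7B-class shape (32 layers, 32 KV heads, head_dim 128) in FP16.
full_mha = kv_cache_bytes(32, 32, 128, seq_len=4096, batch_size=1)
# Same shape with grouped-query attention sharing 8 KV heads.
gqa = kv_cache_bytes(32, 8, 128, seq_len=4096, batch_size=1)

print(full_mha / GIB, "GiB per 4K-token sequence (MHA)")         # → 2.0
print(gqa / GIB, "GiB per 4K-token sequence (GQA, 8 KV heads)")  # → 0.5
```

At a batch of 16 such sequences, the MHA cache alone reaches 32 GiB. This is why paged allocation, cache disaggregation and fewer KV heads matter as much as raw HBM capacity.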

🔀

Traffic & Load Management

Build routing layers that handle burst traffic, enforce SLA-tier differentiation, and gracefully degrade under capacity pressure without cascading failures.

- Priority queuing by SLA tier

- Adaptive rate limiting per tenant

- Backpressure signalling across services

- Multi-region failover & geo-routing
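Priority queuing with backpressure can be sketched as a heap keyed on SLA tier. The tier names and the fixed depth limit are assumptions. A real gateway would also age low-priority requests so they are not starved.

```python
import heapq
import itertools
from typing import List, Optional, Tuple

# Hypothetical tier names; a lower number is served first.
SLA_PRIORITY = {"enterprise": 0, "pro": 1, "free": 2}


class SlaQueue:
    """Priority queue by SLA tier with a depth limit that signals backpressure."""

    def __init__(self, max_depth: int) -> None:
        self._heap: List[Tuple[int, int, str]] = []
        self._arrival = itertools.count()  # FIFO tie-breaker within a tier
        self.max_depth = max_depth

    def submit(self, request_id: str, tier: str) -> bool:
        """Enqueue a request; return False so the caller can reject or retry later."""
        if len(self._heap) >= self.max_depth:
            return False
        heapq.heappush(self._heap, (SLA_PRIORITY[tier], next(self._arrival), request_id))
        return True

    def next_request(self) -> Optional[str]:
        """Pop the highest-priority, earliest-arriving request, or None if empty."""
        if not self._heap:
            return None
        return heapq.heappop(self._heap)[2]


queue = SlaQueue(max_depth=4)
for request_id, tier in [("r1", "free"), ("r2", "pro"), ("r3", "enterprise"), ("r4", "free")]:
    queue.submit(request_id, tier)
print(queue.submit("r5", "enterprise"))          # → False: queue full, apply backpressure
print([queue.next_request() for _ in range(4)])  # → ['r3', 'r2', 'r1', 'r4']
```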

📊

Observability & FinOps

Instrument every inference endpoint with token-level cost attribution. Without per-request cost visibility, optimisation is guesswork and overspend is invisible until the bill arrives.

- Token-level cost attribution per feature

- GPU utilisation heatmaps (DCGM)

- Automated rightsizing recommendations

- Budget anomaly alerting pipelines
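Token-level attribution comes down to tagging each request with its feature and model, then pricing its tokens. Below is a minimal sketch with hypothetical per-tier prices. Real prices come from the provider, or from amortised GPU cost for self-hosted models.

```python
from collections import defaultdict
from dataclasses import dataclass
from typing import Dict, Iterable

# Hypothetical prices in USD per million tokens: (input, output).
PRICES = {"small": (0.15, 0.60), "large": (3.00, 15.00)}


@dataclass
class UsageRecord:
    feature: str        # product feature that issued the request
    model: str          # model tier that served it
    input_tokens: int
    output_tokens: int


def request_cost(record: UsageRecord) -> float:
    input_price, output_price = PRICES[record.model]
    return (record.input_tokens * input_price + record.output_tokens * output_price) / 1_000_000


def cost_by_feature(records: Iterable[UsageRecord]) -> Dict[str, float]:
    totals: Dict[str, float] = defaultdict(float)
    for record in records:
        totals[record.feature] += request_cost(record)
    return dict(totals)


usage = [
    UsageRecord("search", "small", 1_000, 200),
    UsageRecord("chat", "large", 2_000, 500),
    UsageRecord("search", "large", 1_000, 100),
]
for feature, usd in sorted(cost_by_feature(usage).items()):
    print(f"{feature}: ${usd:.5f}")
```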

Reference Architecture for Cost-Optimal GenAI

A production-grade GenAI serving stack requires careful layering of caching, routing, inference, and monitoring. Each layer compounds the cost efficiency of the others.

LAYER 01

API Gateway & Auth

Rate limiting, tenant auth, SLA classification, and semantic cache lookup before any compute is allocated.
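Gateway rate limiting is commonly built on a per-tenant token bucket, checked before any cache lookup or GPU work. Below is a minimal sketch assuming a fixed refill rate per tenant. Charging `cost` as an estimated token count, instead of one unit per request, would turn it into a token-based limit.

```python
import time
from typing import Callable, Dict

Clock = Callable[[], float]


class TokenBucket:
    """Classic token bucket: refills at `rate` tokens per second up to `capacity`."""

    def __init__(self, rate: float, capacity: float, clock: Clock = time.monotonic) -> None:
        self.rate = rate
        self.capacity = capacity
        self.tokens = capacity
        self.clock = clock
        self.last = clock()

    def allow(self, cost: float = 1.0) -> bool:
        current = self.clock()
        self.tokens = min(self.capacity, self.tokens + (current - self.last) * self.rate)
        self.last = current
        if self.tokens >= cost:
            self.tokens -= cost
            return True
        return False


class TenantRateLimiter:
    """One bucket per tenant, so a noisy tenant cannot exhaust shared capacity."""

    def __init__(self, rate: float, capacity: float, clock: Clock = time.monotonic) -> None:
        self.rate, self.capacity, self.clock = rate, capacity, clock
        self._buckets: Dict[str, TokenBucket] = {}

    def allow(self, tenant: str, cost: float = 1.0) -> bool:
        if tenant not in self._buckets:
            self._buckets[tenant] = TokenBucket(self.rate, self.capacity, self.clock)
        return self._buckets[tenant].allow(cost)


# A fake clock makes the behaviour deterministic for illustration.
fake_time = [0.0]
limiter = TenantRateLimiter(rate=1.0, capacity=2.0, clock=lambda: fake_time[0])
print([limiter.allow("tenant-a") for _ in range(3)])  # → [True, True, False]
print(limiter.allow("tenant-b"))                      # → True: separate bucket
fake_time[0] = 1.0
print(limiter.allow("tenant-a"))                      # → True: one token refilled
```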

LAYER 02

Intelligent Router

Complexity classifier dispatches requests to nano, small, or large model tiers. Requests matching known prompt templates are routed to fine-tuned adapters.

LAYER 03

Inference Fleet

Heterogeneous GPU cluster running vLLM / TGI with autoscaling. Spot instances handle batch workloads; on-demand capacity covers latency-sensitive SLAs.

LAYER 04

FinOps Telemetry

Real-time cost attribution, token counters, and utilisation metrics stream to dashboards with automated budget-breach alerts.

Cost Analysis

Where the Money Actually Goes

Understanding cost distribution is a prerequisite to optimising it. In a typical production GenAI deployment at scale, compute dominates, but storage and egress are fast-growing surprises.

- GPU Compute (Inference): ~58%

- Network Egress & CDN: ~13%

- Training & Fine-tuning: ~11%

Operational Playbook

Day-Two Operations for Scaling Teams

Deploying at scale is one milestone. Sustaining cost efficiency as traffic, models, and team size grow is the ongoing engineering discipline that separates GenAI winners from teams that burn out.

OPS-01

Daily

Token Budget Review

Review per-feature and per-tenant token consumption daily. Deviations of more than 15% from baseline trigger automated investigation. Establish a token budget for each product feature and enforce it at the gateway layer.
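The daily review can be automated along these lines. The sketch interprets the 15% rule as a relative deviation from each feature's trailing mean, which is an assumption. The function names and data shapes are hypothetical.

```python
from statistics import mean
from typing import Dict, List


def flag_anomalies(history: Dict[str, List[int]],
                   today: Dict[str, int],
                   threshold: float = 0.15) -> Dict[str, float]:
    """Features whose usage today deviates from their trailing mean by more than `threshold`."""
    flagged: Dict[str, float] = {}
    for feature, tokens in today.items():
        past = history.get(feature)
        if not past:
            continue  # no baseline yet: new features need a warm-up period
        baseline = mean(past)
        if baseline == 0:
            continue
        deviation = (tokens - baseline) / baseline
        if abs(deviation) > threshold:  # drops are flagged too: they can signal breakage
            flagged[feature] = deviation
    return flagged


def admit(spent_today: int, estimated_tokens: int, daily_budget: int) -> bool:
    """Gateway check: admit a request only if it fits the feature's remaining budget."""
    return spent_today + estimated_tokens <= daily_budget


history = {"search": [1_000_000, 1_050_000, 950_000], "chat": [4_000_000, 4_100_000]}
today = {"search": 1_300_000, "chat": 4_080_000, "summarise": 200_000}
print(flag_anomalies(history, today))  # only "search" exceeds 15%
print(admit(spent_today=990_000, estimated_tokens=20_000, daily_budget=1_000_000))  # → False
```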

OPS-02

Weekly

GPU Utilisation Audit

Pull DCGM utilisation heatmaps weekly. Any instance class averaging below 60% utilisation is a rightsizing candidate. Correlate with P99 latency to confirm that rightsizing isn't degrading SLAs.
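The weekly audit rule can be expressed as a small report over utilisation samples. The sketch assumes those samples have already been exported from DCGM into plain lists of fractions, so no DCGM API is called. The instance-class names, SLO values and report shape are hypothetical.

```python
from statistics import mean
from typing import Dict, List


def rightsizing_report(util_samples: Dict[str, List[float]],
                       p99_ms: Dict[str, float],
                       slo_ms: Dict[str, float],
                       util_floor: float = 0.60) -> Dict[str, dict]:
    """Flag instance classes whose mean GPU utilisation is below `util_floor`.

    util_samples holds utilisation fractions (0-1) already exported from monitoring;
    collecting them from DCGM is out of scope here.
    """
    report: Dict[str, dict] = {}
    for instance_class, samples in util_samples.items():
        avg = mean(samples)
        if avg < util_floor:
            report[instance_class] = {
                "avg_util": round(avg, 3),
                # Latency headroom indicates whether consolidating load is likely safe.
                "p99_within_slo": p99_ms[instance_class] <= slo_ms[instance_class],
            }
    return report


util = {"a100-batch": [0.35, 0.50, 0.42], "h100-chat": [0.82, 0.91, 0.77]}
p99 = {"a100-batch": 900.0, "h100-chat": 450.0}
slo = {"a100-batch": 2_000.0, "h100-chat": 500.0}
print(rightsizing_report(util, p99, slo))
```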

OPS-03

Monthly

Model Cascade Rebalancing

Evaluate routing classifier accuracy monthly. As smaller fine-tuned models improve, rebalance the complexity thresholds to route more volume to cheaper tiers without quality regression.
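Monthly rebalancing amounts to re-picking the routing threshold against a fresh labelled evaluation set. Below is a minimal sketch. It assumes each evaluation prompt carries a complexity score and a pass/fail judgement of the small tier's answer. The 95% quality bar is an arbitrary example.

```python
from typing import List, Optional, Tuple


def pick_threshold(validation: List[Tuple[float, bool]],
                   candidates: List[float],
                   min_quality: float = 0.95) -> Optional[float]:
    """Choose the highest routing threshold that keeps small-tier quality acceptable.

    validation: (complexity_score, small_tier_answer_was_acceptable) pairs from a
    labelled evaluation set. Prompts scoring below the threshold go to the small tier.
    """
    best: Optional[float] = None
    for threshold in sorted(candidates):
        routed = [ok for score, ok in validation if score < threshold]
        if not routed:
            continue
        quality = sum(routed) / len(routed)
        if quality >= min_quality:
            best = threshold  # higher threshold = more volume on the cheap tier
    return best


eval_set = [(0.1, True), (0.2, True), (0.3, True), (0.4, True),
            (0.6, False), (0.7, True), (0.8, False)]
threshold = pick_threshold(eval_set, candidates=[0.25, 0.5, 0.65, 0.9])
share = sum(score < threshold for score, _ in eval_set) / len(eval_set)
print(threshold, f"{share:.0%} of eval traffic to the small tier")
```

Quality need not fall monotonically as the threshold rises. For that reason the sketch checks every candidate rather than stopping at the first failure.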

OPS-04

Quarterly

Reserved Capacity Optimisation

Negotiate reserved or committed-use GPU contracts quarterly based on rolling 90-day baseline consumption. Match contract duration to model lifecycle — avoid locking capacity to models you’ll replace within 12 months.
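One way to turn a rolling 90-day baseline into a commitment is to reserve near the low end of recent daily usage. The percentile policy below is an assumption, not a standard. Contract term length, which the text ties to model lifecycle, is a separate decision that this sketch does not model.

```python
import math
from typing import List


def commit_level(daily_gpu_hours: List[float],
                 window_days: int = 90,
                 percentile: float = 0.10) -> float:
    """GPU-hours per day to reserve, from a low percentile of the rolling window.

    Committing near the floor of recent usage keeps reserved capacity almost always
    busy; demand above it stays on on-demand or spot capacity.
    """
    window = sorted(daily_gpu_hours[-window_days:])
    if not window:
        raise ValueError("need at least one day of usage history")
    rank = max(1, math.ceil(percentile * len(window)))  # nearest-rank percentile
    return window[rank - 1]


daily_hours = [float(h) for h in range(1, 101)]  # 100 days of steadily growing usage
print(commit_level(daily_hours))                  # 10th percentile of the last 90 days
print(commit_level(daily_hours, percentile=0.5))  # median, a more aggressive commitment
```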

OPS-05

Ongoing

Prompt Engineering for Cost

Treat token count as an engineering constraint. Measure average prompt and completion token length per endpoint. Structured output formats, few-shot compression, and system prompt caching (e.g. Anthropic prompt caching) can cut token spend by 30–50%.
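Measuring per-endpoint token length needs nothing more than request logs. The sketch below assumes each log entry records the endpoint plus the prompt and completion token counts reported by the serving layer. Prompt and completion tokens are kept separate because they are usually priced differently.

```python
from collections import defaultdict
from typing import Dict, Iterable, Tuple


def token_profile(logs: Iterable[Tuple[str, int, int]]) -> Dict[str, Tuple[float, float]]:
    """Average (prompt, completion) tokens per endpoint from (endpoint, prompt, completion) logs."""
    prompt_totals: Dict[str, int] = defaultdict(int)
    completion_totals: Dict[str, int] = defaultdict(int)
    counts: Dict[str, int] = defaultdict(int)
    for endpoint, prompt_tokens, completion_tokens in logs:
        prompt_totals[endpoint] += prompt_tokens
        completion_totals[endpoint] += completion_tokens
        counts[endpoint] += 1
    return {
        endpoint: (prompt_totals[endpoint] / n, completion_totals[endpoint] / n)
        for endpoint, n in counts.items()
    }


request_log = [("/summarise", 1_200, 300), ("/summarise", 800, 200), ("/classify", 150, 5)]
for endpoint, (avg_prompt, avg_completion) in token_profile(request_log).items():
    print(f"{endpoint}: {avg_prompt:.0f} prompt + {avg_completion:.0f} completion tokens")
```

Endpoints with long prompts relative to their completions are the natural first targets for prompt caching and few-shot compression.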