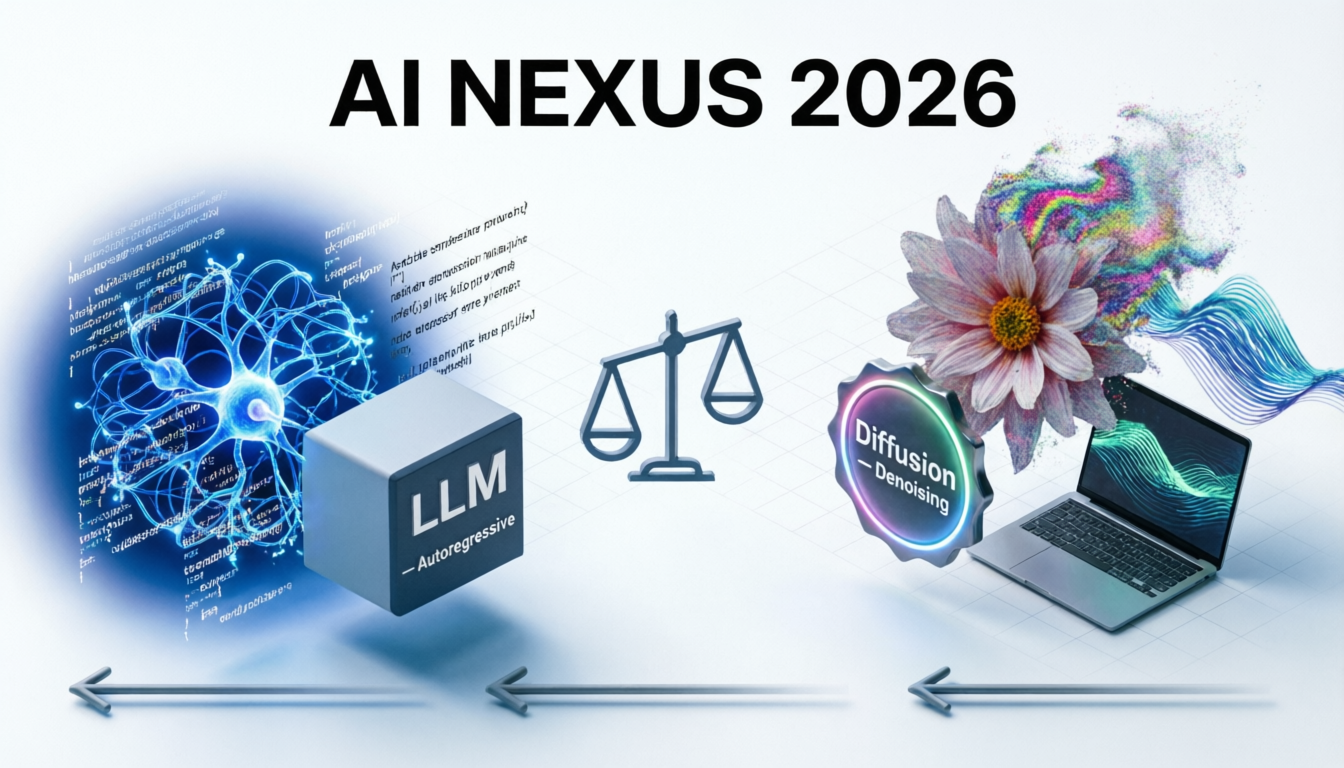

LLMs: Autoregressive Transformers · Next-token prediction · In-context learning

Diffusion Models: Denoising Probabilistic Models · Latent diffusion · High-fidelity synthesis

LLMs stack decoder blocks of multi-head self-attention (with causal masking), feedforward networks, layer normalization, and residual connections. They are trained on a trillion or more tokens, and context windows scale up to 2M tokens via RoPE and sliding-window attention. This design enables in-context learning and emergent reasoning.
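The causal masking mentioned above can be sketched in a few lines. This is a minimal single-head illustration in plain Python, not a production implementation: the function names (`softmax`, `causal_self_attention`) are made up for this example, and the learned projections, multiple heads, RoPE, and sliding windows are all left out.

```python
import math

def softmax(scores):
    """Numerically stable softmax over a list of floats."""
    m = max(scores)
    exps = [math.exp(s - m) for s in scores]
    total = sum(exps)
    return [e / total for e in exps]

def causal_self_attention(q, k, v):
    """Single-head scaled dot-product attention with a causal mask.

    q, k, v are lists of T vectors (each a list of floats).
    Position t may only attend to positions 0..t.
    Returns (outputs, attention_weights).
    """
    d = len(q[0])
    outputs, weights = [], []
    for t in range(len(q)):
        # Causal mask: keys at positions > t are never scored.
        scores = [sum(a * b for a, b in zip(q[t], k[s])) / math.sqrt(d)
                  for s in range(t + 1)]
        w = softmax(scores)
        # Weighted sum of the visible value vectors.
        outputs.append([sum(w[s] * v[s][j] for s in range(t + 1))
                        for j in range(len(v[0]))])
        # Pad with zeros so every row has length T (masked positions get weight 0).
        weights.append(w + [0.0] * (len(q) - t - 1))
    return outputs, weights
```

Because the first token can only see itself, its attention weight on position 0 is exactly 1 and its output equals its own value vector. That is a quick sanity check that the mask is doing its job.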

Diffusion models define a forward process that gradually adds Gaussian noise and a reverse process in which a neural network (U-Net or DiT) learns to denoise. Latent diffusion reduces compute by running this process in a compressed latent space. Modern flow matching and consistency models speed up sampling.
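As a rough sketch of the forward process described above, the snippet below uses the standard closed form x_t = sqrt(ᾱ_t)·x_0 + sqrt(1 − ᾱ_t)·ε, which jumps straight to any noise level without simulating every step. The linear schedule and its endpoints (1e-4 to 0.02 over 1000 steps) are assumptions borrowed from common DDPM setups, not values stated in this guide, and the function names are illustrative.

```python
import math
import random

def linear_beta_schedule(num_steps, beta_start=1e-4, beta_end=0.02):
    """Assumed linear noise schedule; real systems often use other schedules."""
    step = (beta_end - beta_start) / (num_steps - 1)
    return [beta_start + i * step for i in range(num_steps)]

def cumulative_alphas(betas):
    """alpha_bar_t = product over s <= t of (1 - beta_s)."""
    alpha_bars, running = [], 1.0
    for beta in betas:
        running *= 1.0 - beta
        alpha_bars.append(running)
    return alpha_bars

def q_sample(x0, t, alpha_bars, rng):
    """Draw x_t from q(x_t | x_0) in one shot; also return the noise used."""
    signal = math.sqrt(alpha_bars[t])
    noise_scale = math.sqrt(1.0 - alpha_bars[t])
    eps = [rng.gauss(0.0, 1.0) for _ in x0]
    x_t = [signal * x + noise_scale * e for x, e in zip(x0, eps)]
    return x_t, eps

# Usage: noise a toy 3-dimensional "image" to the midpoint of the schedule.
alpha_bars = cumulative_alphas(linear_beta_schedule(1000))
x_t, eps = q_sample([0.5, -0.2, 0.9], t=500, alpha_bars=alpha_bars,
                    rng=random.Random(0))
```

The returned `eps` is exactly what an ε-prediction network would be trained to recover from `x_t`.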

LLMs: cross-entropy loss on the next token (causal LM), followed by reinforcement learning in post-training. Diffusion: MSE loss on predicted noise (ε-prediction) or on the v-prediction target. Both rely on massive datasets and scaling laws.
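The two training objectives can be written down compactly. The sketch below is illustrative only: it works on plain Python lists, the function names are invented for this example, and the v-prediction target uses the common definition v = sqrt(ᾱ_t)·ε − sqrt(1 − ᾱ_t)·x_0, which is an assumption about what "v-prediction" refers to here.

```python
import math

def next_token_cross_entropy(logits, targets):
    """Mean cross-entropy for a causal LM.

    logits[t] is the score vector over the vocabulary produced at position t;
    targets[t] is the index of the token that actually comes next.
    """
    total = 0.0
    for row, target in zip(logits, targets):
        m = max(row)
        # log-sum-exp, shifted by the max for numerical stability
        log_z = m + math.log(sum(math.exp(x - m) for x in row))
        total += log_z - row[target]
    return total / len(targets)

def noise_prediction_mse(eps_pred, eps_true):
    """Epsilon-prediction loss: mean squared error between predicted and true noise."""
    return sum((p - e) ** 2 for p, e in zip(eps_pred, eps_true)) / len(eps_true)

def v_prediction_target(x0, eps, alpha_bar_t):
    """Assumed v-target: sqrt(alpha_bar) * eps - sqrt(1 - alpha_bar) * x0."""
    a = math.sqrt(alpha_bar_t)
    s = math.sqrt(1.0 - alpha_bar_t)
    return [a * e - s * x for x, e in zip(x0, eps)]
```

A useful reference point: with uniform logits over a vocabulary of size V, the cross-entropy equals log V, the loss of a model that knows nothing.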

LLMs: autoregressive decoding, accelerated by KV caching and speculative decoding. Diffusion: iterative denoising (DDIM, DPM-Solver) in 20-50 steps; recent distilled models need only 1-4 steps.
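To make the two inference loops concrete, here is a small sketch of each. `KVCache` and `attend` are hypothetical names showing why caching helps: each decode step appends one key/value row instead of recomputing attention inputs for the whole prefix. `ddim_step` is the deterministic DDIM update (η = 0), written under the assumption that the model outputs a noise estimate. Speculative decoding and DPM-Solver are not covered.

```python
import math

def attend(query, keys, values):
    """Scaled dot-product attention of one query over all given keys/values."""
    d = len(query)
    scores = [sum(a * b for a, b in zip(query, key)) / math.sqrt(d)
              for key in keys]
    m = max(scores)
    exps = [math.exp(s - m) for s in scores]
    z = sum(exps)
    return [sum(e / z * val[j] for e, val in zip(exps, values))
            for j in range(len(values[0]))]

class KVCache:
    """Keeps keys/values of past tokens so each new token adds only one row."""

    def __init__(self):
        self.keys, self.values = [], []

    def step(self, q, k, v):
        # Append the new token's key/value, then attend over the whole cache.
        self.keys.append(k)
        self.values.append(v)
        return attend(q, self.keys, self.values)

def ddim_step(x_t, eps_pred, alpha_bar_t, alpha_bar_prev):
    """One deterministic DDIM update from noise level t to a less noisy level."""
    # Estimate the clean sample implied by the current noise prediction.
    x0_pred = [(x - math.sqrt(1.0 - alpha_bar_t) * e) / math.sqrt(alpha_bar_t)
               for x, e in zip(x_t, eps_pred)]
    # Re-noise that estimate to the earlier (less noisy) level.
    return [math.sqrt(alpha_bar_prev) * x0 + math.sqrt(1.0 - alpha_bar_prev) * e
            for x0, e in zip(x0_pred, eps_pred)]
```

Because `ddim_step` can jump between arbitrary noise levels, a sampler can visit only a short subsequence of the training schedule, which is what makes 20-50 step sampling possible. Few-step distilled models go further by training a network to make much larger jumps.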

| Attribute | LLMs | Diffusion Models |
|---|---|---|
| Primary Modality | Text, code, structured reasoning | Images, video, 3D, audio |
| Generation Quality | Coherent, logical, creative text | Photorealistic, fine-grained detail |
| Controllability | Prompt engineering, logit bias, CFG sampling | Guidance scale, ControlNet, IP-Adapter, inpainting |
| Training Stability | Robust with careful lr scheduling | Moderate – requires noise schedule tuning |
| Inference Speed | Fast (KV caching, ~50-100 tok/s) | Slower (multi-step), but distilled models near real-time |
| Parameter Scale | 1B – 1.8T (sparse MoE) | 0.5B – 12B (DiT-XL/3B to 8B) |
| Recent Breakthroughs | 1M+ context, reasoning models (o1), tool use | Consistency models, 4-step inference, video diffusion |
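
The "guidance scale" entry in the Controllability row usually refers to classifier-free guidance (CFG). A minimal sketch, assuming the denoiser is run twice per step (once with the prompt, once without) and that the function name is illustrative:

```python
def classifier_free_guidance(eps_uncond, eps_cond, guidance_scale):
    """Blend unconditional and conditional noise predictions.

    guidance_scale = 0 ignores the prompt, 1 uses the conditional
    prediction as-is, and values above 1 push further toward the prompt.
    """
    return [u + guidance_scale * (c - u) for u, c in zip(eps_uncond, eps_cond)]
```

Higher scales tend to follow the prompt more closely at some cost in sample diversity, which is why the scale is exposed as a user-facing control.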

🌟 LLM + Diffusion: The Best of Both Worlds 🌟

Modern multimodal systems combine LLMs for planning with diffusion for high-quality rendering: the LLM writes a detailed prompt, and the diffusion model generates the visuals. Unified architectures such as Transfusion and MAR, along with agentic workflows, move toward a single generative system. Together they open the way to real-time creative AI, scientific simulation, and next-gen human-AI collaboration.

LLM benchmarks: MMLU: >92% · HumanEval: 89% · MATH: 78% · Arena Elo: leading models near 1300 · Long-context retrieval: >99% at 1M tokens.

Diffusion benchmarks: FID on COCO30K: <2.1 · CLIP score: >0.33 · GenEval: 82% · Human preference alignment (PickScore): improved by 45%.

LLMs: FlashAttention-3, FP8 training; Diffusion: latent distillation reduces steps from 50 → 4, 6x faster inference.

LLMs for protein sequence design, drug discovery; Diffusion for molecular conformation, material generation & 3D protein folding.

💡 Quick Decision Guide

✅ Choose LLMs when you need: reasoning, code writing, analysis, conversation, structured extraction, or agentic workflows.

✅ Choose Diffusion Models for: high-quality image synthesis, artistic rendering, video generation, 3D asset creation, and realistic perceptual detail.

✅ Combine both for powerful multimodal AI (text-to-image, video generation with LLM scene description, interactive creative tools).