AI Workflow Orchestration

Orchestrating Multi-Step Workflows

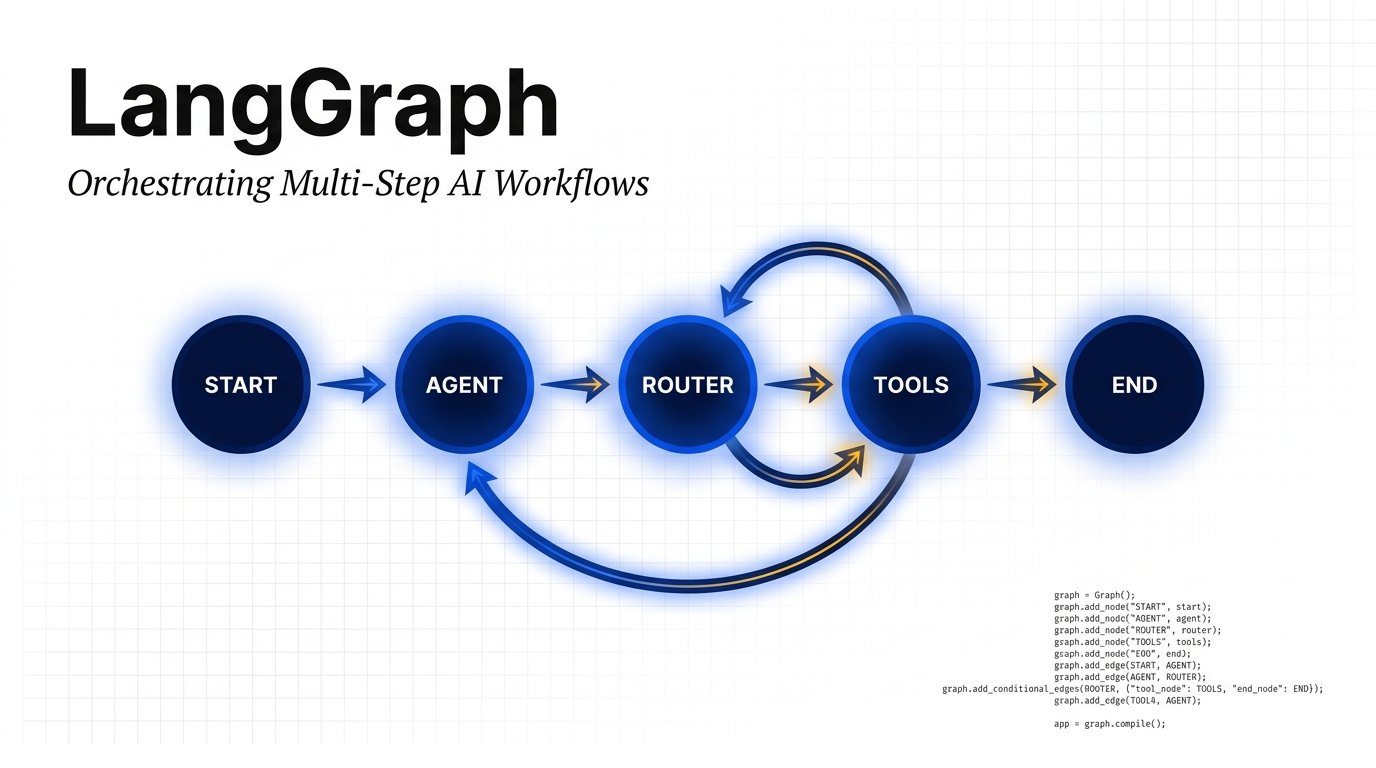

LangGraph is a framework for building stateful, multi-actor applications with LLMs. Model workflows as directed graphs — with cycles, conditions, and persistent state across steps.

Overview

What is LangGraph?

LangGraph extends LangChain to enable the creation of complex, cyclical computation graphs. Unlike simple linear pipelines, LangGraph lets you define workflows where LLM agents can loop, branch, and collaborate, mirroring real-world decision processes.

“Graphs are the natural model for any process that involves decisions, retries, and shared memory between agents.”

Built on the concept of a StateGraph, it maintains a persistent state object passed between every node. Conditional edges route execution dynamically, enabling complex agentic behaviors like tool use, self-reflection, and multi-agent delegation.

- Stateful Execution: A typed state object flows through every node, accumulating context across steps

- Conditional Routing: Edges evaluate state at runtime to dynamically choose the next node

- Human-in-the-Loop: Pause execution at any node and resume after human review or intervention

- Multi-Agent: Spawn sub-agents as nodes; orchestrate delegation between specialists

- Streaming Support: Stream tokens and intermediate node outputs in real time to your UI

- LangGraph Platform: Deploy agents as APIs with built-in persistence, queuing, and monitoring

Architecture

Core Building Blocks

- State (TypedDict / Pydantic): A TypedDict that carries all data across the graph. Each node reads from and writes to this shared object. Reducers control how state is merged when multiple nodes write to the same key.

- Nodes (Callable[[State], State]): Python functions (or Runnables) that perform a unit of work. A node receives the current state, executes logic (LLM call, tool use, branching), and returns a state update.

- Edges (add_conditional_edges()): Connections between nodes. Normal edges always follow a fixed path. Conditional edges call a function that inspects state and returns a string key mapping to the next node.

- Graph (StateGraph.compile()): The StateGraph object that assembles nodes, edges, entry point, and end conditions. Calling .compile() returns a runnable that can be invoked, streamed, or persisted with a checkpointer.

Execution Flow

A Typical Agentic Cycle

Each invocation passes through a structured cycle: input is received, the LLM reasons over it, tools are conditionally called, and state is updated until the termination condition is satisfied.

[Diagram: input received → agent (LLM with tools & memory) → call a tool or finish? → tools (append result) → output returned]

The loop: After the Tools node executes, execution returns to the Agent node. The LLM sees the tool result in its message history and either calls another tool or signals completion — enabling true ReAct-style reasoning.
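The loop described above can be sketched without any framework. The following is a minimal, library-free illustration, not LangGraph's implementation: the "LLM" is a deterministic stand-in, and the names `TOOLS`, `agent_node`, `tools_node`, `route_after_agent`, and `run` are hypothetical.

```python
from typing import Callable

# Hypothetical tool registry: tool name -> plain Python function.
TOOLS: dict[str, Callable[[int, int], int]] = {
    "multiply": lambda a, b: a * b,
}


def agent_node(state: dict) -> dict:
    """Stand-in for the LLM: request a tool once, then answer from its result."""
    last = state["messages"][-1]
    if last["role"] == "tool":
        # A tool result is in the history, so signal completion.
        answer = f"The answer is {last['content']}."
        return {"messages": [{"role": "assistant", "content": answer}]}
    # No tool result yet, so ask for one.
    return {"messages": [{"role": "assistant", "tool_call": ("multiply", (42, 37))}]}


def tools_node(state: dict) -> dict:
    """Run the requested tool and return its result as a new message."""
    name, args = state["messages"][-1]["tool_call"]
    return {"messages": [{"role": "tool", "content": str(TOOLS[name](*args))}]}


def route_after_agent(state: dict) -> str:
    """Go to the tools node if the agent asked for a tool, otherwise finish."""
    return "tools" if "tool_call" in state["messages"][-1] else "end"


def run(question: str, max_steps: int = 10) -> dict:
    state = {"messages": [{"role": "user", "content": question}]}
    for _ in range(max_steps):  # guard against a loop that never terminates
        state["messages"] += agent_node(state)["messages"]
        if route_after_agent(state) == "end":
            break
        state["messages"] += tools_node(state)["messages"]
    return state


final_state = run("What is 42 * 37?")
print(final_state["messages"][-1]["content"])
```

The message history grows by one entry per node visit, which is what lets the agent see the tool result on its second pass.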

Implementation

Code Examples

```python
from langgraph.graph import StateGraph, END
from langchain_anthropic import ChatAnthropic

# AgentState, agent_node, tool_node, and should_continue are defined elsewhere.

# 1. Define the graph
graph = StateGraph(AgentState)

# 2. Add nodes
graph.add_node("agent", agent_node)
graph.add_node("tools", tool_node)

# 3. Set entry point
graph.set_entry_point("agent")

# 4. Add edges
graph.add_conditional_edges(
    "agent",
    should_continue,
    {"tools": "tools", "end": END},
)
graph.add_edge("tools", "agent")

# 5. Compile
app = graph.compile()
```
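The graph definition refers to a `should_continue` router without showing it. One plausible shape is sketched below, assuming the last message exposes a `tool_calls` attribute (as LangChain AI messages do) and that the state tracks an `iteration_count`. `StubAIMessage` and `MAX_ITERATIONS` are hypothetical stand-ins so the sketch runs without any LLM library.

```python
from dataclasses import dataclass, field

MAX_ITERATIONS = 5  # hypothetical safety cap, not a LangGraph default


@dataclass
class StubAIMessage:
    """Minimal stand-in for a chat message that may carry tool calls."""
    content: str = ""
    tool_calls: list = field(default_factory=list)


def should_continue(state: dict) -> str:
    """Return the key of the next branch: "tools" or "end"."""
    last_message = state["messages"][-1]
    if state.get("iteration_count", 0) >= MAX_ITERATIONS:
        return "end"  # stop runaway loops even if a tool was requested
    if getattr(last_message, "tool_calls", None):
        return "tools"  # the model asked for a tool
    return "end"  # plain answer, nothing left to do


wants_tool = {
    "messages": [StubAIMessage(tool_calls=[{"name": "multiply"}])],
    "iteration_count": 1,
}
finished = {"messages": [StubAIMessage(content="1554")], "iteration_count": 2}
print(should_continue(wants_tool), should_continue(finished))
```

The returned strings match the keys of the mapping passed to `add_conditional_edges`, so `"tools"` routes to the tools node and `"end"` routes to `END`.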

```python
import asyncio
from langchain_core.messages import HumanMessage

# Invoke synchronously
result = app.invoke({
    "messages": [
        HumanMessage(content="What is 42 * 37?")
    ]
})

# Stream intermediate steps
async def run_stream():
    async for event in app.astream_events(
        {"messages": [...]}, version="v2"
    ):
        if event["event"] == "on_chat_model_stream":
            chunk = event["data"]["chunk"]
            print(chunk.content, end="")
```

```python
from typing import TypedDict, Annotated
from langchain_core.messages import BaseMessage
import operator

class AgentState(TypedDict):
    # operator.add is the reducer:
    # messages are appended, not overwritten
    messages: Annotated[
        list[BaseMessage], operator.add
    ]
    # Simple fields just get overwritten
    iteration_count: int
    final_answer: str | None
    tool_results: list[dict]
```
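To make the reducer behaviour concrete, here is a library-free sketch of how an update could be merged into state using the `Annotated` metadata. It illustrates the idea only and is not LangGraph's internal merge logic. `apply_update` is a hypothetical helper, and messages are plain strings to keep the example dependency-free.

```python
import operator
from typing import Annotated, Any, TypedDict, get_type_hints


class AgentState(TypedDict):
    messages: Annotated[list[str], operator.add]  # reducer: append
    iteration_count: int                           # no reducer: overwrite


def apply_update(state: dict[str, Any], update: dict[str, Any], schema: type) -> dict[str, Any]:
    """Merge a node's partial update into state, honouring per-key reducers."""
    hints = get_type_hints(schema, include_extras=True)
    merged = dict(state)
    for key, value in update.items():
        # Annotated[...] types expose their extra arguments via __metadata__.
        metadata = getattr(hints[key], "__metadata__", ())
        reducer = metadata[0] if metadata and callable(metadata[0]) else None
        if reducer is not None and key in merged:
            merged[key] = reducer(merged[key], value)
        else:
            merged[key] = value
    return merged


state = {"messages": ["hello"], "iteration_count": 0}
state = apply_update(
    state, {"messages": ["tool result"], "iteration_count": 1}, AgentState
)
print(state)
```

Keys with a reducer accumulate across nodes, while keys without one simply take the latest value written.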

```python
from typing import Annotated

from pydantic import BaseModel, Field
from langgraph.graph import add_messages

class ResearchState(BaseModel):
    messages: Annotated[list, add_messages]
    query: str = ""
    sources: list[str] = Field(default_factory=list)
    draft: str = ""
    approved: bool = False
    revision_count: int = 0

# Nodes receive the full state and return a partial update.
# `llm` is assumed to be a chat model created elsewhere.
def draft_node(state: ResearchState):
    # invoke() returns a message object; store its text content.
    return {"draft": llm.invoke(state.query).content}
```

Design Patterns

Workflow Patterns in the Wild

The agent alternates between Reasoning and Acting — calling tools, observing results, and iterating until a satisfying answer is reached.

A planner node generates a list of sub-tasks. An executor node works through each, updating state. A reviewer checks completeness.

After generating an answer, the graph routes to a critic node that evaluates quality. If unsatisfactory, execution loops back to regenerate.
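The generate-and-critique loop can be sketched in plain Python. The generator and critic below are deterministic stand-ins for LLM calls, and `generate`, `critique`, `reflect`, and `max_revisions` are hypothetical names chosen for illustration.

```python
from typing import Optional


def generate(question: str, feedback: list[str]) -> str:
    """Stand-in generator: each piece of feedback adds a detail to the draft."""
    draft = f"Answer to: {question}"
    for note in feedback:
        draft += f" [{note}]"
    return draft


def critique(draft: str) -> Optional[str]:
    """Stand-in critic: return feedback, or None when the draft is acceptable."""
    if "cite sources" not in draft:
        return "cite sources"
    return None


def reflect(question: str, max_revisions: int = 3) -> dict:
    state = {"draft": "", "feedback": [], "revision_count": 0}
    while True:
        state["draft"] = generate(question, state["feedback"])
        note = critique(state["draft"])
        # Stop when the critic is satisfied or the revision budget is spent.
        if note is None or state["revision_count"] >= max_revisions:
            return state
        state["feedback"].append(note)
        state["revision_count"] += 1


result = reflect("Why use graphs for agents?")
print(result["revision_count"], result["draft"])
```

The revision cap matters in practice: without it, a critic that is never satisfied would keep the graph cycling indefinitely.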

Fan out to multiple nodes simultaneously using the Send API: each branch works on a chunk of data, and a merge node aggregates the results.
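The fan-out and merge shape can be illustrated with the standard library. This sketch substitutes a thread pool for LangGraph's Send API, so it shows the data flow rather than the library call. `worker_node`, `merge_node`, and `fan_out` are hypothetical names.

```python
from concurrent.futures import ThreadPoolExecutor


def worker_node(chunk: list[int]) -> dict:
    """Each branch processes one chunk and returns a partial state update."""
    return {"partial_sums": [sum(chunk)]}


def merge_node(updates: list[dict]) -> dict:
    """Aggregate the partial updates produced by all branches."""
    partials = [value for update in updates for value in update["partial_sums"]]
    return {"partial_sums": partials, "total": sum(partials)}


def fan_out(data: list[int], chunk_size: int) -> dict:
    chunks = [data[i:i + chunk_size] for i in range(0, len(data), chunk_size)]
    with ThreadPoolExecutor() as pool:
        # pool.map returns results in the same order as the input chunks.
        updates = list(pool.map(worker_node, chunks))
    return merge_node(updates)


result = fan_out(list(range(1, 11)), chunk_size=4)
print(result)
```

Because every branch returns a list under the same key, the merge step is simple concatenation, which is the role an append-style reducer plays in a graph state.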

A supervisor LLM dynamically routes work to specialist sub-agents — researcher, coder, analyst — and aggregates their outputs into a final response.

Execution pauses at a checkpoint that requires human input. A checkpointer persists the state (in memory or in a database, depending on the backend), and the run resumes once the human approves or corrects it.
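The pause-and-resume idea can be sketched with a JSON file standing in for the checkpointer. This is an illustration of the pattern under simplified assumptions, not LangGraph's checkpointer API. `save_checkpoint`, `load_checkpoint`, and the two node functions are hypothetical.

```python
import json
import tempfile
from pathlib import Path


def save_checkpoint(path: Path, state: dict, next_node: str) -> None:
    """Persist the state and the node to run when execution resumes."""
    path.write_text(json.dumps({"state": state, "next": next_node}))


def load_checkpoint(path: Path) -> tuple[dict, str]:
    payload = json.loads(path.read_text())
    return payload["state"], payload["next"]


def draft_node(state: dict) -> dict:
    return {**state, "draft": "Refund approved for order 123"}


def publish_node(state: dict) -> dict:
    # Publish only if the human reviewer approved the draft.
    return {**state, "published": state["approved"]}


with tempfile.TemporaryDirectory() as tmp:
    checkpoint = Path(tmp) / "thread-1.json"

    # First run: execute up to the review point, then pause.
    state = draft_node({"approved": False})
    save_checkpoint(checkpoint, state, next_node="publish")

    # Later: a human reviews the draft and the run resumes from the checkpoint.
    state, next_node = load_checkpoint(checkpoint)
    state["approved"] = True  # the human's decision
    if next_node == "publish":
        state = publish_node(state)

print(state)
```

Storing the next node alongside the state is what allows the run to continue from the pause point instead of restarting from the entry node.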

Applications

Real-World Use Cases

An agent searches the web, fetches documents, synthesizes findings, and iteratively refines a structured report — looping until quality criteria are met.

- query_planner → web_search × N

- fetch & parse → synthesize

- critic evaluates → revise or END

Generate code, execute it in a sandbox, observe errors, and loop to fix — automatically — until tests pass or a human intervenes.

- spec_reader → code_writer

- executor → parse_error

- debugger → code_writer (loop)

Classify intent, fetch customer data, attempt automated resolution, escalate to human if needed — with full state persistence across sessions.

- intent_classifier → data_fetcher

- resolver → satisfaction_check

- escalate or resolve → END

Fan out across data sources in parallel, normalize schemas, run statistical analysis, generate insights, and produce formatted reports with citations.

- splitter → [N source agents]

- normalizer → analyzer

- report_writer → END

Comparison

LangGraph vs Alternatives

| Feature | LangGraph | LangChain Chains | CrewAI | AutoGen |
|---|---|---|---|---|
| Cyclic graphs (loops) | ✓ Native | ✗ Linear only | ✓ Yes | ✓ Yes |
| Persistent state | ✓ Checkpointers | ✗ Manual | Partial | ✓ Yes |
| Human-in-the-loop | ✓ First-class | ✗ No | Limited | ✓ Yes |
| Multi-agent | ✓ Native | ✗ Manual | ✓ Core feature | ✓ Core feature |
| Streaming | ✓ Token + events | Token only | Limited | ✓ Yes |
| Learning curve | Moderate | Low | Low | High |