Transformer Architecture Explained: Attention Mechanisms, Self-Attention & Positional Encoding 2026

Deep Learning Foundations

Transformer Architectures & Attention Mechanisms

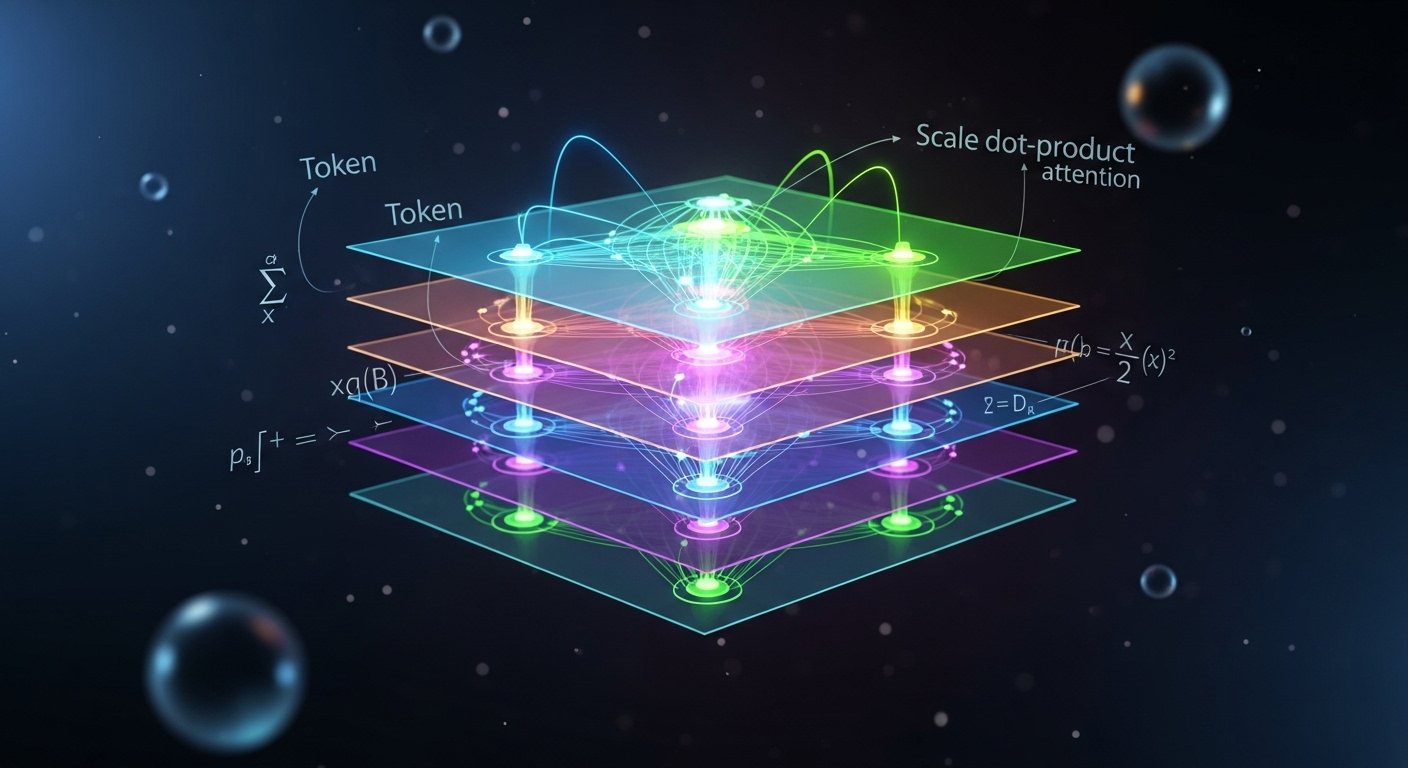

A visual guide to the architecture that reshaped modern AI — from self-attention and positional encodings to multi-head projections and feed-forward layers.

Origins

What is a Transformer?

Introduced in "Attention Is All You Need" (Vaswani et al., 2017), the Transformer dispensed with recurrence entirely. Instead of processing tokens one at a time like an RNN, it computes relationships between all pairs of tokens at once. This makes training highly parallel and gives every token direct access to every other token, so long-range dependencies are much easier to capture.

Today, transformers power large language models, image recognition, protein structure prediction, code generation, and many other state-of-the-art AI systems.

Core Mechanism

Scaled Dot-Product Attention

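The fundamental operation. Each token is mapped to three vectors, a Query, a Key, and a Value, via learned linear projections. The attention score between two tokens is the dot product of one token's Query with the other token's Key, divided by $\sqrt{d_k}$, where $d_k$ is the key dimension. A softmax over each row turns the scores into a probability distribution, and each token's output is the correspondingly weighted average of the Value vectors:

$$\mathrm{Attention}(Q, K, V) = \mathrm{softmax}\!\left(\frac{QK^\top}{\sqrt{d_k}}\right)V$$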

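The scaling factor $\sqrt{d_k}$ keeps the dot products from growing too large as the key dimension increases. If the components of a query $q$ and a key $k$ are independent with mean 0 and variance 1, then $q \cdot k$ has mean 0 and variance $d_k$. Unscaled scores therefore grow with the dimension and push the softmax into saturated regions where its gradients are vanishingly small.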

Figure: attention weights from “sat” to every token in the sequence.
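A minimal sketch of scaled dot-product attention, softmax(QKᵀ/√d_k)V, in dependency-free Python. Matrices are plain lists of lists (an assumption for readability; real implementations use batched tensors on accelerators), and the function names are illustrative rather than taken from any library.

```python
import math

def softmax(row):
    # Subtract the row maximum first so math.exp cannot overflow.
    m = max(row)
    exps = [math.exp(x - m) for x in row]
    total = sum(exps)
    return [e / total for e in exps]

def scaled_dot_product_attention(Q, K, V):
    """Q: n_q x d_k, K: n_k x d_k, V: n_k x d_v, all lists of lists.

    Returns (output, weights): output is n_q x d_v, weights is n_q x n_k.
    """
    d_k = len(K[0])
    # scores[i][j] = (q_i . k_j) / sqrt(d_k)
    scores = [[sum(a * b for a, b in zip(q, k)) / math.sqrt(d_k) for k in K] for q in Q]
    # Each row of weights is a probability distribution over the keys.
    weights = [softmax(row) for row in scores]
    # Output row i is the average of the value vectors, weighted by row i of weights.
    d_v = len(V[0])
    output = [[sum(w * v[c] for w, v in zip(w_row, V)) for c in range(d_v)] for w_row in weights]
    return output, weights

# Self-attention on a toy 3-token sequence: Q, K, and V are the same matrix here
# (identity projections, an assumption; real layers apply learned W^Q, W^K, W^V first).
X = [[1.0, 0.0], [0.0, 1.0], [1.0, 1.0]]
out, weights = scaled_dot_product_attention(X, X, X)
```

Each row of `weights` sums to 1. The third token, whose vector overlaps both of the others, gives its largest weight to itself and splits the rest evenly between the first two tokens.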

Multi-Head Attention

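Rather than computing a single attention function, the Transformer projects Queries, Keys, and Values into h different learned subspaces (each of dimension $d_k = d_v = d_{\text{model}}/h$ in the original paper) and runs attention in all of them in parallel. Each head can specialize: one might track syntactic dependencies, another semantic similarity, another coreference. The head outputs are concatenated and passed through a final learned output projection $W^O$.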

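$$\mathrm{MultiHead}(Q, K, V) = \mathrm{Concat}(\mathrm{head}_1, \ldots, \mathrm{head}_h)\,W^O$$

where $\mathrm{head}_i = \mathrm{Attention}(QW_i^Q, KW_i^K, VW_i^V)$.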

Each head has its own projection matrices $W_i^Q$, $W_i^K$, and $W_i^V$, learned independently, so different heads can pick up different relational patterns at the same time.
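The sketch below extends scaled dot-product attention to h heads: each head projects the inputs with its own matrices, attends in a d_model/h-dimensional subspace, and the concatenated head outputs are multiplied by W^O. It is a minimal pure-Python illustration in which random weights stand in for learned ones; `init_params` and the other helper names are hypothetical. Passing the same sequence as `X_q` and `X_kv` gives self-attention.

```python
import math
import random

def matmul(A, B):
    # (n x m) @ (m x p) -> (n x p); zip(*B) iterates over the columns of B.
    return [[sum(a * b for a, b in zip(row, col)) for col in zip(*B)] for row in A]

def softmax(row):
    m = max(row)
    exps = [math.exp(x - m) for x in row]
    total = sum(exps)
    return [e / total for e in exps]

def attention(Q, K, V):
    # Scaled dot-product attention: softmax(Q K^T / sqrt(d_k)) V.
    d_k = len(K[0])
    K_T = [list(col) for col in zip(*K)]
    weights = [softmax([s / math.sqrt(d_k) for s in row]) for row in matmul(Q, K_T)]
    return matmul(weights, V)

def random_matrix(rows, cols, rng):
    return [[rng.gauss(0.0, 1.0 / math.sqrt(rows)) for _ in range(cols)] for _ in range(rows)]

def init_params(d_model, h, seed=0):
    """Hypothetical initializer: random stand-ins for the learned W_i^Q, W_i^K, W_i^V and W^O."""
    if d_model % h:
        raise ValueError("d_model must be divisible by the number of heads h")
    rng = random.Random(seed)
    d_head = d_model // h  # d_k = d_v = d_model / h, as in the original paper
    heads = [tuple(random_matrix(d_model, d_head, rng) for _ in range(3)) for _ in range(h)]
    return {"heads": heads, "W_o": random_matrix(h * d_head, d_model, rng)}

def multi_head_attention(X_q, X_kv, params):
    # Each head attends in its own projected subspace.
    head_outputs = [attention(matmul(X_q, W_q), matmul(X_kv, W_k), matmul(X_kv, W_v))
                    for W_q, W_k, W_v in params["heads"]]
    # Concatenate the heads' outputs feature-wise, one position at a time.
    concat = [sum((head[i] for head in head_outputs), []) for i in range(len(X_q))]
    return matmul(concat, params["W_o"])

d_model, h = 8, 2
params = init_params(d_model, h)
X = [[0.1 * (i + j) for j in range(d_model)] for i in range(3)]  # 3 tokens
Y = multi_head_attention(X, X, params)  # self-attention: 3 rows of length d_model
```

Splitting d_model across heads keeps the total cost close to that of one full-width head, which is why the original paper uses d_model/h per head.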

Attention Variants

Self-Attention

Q, K, and V all come from the same sequence, so each token attends to every token in that sequence, including itself. This is the backbone of encoder representations.

Cross-Attention

Q comes from the decoder, while K and V come from the encoder output. Allows the decoder to selectively focus on relevant encoder states during generation.
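A minimal single-head sketch of cross-attention: queries come from decoder states, while keys and values come from the encoder output, so the weight matrix has shape (target length) × (source length). For brevity it uses identity projections (an assumption; a real layer applies a learned W^Q to the decoder states and learned W^K, W^V to the encoder output), and the function name is hypothetical.

```python
import math

def cross_attention(decoder_states, encoder_states):
    """Queries from the decoder, keys and values from the encoder (identity projections)."""
    d = len(encoder_states[0])
    weights = []
    for dec in decoder_states:
        # One score per encoder position for this decoder position.
        scores = [sum(q * k for q, k in zip(dec, enc)) / math.sqrt(d) for enc in encoder_states]
        m = max(scores)
        exps = [math.exp(s - m) for s in scores]
        total = sum(exps)
        weights.append([e / total for e in exps])
    # Each decoder position receives a weighted mix of encoder vectors.
    outputs = [[sum(w * enc[c] for w, enc in zip(row, encoder_states)) for c in range(d)]
               for row in weights]
    return outputs, weights

encoder_out = [[1.0, 0.0, 0.0], [0.0, 1.0, 0.0], [0.0, 0.0, 1.0], [1.0, 1.0, 0.0]]  # 4 source positions
decoder_in = [[2.0, 0.0, 0.0], [0.0, 0.0, 2.0]]                                     # 2 target positions
out, w = cross_attention(decoder_in, encoder_out)  # w is 2 x 4, out is 2 x 3
```

The second decoder position focuses mostly on the third encoder position, the only source vector aligned with its query.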

Masked Self-Attention

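Used in decoder blocks. Scores for future positions are set to −∞ before the softmax, so each position can attend only to itself and earlier positions. This enforces auto-regressive generation: the prediction at position t cannot depend on tokens after t.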
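A minimal sketch of the causal mask: scores for positions after the query are replaced with -inf before the softmax, so they receive exactly zero weight. Identity Q/K projections are used for brevity (an assumption; real decoders use learned projections), and the function name is hypothetical.

```python
import math

def causal_attention_weights(X):
    """Masked self-attention weights over the rows of X (identity Q/K projections)."""
    n, d_k = len(X), len(X[0])
    weights = []
    for i in range(n):
        # Mask: positions j > i get -inf so the softmax gives them zero weight.
        scores = [sum(a * b for a, b in zip(X[i], X[j])) / math.sqrt(d_k) if j <= i else -math.inf
                  for j in range(n)]
        m = max(scores)  # finite, because position i can always attend to itself
        exps = [math.exp(s - m) for s in scores]  # math.exp(-inf) evaluates to 0.0
        total = sum(exps)
        weights.append([e / total for e in exps])
    return weights

X = [[1.0, 0.0], [0.0, 1.0], [1.0, 1.0]]
W = causal_attention_weights(X)  # lower-triangular: the first row is [1.0, 0.0, 0.0]
```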

Flash Attention

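An IO-aware algorithm that computes exact attention (not an approximation) by tiling the computation to minimize reads and writes between GPU high-bandwidth memory (HBM) and on-chip SRAM. Because the full attention matrix is never materialized, memory use grows linearly rather than quadratically with sequence length, which makes training on much longer sequences practical.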
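FlashAttention's speedup comes from fused GPU kernels that keep tiles in fast on-chip memory, which pure Python cannot show. The sketch below illustrates only the algorithmic core that tiling relies on: visiting keys and values one block at a time with an online (running) softmax, and rescaling earlier partial sums whenever a larger score appears. The full row of scores is never stored, yet the result matches naive attention. Function names and the default block size are illustrative assumptions.

```python
import math

def naive_attention_row(q, K, V):
    # Reference: materialize every score for this query, then softmax and average.
    d_k = len(q)
    scores = [sum(a * b for a, b in zip(q, k)) / math.sqrt(d_k) for k in K]
    m = max(scores)
    exps = [math.exp(s - m) for s in scores]
    total = sum(exps)
    return [sum(e * v[c] for e, v in zip(exps, V)) / total for c in range(len(V[0]))]

def tiled_attention_row(q, K, V, block_size=2):
    """Exact attention for one query, keeping only running statistics across K/V blocks."""
    d_k, d_v = len(q), len(V[0])
    running_max = -math.inf  # largest score seen so far
    running_sum = 0.0        # sum of exp(score - running_max) so far
    acc = [0.0] * d_v        # sum of exp(score - running_max) * v so far
    for start in range(0, len(K), block_size):
        K_blk, V_blk = K[start:start + block_size], V[start:start + block_size]
        scores = [sum(a * b for a, b in zip(q, k)) / math.sqrt(d_k) for k in K_blk]
        new_max = max(running_max, max(scores))
        # Rescale earlier statistics to the new maximum (0.0 on the first block).
        correction = math.exp(running_max - new_max)
        running_sum *= correction
        acc = [a * correction for a in acc]
        for s, v in zip(scores, V_blk):
            p = math.exp(s - new_max)
            running_sum += p
            acc = [a + p * vc for a, vc in zip(acc, v)]
        running_max = new_max
    return [a / running_sum for a in acc]

q = [1.0, 0.5]
K = [[1.0, 0.0], [0.0, 1.0], [2.0, 2.0], [-1.0, 0.5], [0.3, 0.3]]
V = [[1.0], [2.0], [3.0], [4.0], [5.0]]
exact = naive_attention_row(q, K, V)
tiled = tiled_attention_row(q, K, V, block_size=2)  # agrees with exact up to rounding
```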

Positional Information

Positional Encodings

Self-attention is inherently permutation-equivariant — it has no built-in notion of order. Positional encodings inject sequence position information into the token embeddings before they enter the attention layers.

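The original paper used fixed sinusoidal encodings, chosen partly because the authors hypothesized they would let the model extrapolate to sequences longer than those seen in training. Modern variants include learned absolute positions, relative position biases (ALiBi, T5 bias), and Rotary Position Embeddings (RoPE), which rotate the query and key vectors by position-dependent angles so that the Q·K score depends on the relative offset between tokens.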

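$$PE_{(pos,\,2i)} = \sin\!\left(\frac{pos}{10000^{2i/d_{\text{model}}}}\right), \qquad PE_{(pos,\,2i+1)} = \cos\!\left(\frac{pos}{10000^{2i/d_{\text{model}}}}\right)$$

where $pos$ is the token position and $i$ indexes pairs of embedding dimensions.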
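A minimal pure-Python sketch of the sinusoidal encoding, plus a toy rotary embedding for comparison. The RoPE helper rotates consecutive dimension pairs, the pairing used in the RoFormer paper (some libraries pair dimensions differently); both function names are hypothetical. The final lines illustrate why RoPE encodes relative position: after rotating a query and a key by their positions, their dot product depends only on the offset between those positions.

```python
import math

def sinusoidal_pe(max_len, d_model):
    """max_len x d_model table: PE[pos][2i] = sin(pos / 10000^(2i/d_model)),
    PE[pos][2i+1] = cos(same angle). Assumes d_model is even."""
    pe = [[0.0] * d_model for _ in range(max_len)]
    for pos in range(max_len):
        for i in range(d_model // 2):
            angle = pos / 10000 ** (2 * i / d_model)
            pe[pos][2 * i] = math.sin(angle)
            pe[pos][2 * i + 1] = math.cos(angle)
    return pe

def rope(x, pos, base=10000.0):
    """Rotate each pair (x[2i], x[2i+1]) by the angle pos * base^(-2i/d). Assumes len(x) is even."""
    d = len(x)
    out = []
    for i in range(d // 2):
        theta = pos * base ** (-2 * i / d)
        c, s = math.cos(theta), math.sin(theta)
        x0, x1 = x[2 * i], x[2 * i + 1]
        out += [x0 * c - x1 * s, x0 * s + x1 * c]
    return out

def dot(a, b):
    return sum(p * r for p, r in zip(a, b))

pe = sinusoidal_pe(max_len=50, d_model=8)  # row 0 is [0.0, 1.0] repeated: sin(0), cos(0)
q, k = [0.2, -1.0, 0.5, 0.7], [1.0, 0.3, -0.4, 0.9]
near = dot(rope(q, 5), rope(k, 3))     # positions 5 and 3: offset 2
far = dot(rope(q, 105), rope(k, 103))  # positions 105 and 103: also offset 2
# near and far agree up to floating-point rounding.
```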

Architecture Walkthrough

The Encoder–Decoder Stack

The original Transformer pairs a bidirectional encoder with an auto-regressive decoder. Each sub-layer is wrapped with a residual connection followed by layer normalization, so its output is LayerNorm(x + Sublayer(x)).

- 01 Input Embedding + Positional Encoding
  Tokens are mapped to dense vectors of dimension $d_{\text{model}}$. Positional encodings are added element-wise to inject order information.

- 02 Multi-Head Self-Attention
  Each encoder layer applies multi-head self-attention, followed by Add & Norm (residual connection + layer normalization).

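- 03 Position-wise Feed-Forward Network
  A two-layer MLP (ReLU in the original paper, GELU in many later models) applied independently to each token position. The hidden layer expands to 4 × $d_{\text{model}}$ (2048 for $d_{\text{model}} = 512$ in the original base model) and then projects back to $d_{\text{model}}$. Another Add & Norm follows.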

- 04 Decoder: Masked Self-Attention + Cross-Attention
  Each decoder layer applies masked (causal) self-attention, then cross-attention over the encoder outputs, then a position-wise feed-forward network, each wrapped in Add & Norm. Cross-attention is what lets generation condition on the full input.

- 05 Linear Projection + Softmax
  The final decoder state is projected to vocabulary size and passed through softmax to produce a probability distribution over the next token.
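Steps 02 and 03 can be sketched as a single post-norm encoder layer: each sub-layer's output is added to its input and the sum is layer-normalized. This is a minimal pure-Python illustration under simplifying assumptions: one attention head instead of several, random weights instead of trained ones, ReLU activation, and no learned gain or bias in the layer norm. All helper names are hypothetical.

```python
import math
import random

def matmul(A, B):
    return [[sum(a * b for a, b in zip(row, col)) for col in zip(*B)] for row in A]

def add(A, B):
    return [[a + b for a, b in zip(ra, rb)] for ra, rb in zip(A, B)]

def layer_norm(X, eps=1e-5):
    # Normalize each position's vector to zero mean and unit variance
    # (the learned gain and bias are omitted for brevity).
    out = []
    for row in X:
        mean = sum(row) / len(row)
        var = sum((x - mean) ** 2 for x in row) / len(row)
        out.append([(x - mean) / math.sqrt(var + eps) for x in row])
    return out

def self_attention(X, W_q, W_k, W_v):
    # Single-head scaled dot-product self-attention.
    Q, K, V = matmul(X, W_q), matmul(X, W_k), matmul(X, W_v)
    d_k = len(K[0])
    out = []
    for q in Q:
        scores = [sum(a * b for a, b in zip(q, k)) / math.sqrt(d_k) for k in K]
        m = max(scores)
        exps = [math.exp(s - m) for s in scores]
        total = sum(exps)
        out.append([sum(e * v[c] for e, v in zip(exps, V)) / total for c in range(len(V[0]))])
    return out

def feed_forward(X, W1, b1, W2, b2):
    # Position-wise: the same two-layer MLP is applied to every row independently.
    hidden = [[max(0.0, z + b) for z, b in zip(row, b1)] for row in matmul(X, W1)]  # ReLU
    return [[y + b for y, b in zip(row, b2)] for row in matmul(hidden, W2)]

def encoder_layer(X, p):
    # Post-norm residual sub-layers, as in the original paper: LayerNorm(x + Sublayer(x)).
    X = layer_norm(add(X, self_attention(X, p["W_q"], p["W_k"], p["W_v"])))
    return layer_norm(add(X, feed_forward(X, p["W1"], p["b1"], p["W2"], p["b2"])))

def init_layer(d_model, seed=0):
    """Hypothetical initializer with random stand-ins for trained weights."""
    rng = random.Random(seed)
    def mat(rows, cols):
        return [[rng.gauss(0.0, 1.0 / math.sqrt(rows)) for _ in range(cols)] for _ in range(rows)]
    d_ff = 4 * d_model  # hidden layer expands to 4x d_model, then projects back
    return {"W_q": mat(d_model, d_model), "W_k": mat(d_model, d_model), "W_v": mat(d_model, d_model),
            "W1": mat(d_model, d_ff), "b1": [0.0] * d_ff,
            "W2": mat(d_ff, d_model), "b2": [0.0] * d_model}

X = [[0.1 * (i + j) for j in range(8)] for i in range(4)]  # 4 tokens, d_model = 8
Y = encoder_layer(X, init_layer(8))  # same shape as X; each row now has mean ~0
```

A decoder layer follows the same Add & Norm pattern, with a causally masked self-attention sub-layer and a cross-attention sub-layer in place of the single self-attention call.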

Why Transformers Dominate

O(1) Path Length

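Any two tokens are connected by a direct attention path regardless of distance. In an RNN, information and gradients between tokens n steps apart must pass through n recurrent steps, which makes long-range dependencies hard to learn and prone to vanishing gradients.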

Hardware Friendly

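Self-attention is dominated by dense matrix multiplications, which map efficiently onto GPU/TPU tensor cores, and every position in a training sequence can be processed in parallel. This is what made training at very large scale practical.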

Transfer Learning

Pre-train once on massive corpora; fine-tune on downstream tasks. Rich contextual representations transfer across domains remarkably well.

Scaling Laws

Empirically, loss falls smoothly and predictably (roughly as a power law) as model size, training data, and compute increase, so the payoff of a larger training run can be estimated before committing to it.

Landmark Models

The Family Tree

- BERT · Encoder-only · Bidirectional · 2018
  Trained with masked language modeling and next-sentence prediction. Became the dominant choice for classification and other language-understanding tasks.

- GPT · Decoder-only · Auto-regressive · 2018–present
  Causal language modeling at enormous scale. GPT-2, GPT-3, and GPT-4 demonstrated abilities such as few-shot, in-context learning from examples in the prompt, without task-specific fine-tuning.

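- T5 · Encoder–Decoder · Text-to-Text · 2019
  Reframed every NLP task as text-to-text: inputs and outputs are both text strings, so a single model, training objective, and decoding procedure handle translation, summarization, classification, and question answering.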

- ViT · Vision Transformer · Image Patches · 2020
  Showed that a standard Transformer applied to a sequence of image patches (16×16 pixels in the original paper) can match or exceed state-of-the-art CNNs on image classification when pre-trained on large datasets.
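ViT's tokenization step can be sketched in a few lines: an H × W × C image is cut into non-overlapping P × P patches, and each patch is flattened into a vector of length P²·C, giving N = HW/P² tokens. In the actual model each flattened patch is then linearly projected to d_model and combined with a learned position embedding; that step is omitted here. The function name and the nested-list image format are assumptions for illustration.

```python
def image_to_patches(image, patch_size):
    """Split an H x W x C image, given as nested lists image[row][col][channel],
    into non-overlapping patch_size x patch_size patches, each flattened to one vector."""
    H, W = len(image), len(image[0])
    if H % patch_size or W % patch_size:
        raise ValueError("image height and width must be divisible by patch_size")
    tokens = []
    # Patches are emitted in raster order: left to right, then top to bottom.
    for top in range(0, H, patch_size):
        for left in range(0, W, patch_size):
            patch = []
            for r in range(top, top + patch_size):
                for c in range(left, left + patch_size):
                    patch.extend(image[r][c])  # all C channel values of this pixel
            tokens.append(patch)
    return tokens

# A 4 x 4 RGB toy image whose pixel at (r, c) stores [r, c, 0].
image = [[[r, c, 0] for c in range(4)] for r in range(4)]
tokens = image_to_patches(image, patch_size=2)  # N = (4 * 4) / 2**2 = 4 tokens, each of length 2*2*3 = 12
```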