Artificial Intelligence · Conceptual Primer

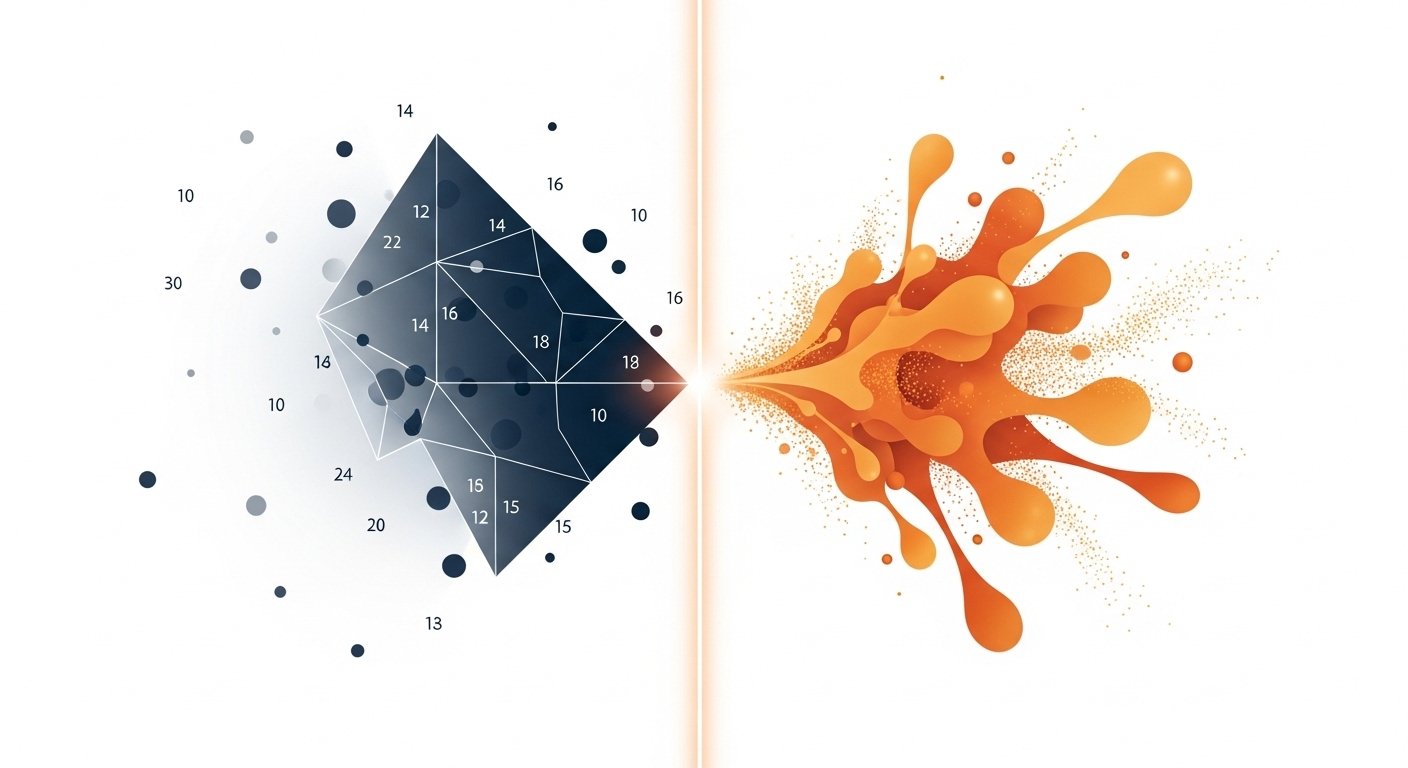

Discriminative vs Generative Models

Two philosophies of machine learning — one learns to decide, the other learns to create. Understanding the distinction unlocks how modern AI really works.

The Core Distinction

What separates them?

At the heart of machine learning lies a fundamental fork: should a model learn boundaries between things, or learn what things actually look like? This single question divides the entire landscape into two families.

“Discriminative models ask: which box does this belong in? Generative models ask: what does a member of this box look like?”

Discriminative: learns the boundary

A discriminative model estimates the conditional probability P(y | x): given input x, what is the most likely label y? It fits decision boundaries directly in input space without modelling how the inputs themselves are distributed.
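As a minimal sketch of the discriminative approach, the snippet below fits a one-dimensional logistic regression by gradient descent. The toy data (two Gaussian clusters), the function names, the learning rate and the epoch count are all assumptions chosen for illustration; nothing here comes from a specific library.

```python
import math
import random


def sigmoid(z: float) -> float:
    """Numerically stable logistic function."""
    if z >= 0:
        return 1.0 / (1.0 + math.exp(-z))
    e = math.exp(z)
    return e / (1.0 + e)


def fit_logistic(xs, ys, lr=0.1, epochs=2000):
    """Fit P(y=1 | x) = sigmoid(w*x + b) by gradient descent on the mean log loss."""
    w, b = 0.0, 0.0
    n = len(xs)
    for _ in range(epochs):
        grad_w = grad_b = 0.0
        for x, y in zip(xs, ys):
            err = sigmoid(w * x + b) - y  # derivative of the log loss w.r.t. the logit
            grad_w += err * x
            grad_b += err
        w -= lr * grad_w / n
        b -= lr * grad_b / n
    return w, b


# Hypothetical toy data: class 0 centred at -2, class 1 centred at +2.
random.seed(0)
xs = [random.gauss(-2.0, 1.0) for _ in range(100)] + [random.gauss(2.0, 1.0) for _ in range(100)]
ys = [0] * 100 + [1] * 100

w, b = fit_logistic(xs, ys)
boundary = -b / w  # the input value where P(y=1 | x) = 0.5


def predict_proba(x: float) -> float:
    """Estimated P(y=1 | x) under the fitted model."""
    return sigmoid(w * x + b)
```

The model ends up with just two numbers, `w` and `b`. They say where the boundary sits and how sharply the prediction changes across it, but they hold no description of what a typical input looks like, so there is nothing to sample from.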

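Generative: learns the distribution

A generative model estimates the joint probability P(x, y) or, when no labels are involved, the data distribution P(x). Because it captures what the data looks like, a model of P(x, y) can classify via Bayes' rule, and either kind can be sampled to create new examples.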
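A generative counterpart can be sketched just as briefly. The example below assumes one-dimensional inputs and a Gaussian class-conditional density for each label; the data, function names and dictionary layout are illustrative assumptions. It estimates P(y) and P(x | y), which together give P(x, y), then uses that joint model both to classify and to draw new points.

```python
import math
import random
import statistics


def fit_class_gaussians(xs, ys):
    """Estimate the prior P(y) and a 1-D Gaussian P(x | y) for every class."""
    model = {}
    for label in sorted(set(ys)):
        pts = [x for x, y in zip(xs, ys) if y == label]
        model[label] = {
            "prior": len(pts) / len(xs),
            "mean": statistics.fmean(pts),
            "std": statistics.pstdev(pts),
        }
    return model


def gaussian_pdf(x, mean, std):
    z = (x - mean) / std
    return math.exp(-0.5 * z * z) / (std * math.sqrt(2.0 * math.pi))


def joint(model, x, label):
    """P(x, y) = P(y) * P(x | y) under the fitted model."""
    p = model[label]
    return p["prior"] * gaussian_pdf(x, p["mean"], p["std"])


def classify(model, x):
    # argmax over y of P(x, y) equals argmax of P(y | x), since P(x) is the same for all y.
    return max(model, key=lambda label: joint(model, x, label))


def sample(model, rng):
    """Draw a new (x, y) pair: pick a label from the prior, then x from its Gaussian."""
    labels = list(model)
    label = rng.choices(labels, weights=[model[l]["prior"] for l in labels])[0]
    return rng.gauss(model[label]["mean"], model[label]["std"]), label


# Hypothetical toy data: class 0 centred at -2, class 1 centred at +2.
rng = random.Random(0)
xs = [rng.gauss(-2.0, 1.0) for _ in range(100)] + [rng.gauss(2.0, 1.0) for _ in range(100)]
ys = [0] * 100 + [1] * 100

model = fit_class_gaussians(xs, ys)
new_points = [sample(model, rng) for _ in range(5)]
```

One fitted object answers both questions: `classify` decides which class a point belongs to, and `sample` produces points that resemble each class.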

Under the Hood

The probabilistic view

Both families ultimately deal in probability, but they model different quantities. P(y | x) can be derived from P(x, y), but not the other way round, so a generative model captures more about the data. That extra coverage is also why it is typically harder to train.
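The link between the two quantities is Bayes' rule. As a short worked statement (assuming a discrete label; the class-conditional density P(x | y) and the summation index y' are notation introduced here for illustration):

$$
P(y \mid x) \;=\; \frac{P(x, y)}{P(x)} \;=\; \frac{P(x \mid y)\,P(y)}{\sum_{y'} P(x \mid y')\,P(y')}
$$

A generative model estimates the terms on the right and can therefore recover the left-hand side. A discriminative model estimates the left-hand side directly and never needs P(x).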

Head to Head

Key distinctions at a glance

| Dimension | Discriminative | Generative |
|---|---|---|
| Primary goal | Classify or predict labels | Model the data distribution |
| What it learns | Decision boundary P(y \| x) | Data density P(x) or P(x, y) |
| Can generate new data? | No | Yes |
| Typical training data need | Less: focuses on boundaries | More: must model everything |
| Handles missing features? | Poorly: requires full input | Naturally: can impute |
| Interpretability | Often higher for simple models | Varies; latent spaces complex |
| Training complexity | Generally lower | Generally higher |
| Outlier / anomaly detection | Indirect, less natural | Native via low-density regions |
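The last row of the table can be illustrated with a small sketch. It assumes the simplest possible density model, a single one-dimensional Gaussian fitted to unlabelled data, and flags any point whose log density falls below a cutoff. The data, the function names and the choice of roughly the first percentile as the cutoff are arbitrary assumptions for illustration.

```python
import math
import random
import statistics


def fit_gaussian(xs):
    """Estimate a 1-D Gaussian model of P(x) from unlabelled data."""
    return statistics.fmean(xs), statistics.pstdev(xs)


def log_density(x, mean, std):
    """Log of the Gaussian density at x."""
    z = (x - mean) / std
    return -0.5 * z * z - math.log(std * math.sqrt(2.0 * math.pi))


# Hypothetical "normal" readings centred at 10 with a spread of 2.
rng = random.Random(0)
train = [rng.gauss(10.0, 2.0) for _ in range(500)]
mean, std = fit_gaussian(train)

# Cutoff: roughly the first percentile of training log densities (an arbitrary choice).
scores = sorted(log_density(x, mean, std) for x in train)
threshold = scores[len(scores) // 100]


def is_anomaly(x: float) -> bool:
    """Flag x when the model considers it to lie in a low-density region."""
    return log_density(x, mean, std) < threshold
```

No labelled anomalies are needed. The density model defines what is typical, and anything sufficiently unlikely under it is flagged. A purely discriminative classifier has no P(x) to consult, which is why the table calls its anomaly detection indirect.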

Model Families

Recognisable examples

Nearly every model you’ve encountered falls into one of these two camps, though some straddle both.

Classic discriminative examples

Logistic Regression · Support Vector Machines (SVMs) · Decision Trees · Random Forests · Conditional Random Fields (CRFs) · Traditional Neural Classifiers (e.g. ResNet for ImageNet)

Classic generative examples

Naïve Bayes · Hidden Markov Models · Variational Autoencoders (VAEs) · Generative Adversarial Networks (GANs) · Diffusion Models · Large Language Models (GPT, Claude)

Applications

Where each shines

Discriminative models excel at

Generative models excel at

The Bigger Picture

A spectrum, not a binary

Modern AI increasingly blurs the line. Discriminatively fine-tuned generative models (like instruction-tuned LLMs) combine both philosophies: they learn rich representations of the world through generative pretraining, then are steered toward specific tasks by fine-tuning on labelled or preference data. Semi-supervised and self-supervised methods often use generative pretraining in the same way, to improve discriminative downstream performance.

Understanding which regime a model operates in tells you what it fundamentally can and cannot do — making you a sharper practitioner regardless of the tools you reach for.

A conceptual overview · Concepts apply across supervised, unsupervised, and semi-supervised learning paradigms · 2025