Managing Memory in Conversational AI Agents

A comprehensive guide to how intelligent agents encode, retrieve, and maintain context across conversations — from sliding windows to hybrid retrieval architectures.

Why Memory Matters

The challenge of memory in AI systems mirrors the challenge of memory in the human mind: how do you preserve what is relevant, discard what is noise, and retrieve the right information at the right time — all within finite cognitive resources?

For conversational AI, the stakes are practical and immediate. A customer support agent that forgets a user’s name mid-conversation; a coding assistant that loses track of a codebase after ten exchanges; a medical chatbot that cannot recall a patient’s stated symptoms — these failures erode trust and usefulness.

Modern LLM agents address this through layered memory architectures, each layer trading off speed, capacity, fidelity, and cost. Understanding these trade-offs is foundational to building agents that feel genuinely intelligent and coherent over time.

“Memory is not a storage problem. It is a relevance problem — knowing what to keep, what to compress, and what to let go.”

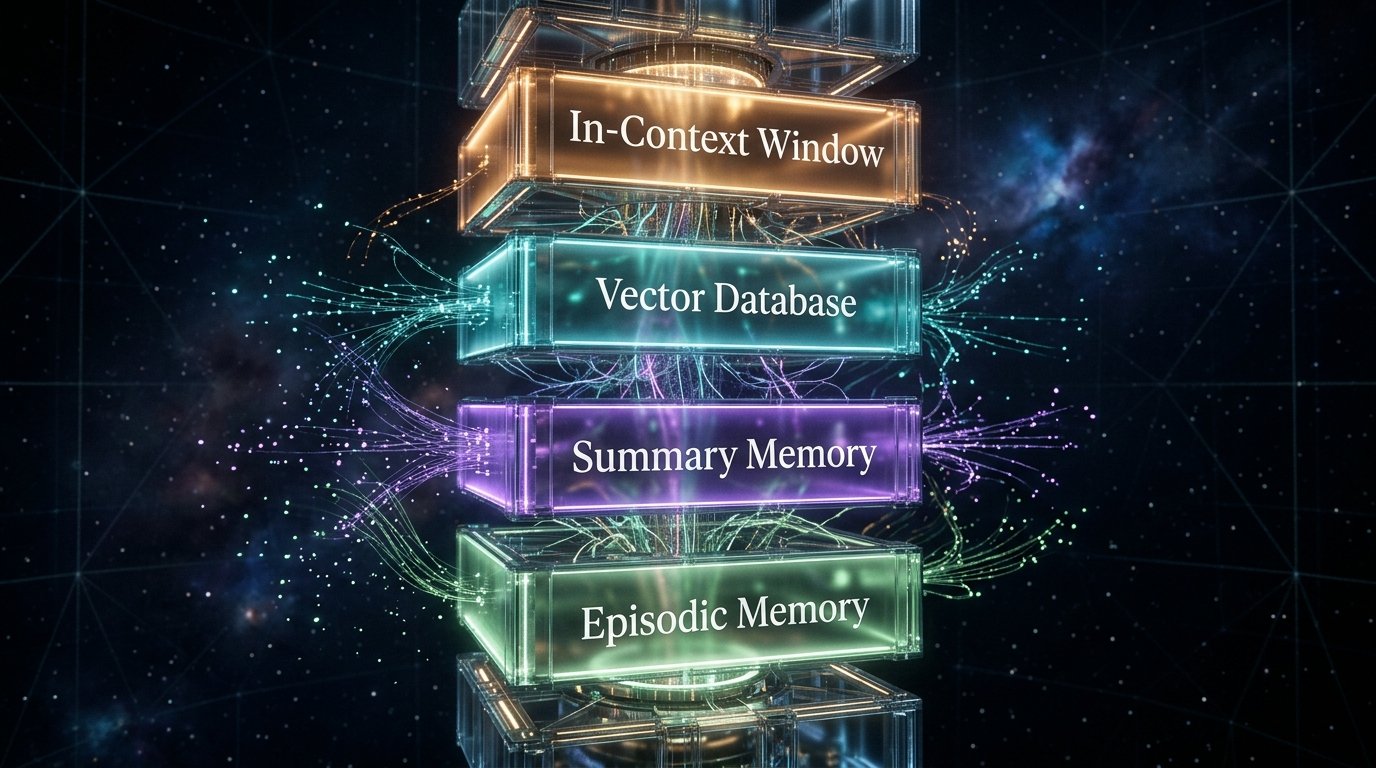

Core Principle of Agentic Memory DesignThe Four Memory Paradigms

Working Memory

( In-Context Window )

The active context window of the model — typically ranging from 4K to 1M+ tokens depending on the model. Everything the model “knows” in a given inference call lives here. It is fast, lossless, and transient: when the conversation ends, it evaporates.

Token efficiency is the critical concern. Every system prompt, tool description, retrieved document, and prior message competes for this precious finite resource.

Immediate Access Token-LimitedExternal Memory

( Vector Databases )

Documents, conversation logs, user profiles, and knowledge chunks stored outside the model as vector embeddings. Retrieval is semantic — searching by meaning rather than keyword. Systems like Pinecone, Weaviate, Chroma, and pgvector power this layer.

The retrieval step adds latency and introduces approximation errors. The quality of embedding models, chunk size, and similarity thresholds directly determine recall accuracy.

Scalable Semantic SearchSummary Memory

( Compressed Long-Term )

Rather than storing raw conversation history, the agent periodically summarizes prior exchanges into condensed narratives using an LLM. These summaries are injected back into future context windows as a compact “memory digest.”

Lossy by nature — nuanced details and exact phrasings are discarded. But for most use cases, the core thread of meaning is preserved at a fraction of the token cost.

Token-Efficient LossyEpisodic Memory

( Event-Tagged Recall )

Discrete memory events — timestamped, tagged by entity, topic, and emotional valence — stored in structured databases. The agent can query: “What did the user say about X last Tuesday?” or “Which past conversation involved billing disputes?”

Requires careful schema design and entity extraction pipelines. Often combined with external vector memory for hybrid recall: structured filtering + semantic matching.

Temporal QueryableRetrieval & Management Strategies

Sliding Window Truncation

The simplest strategy: keep only the N most recent turns within the context window and discard everything older. Fast, deterministic, and trivially implemented. Works well for short-horizon tasks.

- Zero latency overhead

- Fully deterministic

- No external dependencies

- Loses all early context

- No long-term coherence

- Abrupt memory cutoff

Progressive Summarization

When the conversation buffer approaches its limit, an LLM call compresses the oldest N turns into a narrative summary. The summary replaces the raw turns, and the fresh end of the conversation remains intact. Popularized by MemGPT and similar frameworks.

- Retains long-term narrative

- Scales to very long sessions

- Human-readable memory state

- LLM call adds latency

- Lossy compression

- Summarization quality varies

Retrieval-Augmented Generation (RAG)

The dominant paradigm for long-term memory. Conversation history, documents, and user data are embedded and stored in a vector store. At inference time, the agent queries the store with the current user message and injects the top-k retrieved chunks into context. Relevance is determined by cosine similarity of embedding vectors.

- Scales to millions of memories

- Semantic recall by meaning

- Memory persists across sessions

- Retrieval latency (50–300ms)

- Embedding quality dependency

- False positives from similarity

Hierarchical Memory with Tiering

Memory is organized into hot (in-context), warm (compressed summaries), and cold (vector database) tiers. Information cascades from hot to cold over time, with importance scoring determining retention priority at each tier transition. Analogous to CPU cache hierarchy.

- Optimal token efficiency

- Preserves high-signal memories

- Graceful degradation

- Complex to implement

- Importance scoring is hard

- Multiple failure surfaces

Entity & Knowledge Graph Memory

Instead of storing raw text, the agent extracts structured facts about entities (users, products, events) and stores them in a knowledge graph (Neo4j, Memgraph, or similar). Retrieval operates on graph traversal: “find all facts about this user and their relationships.” Excellent for factual consistency across long horizons.

- Highly factually consistent

- Explicit relationship reasoning

- No embedding drift

- Schema design overhead

- Entity extraction errors

- Misses unstructured nuance

Hybrid: RAG + Summary + Entity

Production-grade memory systems combine all three. A short-term window holds recent turns. A summary digest covers the session arc. A vector store covers multi-session recall. An entity store holds persistent user facts. Routing logic decides which layer to query based on the nature of the user’s intent.

- Best of all worlds

- Robust to any horizon

- Graceful fallback chain

- High operational complexity

- Multiple infra dependencies

- Orchestration latency

// Hybrid memory retrieval — production pattern async function retrieveMemory(query: string, userId: string) { const [vectorResults, entityFacts, sessionSummary] = await Promise.all([ vectorStore.search(query, { topK: 5, filter: { userId } }), entityGraph.query(`MATCH (u:User {id: $userId})-[*1..2]-(n) RETURN n`, { userId }), summaryStore.get(userId) ]); // Score and merge: entity facts take priority over semantic similarity const merged = mergeWithPriority({ entityFacts, // weight: 1.0 — hard facts sessionSummary, // weight: 0.8 — narrative arc vectorResults // weight: 0.6 — semantic context }, maxTokens: 2048); return merged; }

Core Challenges in Agent Memory

The Token Budget Problem

Every token in context costs money and latency. The fundamental tension: more memory context means better coherence but higher costs, slower inference, and eventual hard limits — even with 1M-token windows.

Retrieval Precision vs. Recall

Semantic search retrieves what is similar, not what is relevant. A high-recall retriever floods context with noise. A high-precision retriever misses subtle but crucial memories. Tuning this balance is an art.

Temporal Consistency

Memories age. A fact true three sessions ago may be false now. Memory systems need expiry, versioning, and conflict resolution to avoid serving stale or contradictory information into context.

Privacy & Data Isolation

Persistent memory is a security surface. User A’s memories must never contaminate User B’s context. Multi-tenant vector stores require strict namespace isolation and row-level security at retrieval time.

Catastrophic Forgetting

When fine-tuned to remember new information, models tend to overwrite prior knowledge. This is distinct from context-level memory but increasingly relevant as agents use continual learning loops.

Memory Hallucination

Agents can fabricate “memories” — confidently asserting things they were never told. Without grounding retrieval results against verified sources, false memories compound and corrupt agent reliability.

Reference Architecture

A production-ready conversational AI agent orchestrates memory across multiple layers, with a routing layer deciding what to retrieve, when to summarize, and what to persist.

Engineering Best Practices

-

Design memory budgets before writing code

Before any implementation, establish explicit token budgets for each memory layer. Define what percentage of context goes to system prompt, retrieved memories, conversation history, and the current user turn. Violating this budget silently degrades performance — enforce it programmatically at the orchestration layer.

-

Use importance scoring for tiering decisions

Not all memories deserve equal retention. Train or prompt a classifier to score memory importance on dimensions like recency, user preference signal, factual specificity, and emotional salience. Use these scores to decide what gets summarized, what gets kept verbatim, and what gets evicted to cold storage.

-

Store embeddings with rich metadata

A vector embedding alone is insufficient for production retrieval. Always store alongside it: the user ID, session ID, timestamp, memory type, entity tags, and confidence score. This enables hybrid retrieval (vector similarity + structured filtering) which dramatically improves precision.

-

Implement memory versioning and conflict resolution

Users change their minds. Facts become stale. Build a versioning layer that timestamps all memory writes and implements a “latest-wins” or “consensus” conflict resolution strategy. Provide an audit trail so the agent can reason about why it knows something and when it learned it.

-

Evaluate retrieval quality independently

Memory retrieval is a separate subsystem from generation. Evaluate it separately using retrieval benchmarks: Recall@K, Mean Reciprocal Rank (MRR), and latency at P99. Do not rely solely on end-to-end RLHF scores — they mask retrieval failures behind fluent generation.

-

Give users control over their memory

Trust requires transparency. Expose a user-facing memory viewer so users can inspect what the agent remembers about them, correct errors, and delete sensitive memories. Beyond being good UX, this is increasingly a regulatory requirement under GDPR and similar privacy frameworks.

-

Cache expensive memory retrievals aggressively

For high-traffic agents, memory retrieval at every turn is prohibitively expensive. Use a session-level cache keyed on (userId, sessionId) that is invalidated only when new high-importance memories are written. For most turns, the cached memory context is sufficient and retrieval can be skipped entirely.

-

Test memory under long-horizon adversarial scenarios

Memory systems fail gracefully in demos and catastrophically in production. Write integration tests that simulate 100-turn conversations with contradictory user inputs, long gaps between sessions, and adversarial attempts to inject false memories. Measure coherence drift over these extended test cases.