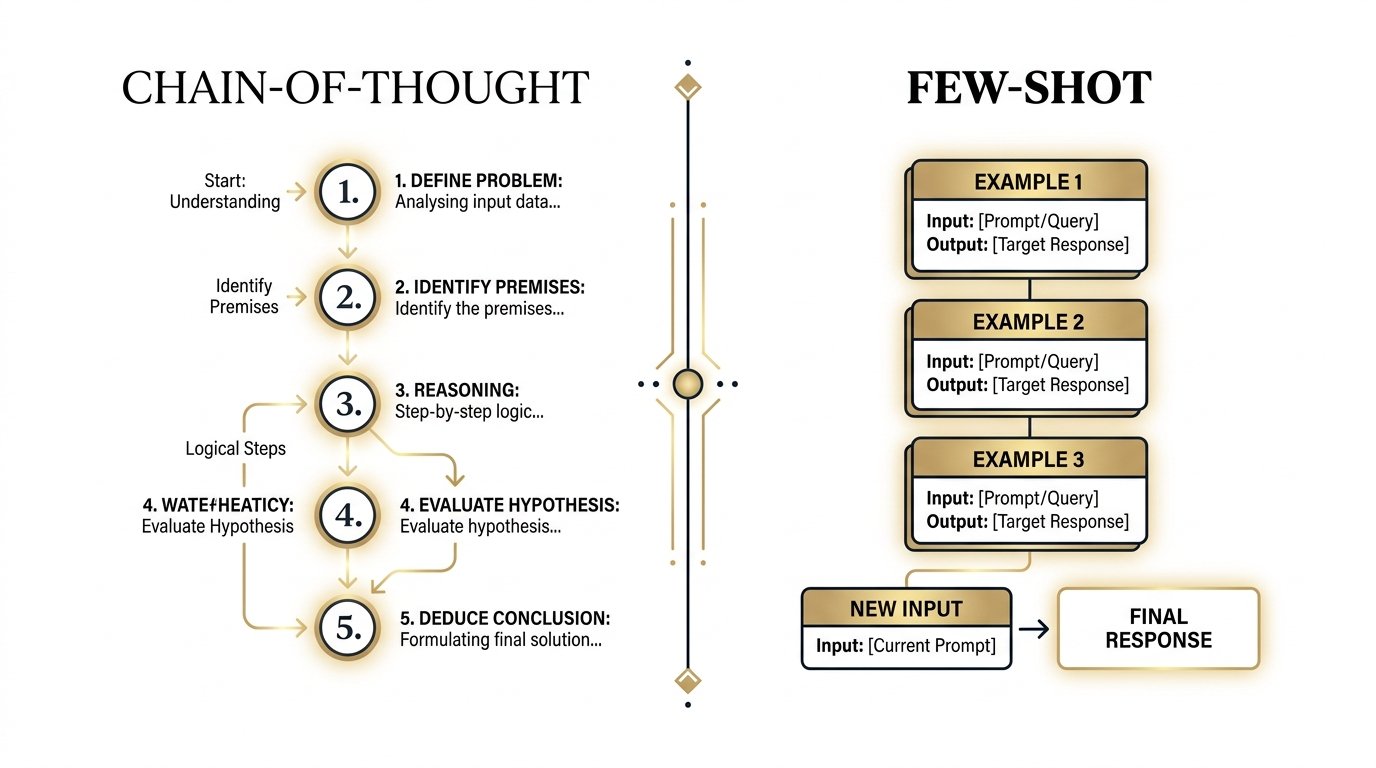

Chain-of-Thought

& Few-Shot Prompting

Two foundational techniques that transform how large language models reason, generalise, and produce reliable, structured responses.

Few-Shot Prompting

Few-shot prompting teaches a model by demonstration rather than description. Instead of explaining the task in abstract terms, you provide a small number of fully-worked input–output examples directly in the prompt. The model infers the pattern and applies it to new inputs.

This is powerful because LLMs are trained to complete sequences. By prepending examples, you effectively condition the model’s generation process on a concrete behavioural template.

# System context (optional) System: Classify the sentiment of each review as Positive, Negative, or Neutral. # Example 1 Input: "The delivery was fast and the packaging was pristine." → Output: Positive # Example 2 Input: "It works, I suppose. Nothing special." → Output: Neutral # Example 3 Input: "Broke after two days. Complete waste of money." → Output: Negative # New query — model completes the pattern Input: "Exceeded every expectation. Already ordered a second one." → Output: ← model generates this

Quality Over Quantity

Research shows diminishing returns beyond 8–16 examples for most tasks. Three to five well-chosen, diverse, and correctly-formatted exemplars almost always outperform ten mediocre ones. Prioritise coverage of edge cases over raw volume.

Chain-of-Thought Prompting

Chain-of-Thought (CoT) prompting elicits step-by-step reasoning from a model before it commits to a final answer. Rather than jumping to a conclusion, the model is guided — either by examples or by a simple instruction — to externalise its intermediate reasoning process.

This dramatically improves performance on tasks requiring multi-step arithmetic, logical inference, commonsense reasoning, and symbolic manipulation, because errors in intermediate steps become visible and correctable.

User: A store sells apples for $0.50 each and oranges for $0.75 each. Maria buys 6 apples and 4 oranges. She pays with a $10 bill. How much change does she receive? Think through this step by step before giving your answer. Model: Step 1 — Cost of apples: 6 × $0.50 = $3.00 Step 2 — Cost of oranges: 4 × $0.75 = $3.00 Step 3 — Total cost: $3.00 + $3.00 = $6.00 Step 4 — Change: $10.00 − $6.00 = $4.00 Answer: Maria receives $4.00 in change.

Zero-Shot CoT — The Magic Phrase

Simply appending “Think step by step” or “Let’s reason through this carefully” to a prompt reliably triggers chain-of-thought behaviour in capable models, with no examples required. Discovered by Kojima et al., 2022.

Few-Shot CoT — Reasoning by Example

Combine both techniques: provide 2–4 examples where each example includes the full reasoning trace alongside the correct answer. The model learns both what to conclude and how to get there.

Self-Consistency — Sample & Vote

Generate multiple independent CoT chains for the same question, then select the most frequently occurring final answer. This ensemble approach significantly reduces variance on complex reasoning benchmarks.

Tree-of-Thought (ToT) — Branching Reasoning

An extension where the model explores multiple reasoning branches simultaneously, evaluates each branch’s promise, and backtracks when a path fails. Particularly effective for planning, search, and puzzle-solving tasks.

Few-Shot + CoT Combined

The most robust approach for complex tasks: provide 3–5 examples that each demonstrate explicit step-by-step reasoning, followed by the new query with an instruction to reason before answering. This leverages the complementary strengths of both techniques simultaneously.

Choosing the Right Strategy

The two techniques are complementary, not competing. Your choice depends on the nature of the task, token budget, and whether you need the model to produce structured output or demonstrate auditable reasoning.

Task type → Recommended strategy ────────────────────────────────────────────────────────── Format/schema output → Few-Shot (show exact structure) Multi-step arithmetic → CoT (externalise calculation) Classification → Few-Shot (define label vocabulary) Logical inference → CoT or Few-Shot CoT Complex reasoning → Few-Shot CoT + Self-Consistency Creative generation → Few-Shot (style anchoring) Planning / puzzles → Tree-of-Thought Simple Q&A → Zero-Shot (CoT optional)

Best Practice: Always test zero-shot first as your baseline. Add few-shot examples when consistency or format compliance is low. Layer in chain-of-thought reasoning when accuracy on multi-step tasks remains insufficient. Reserve self-consistency sampling for high-stakes outputs where correctness matters more than latency.