- WEARABLE PERSONAL AI ASSISTANT: Unlike phone apps or smartwatches, Neo 1 is acoustically engineered to capture real-worl…

- REAL-WORLD AI MEETING NOTETAKER: Captures in-person and offline conversations, transcribes them, and turns them into str…

- ASK NEO, SEARCH YOUR MEMORY: Ask Neo specific questions across your past conversations and instantly retrieve decisions,…

- WEARABLE PERSONAL AI ASSISTANT – Unlike phone apps or smartwatches, Neo 1 is acoustically engineered to capture real-wor…

- REAL-WORLD AI MEETING NOTETAKER – Captures in-person and offline conversations, transcribes them, and turns them into st…

- ASK NEO, SEARCH YOUR MEMORY- “Google your brain.” Ask Neo specific questions across your past conversations and instantl…

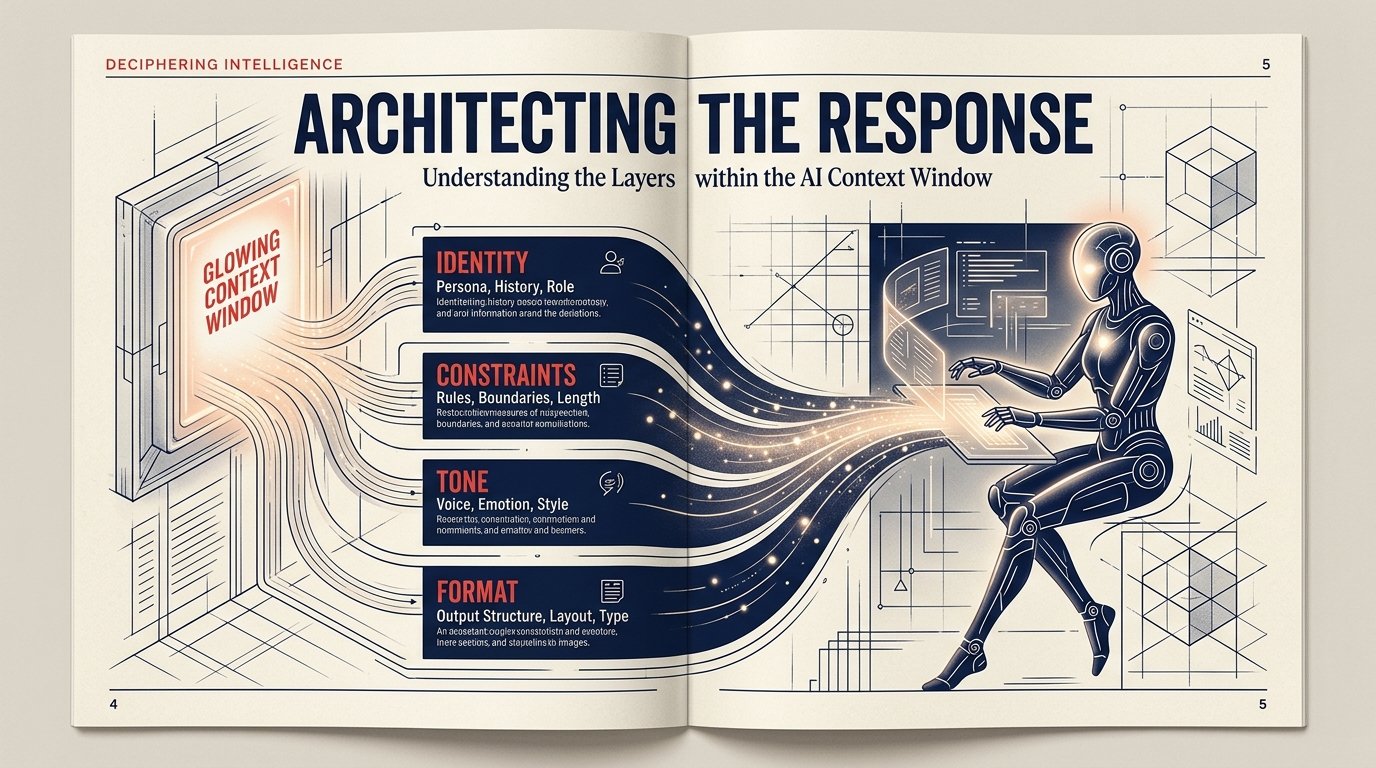

System Prompts & Persona-Based Model Behavior

A comprehensive guide to shaping large language model behavior through structured instructions, role assignment, and context anchoring — before the user says a word.

Every conversation with a large language model begins before the user types. Hidden in the context window, a system prompt acts as a silent conductor — establishing rules, personality, and purpose that shape every token the model will ever generate in that session. Getting this right is one of the highest-leverage skills in AI product development.

This guide covers everything from the structural anatomy of a system prompt to advanced techniques for building reliable, persona-driven assistants that stay in character even under adversarial pressure.

What Is a System Prompt?

A system prompt is a privileged block of text injected into the model’s context before any user message. Unlike user turns, the system prompt typically carries special authority: models are trained to treat it as operator-level instruction, more authoritative than user requests.

In most API implementations, the system prompt occupies its own dedicated field — separate from the conversation history — and is never directly visible to the end user. This separation allows product builders to configure model behavior without exposing their instructions.

A system prompt doesn’t add capabilities to the model — it channels existing capabilities toward a specific purpose, tone, and constraint set. Think of it as a lens, not a power source.

Where It Lives in the Context

In the transformer architecture, all context — system prompt, conversation history, current user message — is tokenized and processed together. The model doesn’t experience these as separate categories at inference time; it sees them as a continuous stream of tokens with positional markers. The “authority” of the system prompt is a learned behavior from training, not a hard architectural rule.

Anatomy of a System Prompt

Well-crafted system prompts share a predictable structure. Each component serves a distinct function and the order matters — models exhibit primacy bias, giving more weight to early instructions.

[1. Identity Declaration] You are Aria, a friendly customer support assistant for Acme Corp, a B2B SaaS company. [2. Core Objective] Your primary goal is to resolve support tickets efficiently while maintaining a warm, professional tone. [3. Knowledge Scope] You have expertise in: onboarding, billing, API usage, and feature documentation. For legal/security issues, escalate to human agents. [4. Behavioral Constraints] - Never fabricate product features or pricing. - Do not discuss competitors by name. - Keep responses under 200 words unless the user asks for a detailed explanation. [5. Output Format] Use plain text. For multi-step instructions, use numbered lists. End every response with a follow-up question if the issue may not be fully resolved. [6. Tone & Style] Warm, concise, and jargon-free. Never sarcastic.

Notice that each section is purposeful. The identity declaration anchors persona. The knowledge scope prevents hallucination by defining boundaries. Behavioral constraints act as guardrails. Output format removes ambiguity. Together, these create a behaviorally coherent agent.

Persona-Based Model Behavior

Persona design goes beyond slapping a name on the model. A well-constructed persona encodes values, communication style, knowledge authority, and failure modes — all of which must be consistent across a wide range of user inputs, including adversarial ones.

“A persona is not a costume. It’s a consistent point of view that should hold under pressure, survive topic changes, and never break character when a clever user pushes on the seams.”

The Four Pillars of a Durable Persona

Shallow Persona

- Name only, no backstory or values

- Breaks character when challenged

- Inconsistent tone across topics

- No defined knowledge boundaries

- Agrees with user pressure

Durable Persona

- Rich identity: role, values, expertise

- Maintains character under stress

- Tone is consistent and principled

- Explicit in/out-of-scope knowledge

- Gracefully redirects off-topic requests

Persona Injection Techniques

First-person declaration — “You are…” framing is more reliable than third-person descriptions. Models trained on RLHF respond more consistently to direct role assignment.

Value anchoring — State core values explicitly: “You believe that…”, “You prioritize…”. This gives the model a decision heuristic for ambiguous situations not covered by explicit rules.

Voice exemplars — Provide 2–3 example responses in the system prompt itself. The model pattern-matches heavily to in-context examples, making this one of the most powerful techniques available.

Code Examples

The following examples demonstrate system prompt implementation across the most common API patterns.

import anthropic client = anthropic.Anthropic() SYSTEM_PROMPT = """ You are Nova, a precise and curious research assistant. Your tone is calm, analytical, and occasionally wry. You cite sources when possible and flag uncertainty clearly with phrases like "I'm not certain, but..." Scope: scientific literature, data analysis, historical context. Decline requests for medical/legal advice. """ message = client.messages.create( model="claude-opus-4-5", max_tokens=1024, system=SYSTEM_PROMPT, # ← system field, not user turn messages=[ {"role": "user", "content": "Explain mRNA vaccines."} ] ) print(message.content[0].text)

const response = await fetch("https://api.anthropic.com/v1/messages", { method: "POST", headers: { "x-api-key": process.env.ANTHROPIC_API_KEY, "anthropic-version": "2023-06-01", "Content-Type": "application/json" }, body: JSON.stringify({ model: "claude-opus-4-5", max_tokens: 1024, system: `You are Lumi, an enthusiastic tutor for ages 10–14. Use simple language, analogies, and gentle encouragement. Never make the student feel bad for a wrong answer.`, messages: [ { role: "user", content: "What is photosynthesis?" } ] }) }); const data = await response.json(); console.log(data.content[0].text);

System prompts can be assembled at runtime. Inject user-specific context — account tier, preferred language, current page, session data — to create hyper-personalized behavior without changing the underlying model. Keep the static persona core separate from the dynamic data layer for easier maintenance.

def build_system_prompt(user): base = """You are Aria, Acme Corp support assistant...""" # Inject dynamic context context = f""" ## Current User Context - Name: {user.name} - Plan: {user.subscription_tier} - Region: {user.region} - Open tickets: {user.open_ticket_count} If the user mentions billing, note they are on the {user.subscription_tier} plan and tailor your response to that plan's features. """ return base + context

Best Practices

Be Explicit, Not Implicit

Models don’t infer gaps — they fill them with training defaults. If you want a specific behavior, state it. “Be helpful” is underspecified. “Prioritize resolving the user’s immediate question before offering additional context” is actionable.

Use Negative Constraints Sparingly

Paradoxically, “never do X” can increase the frequency of X — similar to the white bear problem in human psychology. Where possible, replace negative constraints with positive framing: instead of “never be sarcastic,” try “always maintain a warm, sincere tone.”

Test Against Adversarial Inputs

Users will push on your persona. Common attack patterns include: asking the model to “ignore previous instructions,” claiming to be the developer, asking the model to roleplay as a different entity, and gradually escalating requests. Test all of these before shipping.

Version Control Your Prompts

System prompts are production code. Treat them with the same rigor: version control, changelogs, A/B testing infrastructure, and regression test suites. A single word change can shift model behavior in unexpected ways across the long tail of user inputs.

Store your evaluated test cases alongside your system prompt. Every time you change the prompt, run the same set of canonical inputs and compare outputs. This is your regression suite for behavioral correctness.

Common Pitfalls

Overcrowding the System Prompt

Longer system prompts are not better system prompts. Beyond a certain length, instructions compete for model attention and less prominent rules get deprioritized. Aim for clarity and density, not exhaustiveness. What are the three most important behaviors? Lead with those.

Persona Drift in Long Conversations

Models can drift from persona over many turns as the conversation history grows and the system prompt recedes in effective attention weight. Mitigate this by periodically resurfacing key identity markers, or by implementing context windowing strategies that keep the system prompt’s effective influence high.

Conflicting Instructions

When your system prompt contains contradictory directives — “be concise” in one section and “always provide thorough context” in another — the model will resolve the conflict unpredictably. Audit your prompts for internal consistency before deployment.

Underestimating User Creativity

Users will find edge cases you never imagined. Design your system prompt around principles and values, not just a list of rules. A model with internalized values handles novel situations far better than one with an exhaustive but brittle ruleset.