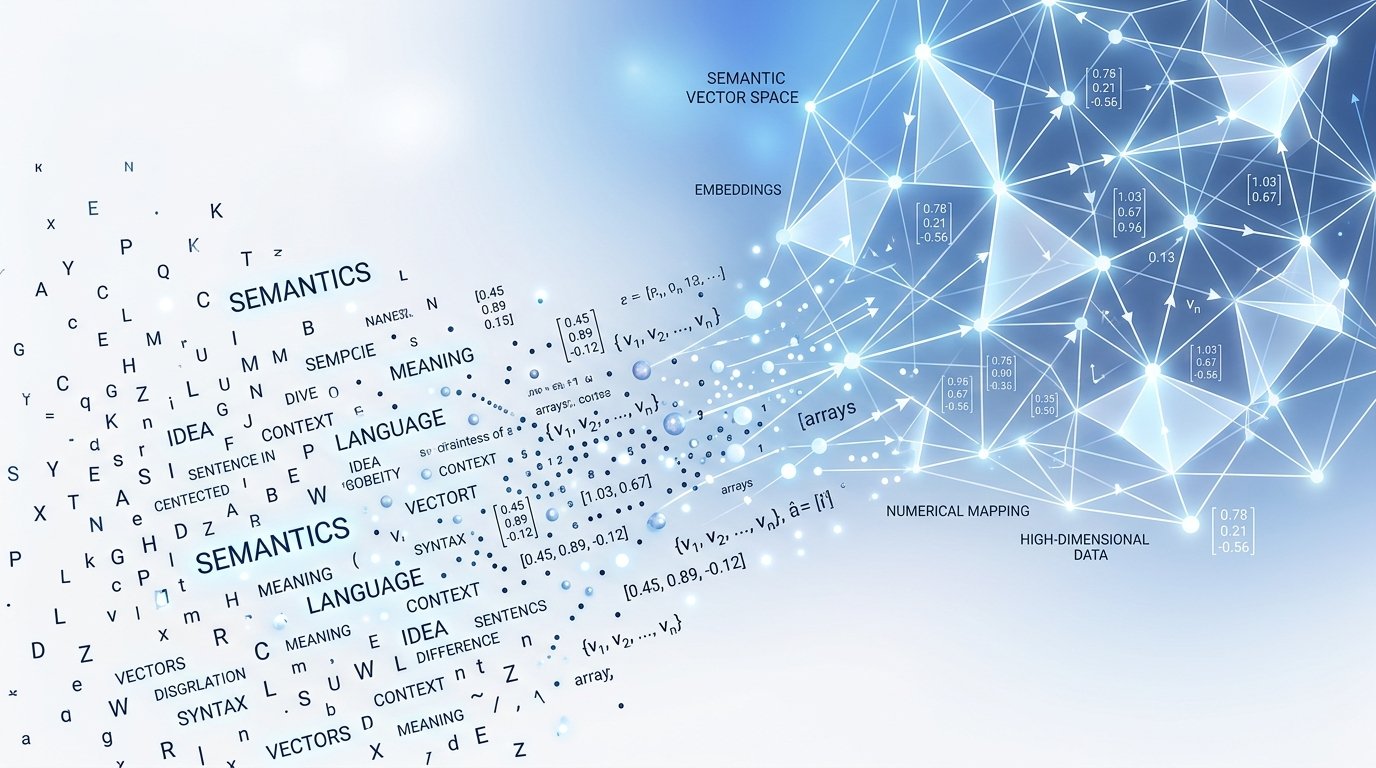

Embedding techniques

🧠 What are text embeddings?

Embeddings are dense numerical representations of text that capture semantic meaning. Instead of treating words as isolated tokens, embedding techniques map sentences, paragraphs, or documents into high-dimensional vector spaces — where similar meanings cluster together. This enables machines to “understand” context, calculate similarity, and power search, clustering, and LLMs.

⚙️ Core embedding techniques

🗂️ Bag-of-Words

Count-based sparse vectors. Simple but loses order & semantics. Great baseline for numeric transformation.

🔤 TF-IDF

Term frequency–inverse document frequency. Weighs rare words higher. Sparse, interpretable, still widely used.

🎯 Word2Vec

Dense neural embeddings (CBOW/Skip-gram). Captures syntactic & semantic relationships using shallow networks.

🌊 GloVe

Global Vectors — counts co-occurrence matrix + factorization. Combines statistics with meaning.

🚀 BERT / Transformers

Contextual embeddings (attention-based). Each token vector changes depending on surrounding words — state of the art.

🧪 Live demo: text → numerical vector

Write any sentence, and see how embeddings transform unstructured text into numeric data. We simulate a dense embedding using a fast conceptual model (normalized TF + hashed n-grams) that produces a 16‑dimension vector — illustrating the core idea of mapping text to numeric arrays.

* Demo embedding combines character trigrams, hash encoding and L2 normalization — mimics dense representation behavior. Real embeddings (e.g., BERT) produce high‑dim vectors with semantic coherence.

🌊 From text to numbers: why it matters

📘 Quick comparison: sparse vs dense

| Bag-of-Words / TF-IDF | Sparse, high-dimensional, interpretable, no semantics beyond term frequency. |

| Word2Vec / GloVe | Dense, lower-dim (100-300), captures analogies, static embeddings. |

| BERT / Sentence Transformers | Contextual dense vectors, state-of-the-art, dynamic per sentence, 384–1024 dims. |